Stanford Online

Statistical Learning with R

SOHS-YSTATSLEARNING

Stanford School of Humanities and Sciences

This is an introductory-level course in supervised learning, with a focus on regression and classification methods. The syllabus includes: linear and polynomial regression, logistic regression and linear discriminant analysis; cross-validation and the bootstrap, model selection and regularization methods (ridge and lasso); nonlinear models, splines and generalized additive models; tree-based methods, random forests and boosting; support-vector machines. Some unsupervised learning methods are discussed: principal components and clustering (k-means and hierarchical).

This is not a math-heavy class, so we try to describe the methods without heavy reliance on formulas and complex mathematics. We focus on what we consider to be the important elements of modern data analysis. Computing is done in R. There are lectures devoted to R, giving tutorials from the ground up, and progressing with more detailed sessions that implement the techniques in each chapter. We also offer a separate version of the course called Statistical Learning with Python: the chapter lectures are the same, but the lab lectures and computing are done using Python.

The lectures cover all the material in An Introduction to Statistical Learning, with Applications in R (second edition) by James, Witten, Hastie and Tibshirani (Springer, 2021). The PDF of this book is available for free on the book website.

Prerequisites

Introductory level understanding of core concepts in statistics, linear algebra, and computing.

Core Competencies

- Overview of statistical learning

- Linear regression

- Classification

- Resampling methods

- Linear model selection and regularization

- Moving beyond linearity

- Tree-based methods

- Support vector machines

- Deep learning

- Survival modeling

- Unsupervised learning

- Multiple testing

The Center for Brains, Minds & Machines

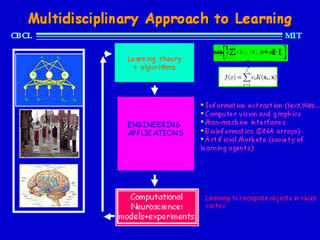

Faculty at CBMM academic partner institutions offer interdisciplinary courses that integrate computational and empirical approaches used in the study of intelligence.

Statistical Learning Theory and Applications

- https://poggio-lab.mit.edu/9-520

The class covers foundations and recent advances of Machine Learning from the point of view of Statistical Learning Theory.

Understanding intelligence and how to replicate it in machines is arguably one of the greatest problems in science. Learning, its principles and computational implementations, is at the very core of intelligence. During the last decade, for the first time, we have been able to develop artificial intelligence systems that can solve complex tasks previously considered out of reach. ATM machines read checks, cameras recognize faces, smart phones understand your voice and cars can see and avoid obstacles.

The machine learning algorithms that are at the roots of these success stories are trained with labeled examples rather than programmed to solve a task. Among the approaches in modern machine learning, the course focuses on regularization techniques, which provide a theoretical foundation for high-dimensional supervised learning. Besides classic approaches such as Support Vector Machines, the course covers state-of-the-art techniques exploiting data geometry (aka manifold learning), sparsity and a variety of algorithms for supervised learning (batch and online), feature selection, structured prediction and multitask learning. Concepts from optimization theory useful for machine learning are covered in some detail (first order methods, proximal/splitting techniques…).

The final part of the course will focus on deep learning networks. It will introduce a theoretical framework connecting the computations within the layers of deep learning networks to kernel machines. It will study an extension of the convolutional layers in order to deal with more general invariance properties and to learn them from implicitly supervised data. This theory of hierarchical architectures may explain how the visual cortex learns, in an implicitly supervised way, data representations that can lower the sample complexity of a final supervised learning stage.

The goal of this class is to provide students with the theoretical knowledge and the basic intuitions needed to use and develop effective machine learning solutions to challenging problems.

9.520/6.7910: Statistical Learning Theory and Applications

Fall 2024

Course Description

Understanding human intelligence and how to replicate it in machines is arguably one of the greatest problems in science. Learning, its principles and computational implementations, is at the very core of intelligence. During the last two decades, for the first time, artificial intelligence systems have been developed that begin to solve complex tasks, until recently the exclusive domain of biological organisms, such as computer vision, speech recognition or natural language understanding: cameras recognize faces, smart phones understand voice commands, smart chatbots/assistants answer questions and cars can see and avoid obstacles. The machine learning algorithms that are at the roots of these success stories are trained with examples rather than programmed to solve a task. This has been a once-in-a-time paradigm shift for Computer Science: shifting from a core emphasis on programming to training-from-examples. This course -- the oldest on ML at MIT -- has been pushing for this shift from its inception around 1990. However, a comprehensive theory of learning is still incomplete, as shown by the several puzzles of deep learning. An eventual theory of learning that explains why deep networks work and what their limitations are may thus enable the development of more powerful learning approaches and perhaps inform our understanding of human intelligence.

In this spirit, the course covers foundations and recent advances in statistical machine learning theory, with the dual goal of a) providing students with the theoretical knowledge and the intuitions needed to use effective machine learning solutions and b) preparing more advanced students to contribute to progress in the field. This year the emphasis is again on b).

The course is organized around the core idea of a parametric approach to supervised learning in which a powerful approximating class of parametric functions -- such as Deep Neural Networks -- is trained, that is, optimized on a training set. This approach to learning is, in a sense, the ultimate inverse problem, in which sparse compositionality and stability play a key role in ensuring good generalization performance.

The content is roughly divided into three parts. The first part is about:

- classical regularization and regularized least squares

- kernel machines, SVM

- logistic regression, square and exponential loss

- large margin (minimum norm)

- stochastic gradient methods

- overparametrization, implicit regularization

- approximation/estimation errors.
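As a rough illustration of the first topic in this list, the following is a minimal sketch of regularized least squares in the simplest possible setting: one feature, no intercept, and a squared-norm penalty. The objective scaling, the function name `ridge_1d`, and the data are assumptions chosen for illustration and are not taken from the course materials.

```python
def ridge_1d(xs, ys, lam):
    """Return the weight w minimizing sum((y - w*x)**2) + lam * w**2.

    Setting the derivative with respect to w to zero gives the closed form
    w = sum(x*y) / (sum(x*x) + lam).
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


# Hypothetical data lying exactly on the line y = 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w_ols = ridge_1d(xs, ys, lam=0.0)     # no penalty: ordinary least squares
w_ridge = ridge_1d(xs, ys, lam=30.0)  # a large penalty shrinks w toward zero
```

With `lam=0.0` the sketch recovers the least-squares slope, and increasing `lam` pulls the weight toward zero, which is the shrinkage effect that regularization trades against fit to the training set.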

The second part is about deep networks and, in particular, about:

- approximation theory -- why deep networks avoid the curse of dimensionality and are universal parametric approximators

- optimization theory -- how weights and activation evolve in time and across layers during training with SGD

- learning theory -- how generalization in deep networks can be explained in terms of the complexity control implicit in regularized (or unregularized) SGD and in terms of the details of the sparse compositional architecture itself.

The third part is about a few topics of current research, starting with the connections between learning theory and the brain, which was the original inspiration for modern networks and may provide ideas for future developments and breakthroughs in the theory and the algorithms of learning. Throughout the course, and especially in the final classes, there will be talks by leading researchers on selected advanced research topics.

Apart from the first part on regularization, which is an essential part of any introduction to the field of machine learning, this year's course is designed for students with a good background in ML.

Prerequisites

We will make extensive use of basic notions of calculus, linear algebra and probability. The essentials are covered in class and in the math camp material. We will introduce a few concepts in functional/convex analysis and optimization. Note that this is an advanced graduate course and some exposure to introductory Machine Learning concepts or courses is expected: for Course 6 students, the prerequisites are 6.041 and 18.06 and (6.036 or 6.401 or 6.867).

Requirements for grading are attending lectures/participation (10%), three problem sets (30%) and a final project (60%). Use of LLMs on the final project -- such as ChatGPT -- is allowed, but it must be disclosed.

Classes are conducted in-person.

Grading policies, problem set and project due dates: (slides)

Problem Sets

- Problem Set 1, out: Tue. Sept. 17, due: Tue. Oct. 01

- Problem Set 2, out: Thu. Oct. 03, due: Thu. Oct. 17

- Problem Set 3, out: Thu. Oct. 17, due: Thu. Oct. 31

Submission instructions: Follow the instructions included with the problem set. Use the Latex template for the report. Submit your report online through Canvas by the due date/time.

Guidelines and key dates. Online form for project proposal (complete by Mon. Oct. 14).

Final project reports (5 pages for individuals, 8 pages for teams, NeurIPS style) are due on Tue. Dec. 10.

Projects archive

List of Wikipedia entries, created or edited as part of projects during previous course offerings.

Navigating Student Resources at MIT

This document has more information about navigating student resources at MIT.

Units: 3-0-9 H,G

Class Times: Tuesday and Thursday: 11:00 am - 12:30 pm

Location: 46-3002 (Singleton Auditorium)

Instructors: Tomaso Poggio (TP), Lorenzo Rosasco (LR), Michael Lee (ML), Akshay Rangamani (AR), Pierfrancesco Beneventano (PB), Andrea Pinto (AP), Eran Malach (EM)

TAs: Michael Lee, Dan Mitropolski, Ziyin Liu

Office Hours: Wednesdays 3PM - 4PM (46-5193)

Email Contact: [email protected]

Previous Class: FALL 2023, FALL 2022, FALL 2021, FALL 2020, FALL 2019

Canvas page: https://canvas.mit.edu/courses/22714

Statistics, University of Illinois at Urbana-Champaign

STAT 542: Statistical Learning

Instructor: Feng Liang (liangf AT illinois DOT edu)

Office: 113D Illini Hall

Phone: (217) 333-6017

Registration (F23) The instructor cannot manually enroll any students. If you have any questions related to registration and enrollment of STAT 542, please contact the registration office.

- For non-STAT students, registration is open during the first week of Fall 2023 instruction.

- An online version of STAT 542, usually offered in the Fall, is designed for the Online Master of Computer Science in Data Science (MCS-DS) and is NOT open to UIUC students outside that program.

- “The Elements of Statistical Learning: Data Mining, Inference and Prediction” by Trevor Hastie, Robert Tibshirani, and Jerome Friedman.

- “An Introduction to Statistical Learning with Applications in R” by Gareth James, Daniela Witten, Trevor Hastie and Robert Tibshirani. Slides, videos and solutions can be found here.

Lecture [ S19 ]

Prerequisites Knowledge of basic multivariate calculus, statistical inference, and linear algebra. You should be comfortable with the following concepts: probability distribution functions, expectations, conditional distributions, likelihood functions, random samples, estimators and linear regression models.

I suggest that non-STAT students pick up some basic knowledge of statistical inference and data analysis from Wiki pages, online lecture notes, and textbooks for courses at the level of STAT 410 / 425 and STAT 432.

Course Description This course provides an introduction to modern techniques for statistical analysis of complex and massive data. Examples include model selection for regression/classification, nonparametric models including splines and kernel models, regularization, model ensembles, recommender systems, and clustering analysis. Applications are discussed as well as computation and theoretical foundations.

Computing The homework assignments and the projects will involve some computing. You are expected to have some prior programming experience with either R or Python.

53 Fun and Interesting Statistics Activities

This blog post contains Amazon affiliate links. As an Amazon Associate, I earn a small commission from qualifying purchases.

Looking for fun and interesting ways to incorporate data collection into your stats class? Check out this list of 53 fun and interesting statistics activities I have done with my students over the years in Algebra 1, Algebra 2, and Statistics class.

Measures of Central Tendency Activities

Level the Towers Activity for Introducing Mean

This activity will help your students visually understand what we are doing to a data set when we find the mean.
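For readers who would like to see the same idea on a computer, here is a minimal sketch of "leveling" towers of blocks. It assumes the activity amounts to moving one block at a time from the tallest tower to the shortest, which is a guess at the mechanics rather than a description from the post; the function name and tower heights are hypothetical.

```python
def level_towers(heights):
    """Move one block at a time from the tallest tower to the shortest.

    Stops when no tower is more than one block taller than another.
    Returns the final heights and the number of blocks moved.
    """
    towers = list(heights)
    moves = 0
    while max(towers) - min(towers) > 1:
        towers[towers.index(max(towers))] -= 1  # take a block off the tallest
        towers[towers.index(min(towers))] += 1  # put it on the shortest
        moves += 1
    return towers, moves


# Hypothetical towers: 20 blocks shared among 4 towers, so the mean is 5.
leveled, moves = level_towers([2, 7, 3, 8])
mean = sum([2, 7, 3, 8]) / 4
```

When the total number of blocks divides evenly among the towers, every leveled tower ends at the mean height, which is the "fair share" picture of the mean.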

Tenzi vs Splitzi Measures of Central Tendency Activity

This Tenzi vs Splitzi data collection activity was the perfect set-up for practicing finding measures of central tendency with my Algebra 1 students.

Always Sometimes Never Activity for Mean, Median, Mode, and Range

Check out this Always Sometimes Never Activity for Mean, Median, Mode, and Range. This was the perfect way to review the measures of central tendency with my Algebra 1 students. I used this activity as a last-minute review before our quiz over finding measures of central tendency.

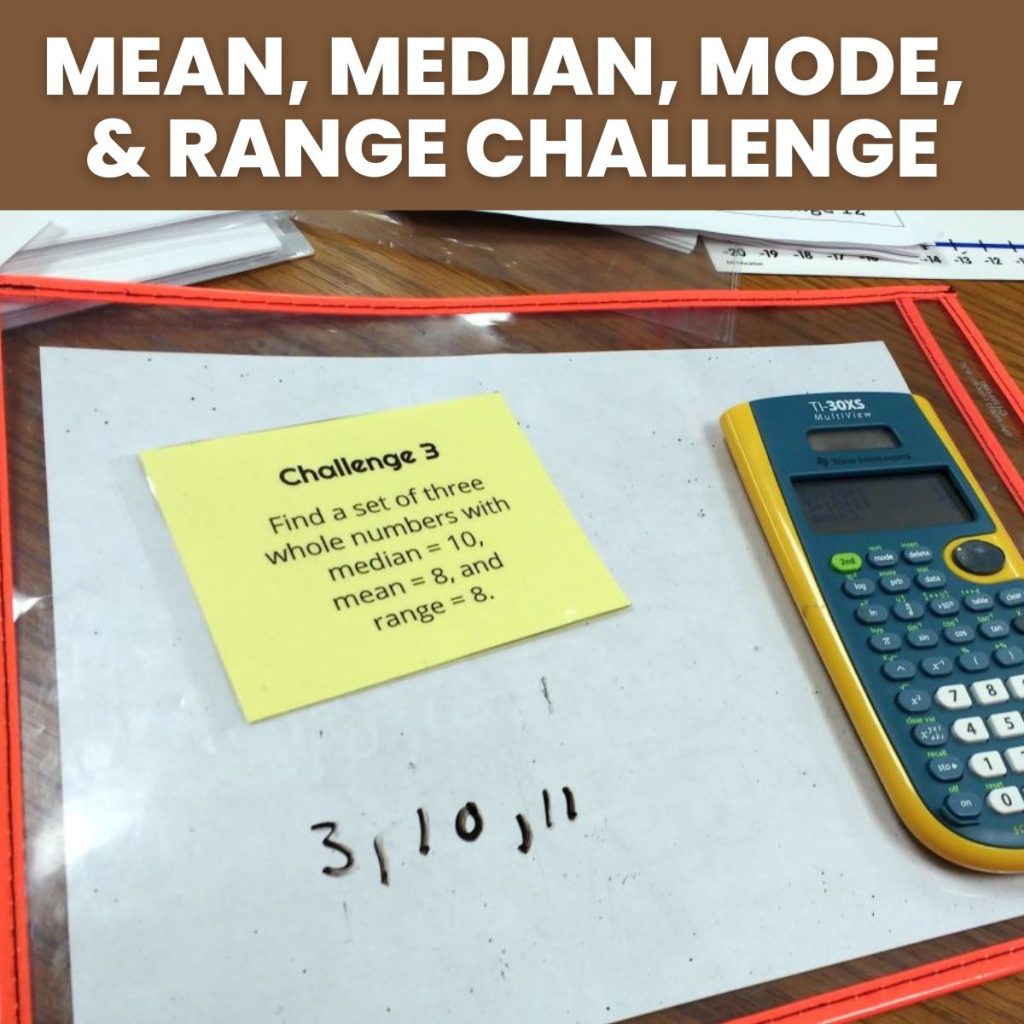

Mean, Median, Mode, and Range Challenge Activity

Can you find a set of numbers that satisfies each challenge involving the mean, median, mode, and range of the data set?

Mean, Median, Mode, and Range Spider Puzzles

Students must add one number to the data set to make it have various means, medians, modes, and ranges. My students did not want to put this activity down!

Measures of Central Tendency Graphic Organizers

I created these measures of central tendency graphic organizers for my Algebra 1 students to review how to find mean, median, mode, and range at the beginning of our data analysis unit.

Categorical vs Quantitative Variables Activities

Categorical vs Quantitative Variables Card Sort Activity

I created a categorical vs quantitative variables card sort that I would like to share with you.

Categorical vs Quantitative Variables Hold-Up Cards Activity

I created these categorical vs quantitative variables hold-up cards to help me understand how well my statistics students were grasping the concepts of categorical and quantitative variables.

Emergency Rooms Card Sort Activity for Categorical and Quantitative Variables

Looking for a fun way to assess student understanding on categorical and quantitative variables? Check out this card sort activity I created for my statistics classes involving data that could be collected in an emergency room.

Statistics and Data Analysis Activities

Kentucky Derby Winning Times Dot Plot Analysis

One of my favorite graphs to use to practice SOCS in statistics is this Kentucky Derby Winning Times graph that I found in Stats: Modeling the World. To practice describing SOCS, I had my class look at this dotplot of the Kentucky Derby Winning Times between 1875 and 2008.

SOCS Foldable for Statistics

I’m pretty happy with how this SOCS foldable turned out that I created for my stats class. We’re practicing identifying the shape, outliers, center, and spread of a quantitative variable.

IQR vs Standard Deviation Card Sort Activity

My stats students had just finished up learning to find IQR by hand and by calculator and standard deviation by hand and by calculator. I made this card sort activity to help students learn when they should report IQR vs standard deviation.

5 Number Summary 5 Finger Summary

Check out this interactive notebook over the 5 elements of a 5 number summary.

Blind Stork Test for Data Collection

How hard can it be to stand on one foot with your eyes closed? Your students will find out with this fun blind stork test that is perfect for collecting data to analyze in Algebra 1.

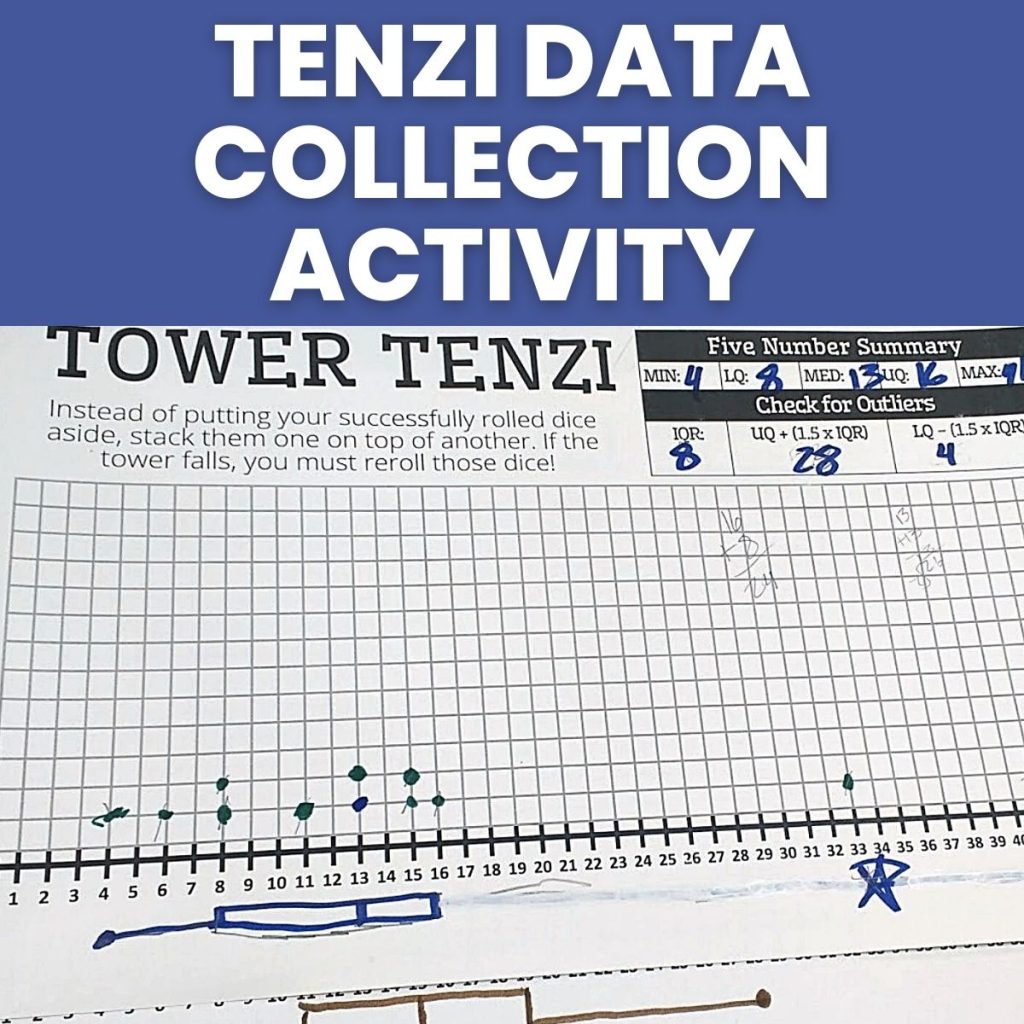

Tenzi Data Collection Activity for Comparing Data Sets

Data collection is fun when it involves playing Tenzi! Students will play various Tenzi variants and use their collected data to create boxplots which can be easily compared to determine “Which version of Tenzi is the hardest?”

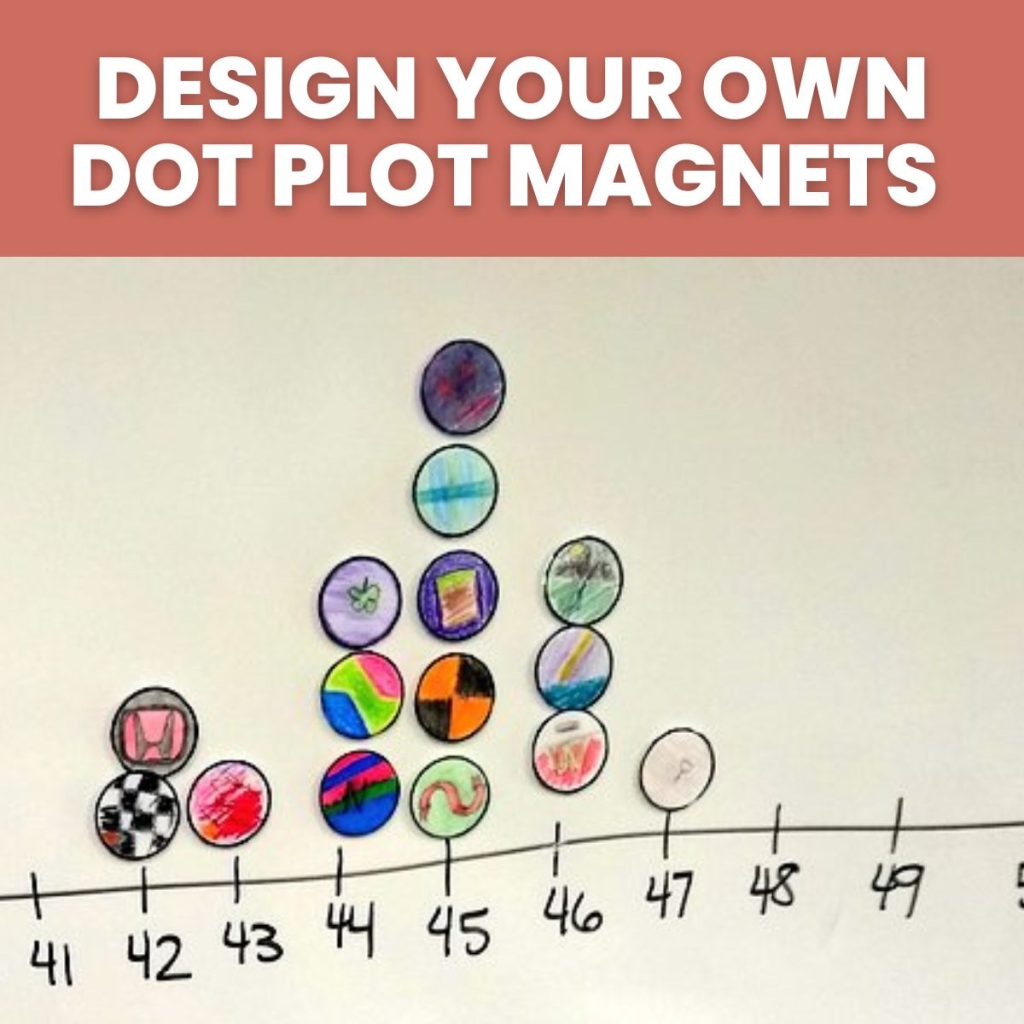

Design Your Own Dot Plot Magnets

Your Algebra 1 students will love creating their own custom magnet which can be used to create class dot plots.

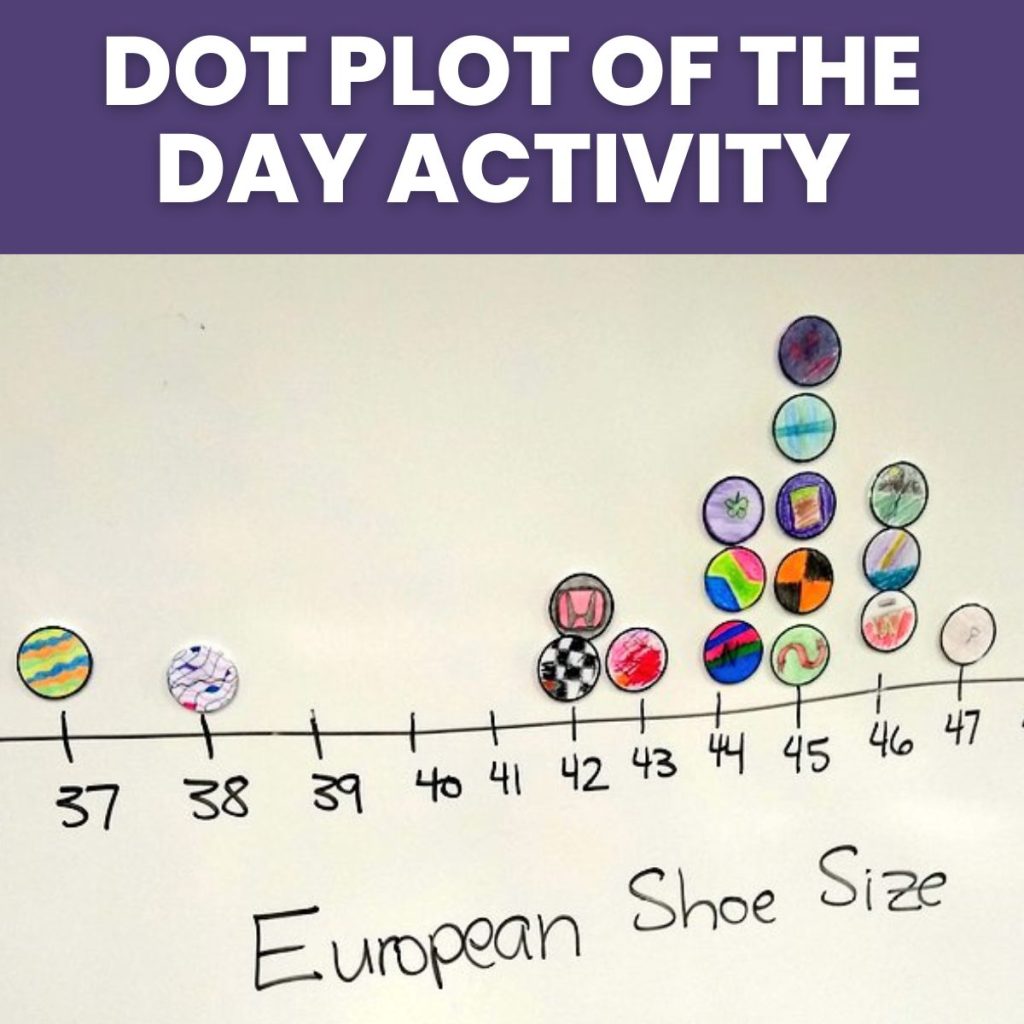

Dot Plot of the Day Activity

Don’t settle for random data sets from your textbook to analyze. In this dot plot of the day activity, students will practice describing data sets involving data collected directly from their classmates.

How Many States Have You Visited Data Collection Activity

Once you have created your dot plot magnets, ask your students “How Many States Have You Visited?” This is a great first dot plot to make and analyze as a class.

Dot Plot Matching Card Sort Activity

The numbers and labels are missing from these dotplots. Can you use your logical reasoning skills to match each dotplot with what it represents?

Count the Objects Data Collection Task

How many items will your students count in the allotted time? Have your students create a graphical display of the results. How does the true value compare to the class results?

Analyzing the Ages of Academy Award Winners

This data set analyzing the ages of male and female academy award winners is the perfect introduction to back-to-back stem-and-leaf plots.

Highlights Hidden Pictures Data Collection Activity for Comparing Data Sets

Students were given a plastic sleeve with a hidden picture puzzle and a card with a picture to locate within the puzzle, a MyChron timer, and a data recording sheet to glue into their interactive notebooks.

Game of Greed

I want to share a statistics foldable I made for the Game of Greed. This dice game is such a fun way to collect data!

Boxplot and Histogram Card Sort Activity

My stats students were struggling with boxplots. So, I decided to take a break from making boxplots to letting them look at boxplots that were already made. A quick google search led me to this boxplot and histogram card sort activity.

Looking for Outliers in the OKC Thunder

Students will practice finding a five number summary and determining if there are any outliers by looking at salary data for the OKC Thunder.
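For checking hand calculations, here is a minimal sketch of a five number summary and the common 1.5 × IQR outlier rule. It assumes quartiles are found as medians of the lower and upper halves of the sorted data (one of several textbook conventions), and the salary figures are made up for illustration rather than real OKC Thunder data.

```python
from statistics import median


def five_number_summary(data):
    """Return (min, Q1, median, Q3, max) using the median-of-halves convention."""
    s = sorted(data)
    half = len(s) // 2
    lower = s[:half]
    # With an odd count, the middle value belongs to neither half.
    upper = s[half:] if len(s) % 2 == 0 else s[half + 1:]
    return s[0], median(lower), median(s), median(upper), s[-1]


def find_outliers(data):
    """Return values more than 1.5 * IQR below Q1 or above Q3."""
    _, q1, _, q3, _ = five_number_summary(data)
    iqr = q3 - q1
    low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [x for x in data if x < low_fence or x > high_fence]


# Hypothetical salaries in millions of dollars (not real team data).
salaries = [1.2, 1.5, 2.0, 2.4, 3.1, 4.0, 5.5, 7.2, 17.8, 28.5]
summary = five_number_summary(salaries)
outliers = find_outliers(salaries)
```

Calculators and software sometimes use a different quartile convention, so small differences from this sketch are expected on some data sets.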

5 W’s and H Foldable

I created this 5 W’s and H Foldable for my Stats class to glue in their statistics interactive notebooks (INBs).

Dry Erase Workmat for Finding Five Number Summary, IQR, and Outliers

Students use this helpful dry erase workmat to help them organize their work while finding the five number summary of a data set and using that to find IQR and outliers.

Graphs In the News Statistics Foldable

I made this Graphs in the News Statistics Foldable to give my stat students practice analyzing graphs of categorical data. I pulled some real-life data displays from the internet. Then, I had students discuss these questions together as a group. I loved hearing my students reason through these!

Estimating 30 Seconds Data Collection Activity

How well can your students estimate 30 seconds? This is the perfect quick data collection activity to get a fun set of data to analyze.

Types of Data Displays Foldable

I created this types of data displays foldable for my Algebra 1 students to review bar graphs, stem-and-leaf graphs, box-and-whisker plots, and circle graphs.

Let’s Make a Graph Activity

I created this let’s make a graph activity to give my Algebra 1 students practice making various types of data displays. We needed to practice making bar graphs, box-and-whisker-plots, circle graphs, and stem-and-leaf graphs.

Paper Airplane Lab

Recently, my statistics class was working on a paper airplane lab to explore the effect of the wingspan of a paper airplane on the distance traveled by the plane.

Gummy Bear Catapults

Recently, my statistics students have been doing an activity with gummy bear catapults.

Hiring Discrimination Simulation for Statistics

This year, I decided I wanted to start my statistics class off with a statistical simulation to give them a taste of what was in store for the year. I ran across mention of a hiring discrimination simulation on another blog, and I thought it would make the perfect first activity.

Are your Graphs OK? TULSA Graphing Posters

I want to share a set of TULSA Graphing Posters here on the blog today. Does your graph have a Title, Units, Labels, Scales, and Accuracy?

Normal Distribution Activities

Normal Distribution Question Stack Activity

This normal distribution question stack activity was my introduction to a new practice structure for math class: question stacks. Since then, I have gone on to create question stacks for a large number of math topics.

Scatterplot Activities

M&M’s Scatter Plot Activity

Students take turns building scatter plots using M&M’s and having their classmates determine if the scatterplot has positive, negative, or no correlation.

Candy Grab Lab for Linear Regression

This Candy Grab Lab is one of my favorite ways to introduce students to the process of linear regression.

Twizzlers Linear Regression Lab

I learned about using twizzlers for linear regression from Tammy Ballard with Wake County Public Schools. I modified her activity to create my own twizzlers linear regression lab that met the needs of my specific group of algebra students.

Best Line of Best Fit Contest

This Desmos activity kept students engaged and interested while learning how to draw lines of best fit by hand.

Hula Hoop Scatterplot Activity

This hula hoop scatterplot activity gets students moving in math class while learning about how to create a scatterplot and use that scatterplot and linear regression to make predictions.

Starburst Scatterplot Activity

This starburst scatterplot activity ended up being one of my favorite activities of the year!

Tongue Twister Linear Regression Activity

I created this tongue twister linear regression activity for my algebra students to use while collecting tongue twister data in their small groups. Students work in groups to collect data regarding the amount of time it takes various numbers of people to recite a tongue twister.

Bouncing Tennis Balls Linear Regression Activity

Warning: don’t try this bouncing tennis balls linear regression activity if your classroom is on the 2nd floor. The science teacher below your classroom will not appreciate it.

Linear Regression Activity with the True Colors Personality Test

I was inspired to give my students the true colors personality test after attending a workshop where we did this personality test as one of our activities. We took turns going around the room saying what color we found as our result.

When comparing the amount of time it took for different numbers of students, we were able to perform a linear regression to figure out how long it would take the entire class to speak their results.
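A minimal sketch of that kind of prediction is shown here, fitting a least-squares line by hand and extrapolating to a full class. The timing data, the class size of 25, and the function name `fit_line` are hypothetical and only illustrate the calculation.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: number of students speaking, and seconds taken.
students = [2, 4, 6, 8]
seconds = [5.0, 9.0, 13.0, 17.0]

slope, intercept = fit_line(students, seconds)
# Extrapolate to a hypothetical class of 25 students.
predicted_seconds = slope * 25 + intercept
```

Because 25 lies well outside the range of the collected data, the prediction is an extrapolation and is worth comparing against a real timing of the whole class.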

Statistics Projects

Response Bias Project

Instead of giving my statistics students a semester test, I chose to assign them a response bias project based on one shared online by Josh Tabor.

Confidence Interval Projects

I’m excited today to share the results of our confidence interval projects in statistics.

Statistics Survey Project

Before Spring Break, I gave my statistics students the assignment to design their own survey projects. We spent well over a week on this project, but I definitely think it was time well spent.

Probability Activities

Hex Nut Probability Activity

All you need for this fun and engaging hex nut probability activity are hex nuts, empty soda bottles, and a plastic ring.

Mystery Box Probability Activity

Can your students use their knowledge of probability to determine the contents of the mystery box?

Blocko Probability Game

This Blocko Probability game is perfect for introducing the difference between experimental and theoretical probability to students.

I play the game with linking cubes with my students, but you could actually use any collection of small items.
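To compare experimental and theoretical probability on a computer, here is a minimal sketch that assumes the game is driven by the sum of two six-sided dice. That setup is an assumption made for illustration and is not described in the post; the function names and trial count are likewise hypothetical.

```python
import random
from fractions import Fraction


def theoretical_probability(total):
    """Exact probability that two fair six-sided dice sum to `total`."""
    ways = sum(1 for a in range(1, 7) for b in range(1, 7) if a + b == total)
    return Fraction(ways, 36)


def experimental_probability(total, trials, seed=0):
    """Fraction of simulated two-dice rolls that sum to `total`."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = sum(
        1 for _ in range(trials)
        if rng.randint(1, 6) + rng.randint(1, 6) == total
    )
    return hits / trials


theory_seven = theoretical_probability(7)           # Fraction(1, 6)
observed_seven = experimental_probability(7, 20000)
```

The experimental value changes with the seed and the number of trials, and it tends to settle closer to the theoretical value as the number of rolls grows.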

Probability Bingo

Probability Bingo is not your typical bingo game!

Students fill their bingo boards based on the color combinations they think will appear the most. The winner is the person who fills out their entire bingo board first.

Greedy Pig Dice Game

I have been playing this greedy pig dice game with my students to practice probability since I learned it while student teaching!

All you need for this game is a set of dice!

Venn Diagram Template with Guess My Rule Cards

I created this Venn Diagram template to use with Guess My Rule cards, but it could be used in so many different ways in the math classroom.

More Activities for Teaching Statistics

Sarah Carter teaches high school math in her hometown of Coweta, Oklahoma. She currently teaches AP Precalculus, AP Calculus AB, and Statistics. She is passionate about sharing creative and hands-on teaching ideas with math teachers around the world through her blog, Math = Love.

Course Info

- Prof. Tomaso Poggio

Departments

- Brain and Cognitive Sciences

As Taught In

- Probability and Statistics

- Computation and Systems Biology

- Neuroscience

- Cognitive Science

Learning Resource Types

Statistical Learning Theory and Applications

Course Description

Statistical Learning

STAT 432 @ UIUC

What’s Going On

- It is currently Week 17.

- If you have any questions, post on Piazza or stop by office hours!

Week 00 Aug 17 - Aug 21

Syllabus week! Welcome to STAT 432!

Assignments

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 105% |

| Link | Source |
|---|---|
| | Course Website |
| | Course Website |
| | Instructor Website |
| | Instructor Website |
| | Basics of Statistical Learning |

Week 01 Aug 24 - Aug 28

First official week of class! Machine learning overview. Probability, statistics, and computing review.

- Keywords: Supervised Learning, Unsupervised Learning, Regression, Classification, Density Estimation, Clustering, Outlier Detection, Dimension Reduction

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 100% |
| | Friday, 11:59 PM | 105% |

| Link | Source |
|---|---|
| | Basics of Statistical Learning |
| | Basics of Statistical Learning |
| | Basics of Statistical Learning |
| | Basics of Statistical Learning |

| Title | Link | Mirror |
|---|---|---|
| 1.1 - Welcome! | | |
| 1.2 - R and RStudio Setup | | |
| 1.3 - Just Hit [tab] | | |
| 1.4 - Machine Learning Tasks | | |

Office Hours

| Staff and Link | Day | Time |
|---|---|---|
| | Wednesday | 4:00 PM - 5:00 PM |
| | Wednesday | 7:00 PM - 8:00 PM |
| | Wednesday | 8:00 PM - 10:00 PM |
| | Thursday | 4:00 PM - 5:00 PM |
| | Thursday | 7:00 PM - 8:00 PM |
| | Thursday | 8:00 PM - 10:00 PM |

Week 02 Aug 31 - Sep 04

Introduction to regression for prediction tasks and supervised learning in general.

- Keywords: Regression, Linear Regression, Prediction, Conditional Mean, RMSE, Test-Train Split
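Two of these keywords, the test-train split and RMSE, can be sketched in a few lines. The course itself uses R; this illustration uses Python with only the standard library, and the function names, the 25% test fraction, and the data are assumptions made for the example.

```python
import math
import random


def train_test_split(rows, test_fraction=0.25, seed=0):
    """Shuffle a copy of the rows and split them into (train, test)."""
    rng = random.Random(seed)
    shuffled = rows[:]
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical (x, y) rows with y = 2x + 1.
rows = [(x, 2.0 * x + 1.0) for x in range(20)]
train, test = train_test_split(rows)

# Baseline model: predict the training mean of y for every test row.
baseline = sum(y for _, y in train) / len(train)
test_rmse = rmse([y for _, y in test], [baseline] * len(test))
```

The key point of the split is that `test_rmse` is computed on rows the model never saw during fitting, so it estimates prediction error rather than training fit.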

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 85% |
| | Friday, 11:59 PM | 100% |
| | Friday, 11:59 PM | 105% |

| Link | Source |
|---|---|
| | Basics of Statistical Learning |

| Title | Link | Mirror |
|---|---|---|
| 2.1 - Linear Regression | | |
| 2.2 - Linear Regression in R | | |

| Staff and Link | Day | Time |
|---|---|---|
| | Wednesday | 4:00 PM - 5:00 PM |
| | Wednesday | 5:00 PM - 7:00 PM |
| | Wednesday | 7:00 PM - 8:00 PM |
| | Wednesday | 8:00 PM - 10:00 PM |
| | Thursday | 4:00 PM - 5:00 PM |
| | Thursday | 7:00 PM - 8:00 PM |
| | Thursday | 8:00 PM - 10:00 PM |

Week 03 Sep 07 - Sep 11

Introduction to nonparametric regression and discussions of model flexibility, the bias-variance tradeoff, and overfitting.

- Keywords: Nonparametric Regression, k-Nearest Neighbors, Decision Trees, Model Flexibility, Bias-Variance Tradeoff, Overfitting, No Free Lunch, Curse of Dimensionality
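As a small illustration of k-nearest neighbors regression and of how k controls model flexibility, here is a minimal one-feature sketch. It is written in Python rather than the R used in the course, and the function name and data are hypothetical.

```python
def knn_predict(train_x, train_y, x0, k):
    """Predict y at x0 as the average y of the k training points nearest x0."""
    by_distance = sorted(zip(train_x, train_y), key=lambda pair: abs(pair[0] - x0))
    nearest = by_distance[:k]
    return sum(y for _, y in nearest) / k


# Hypothetical training data with one distant point.
train_x = [1.0, 2.0, 3.0, 10.0]
train_y = [1.0, 2.0, 3.0, 10.0]

flexible = knn_predict(train_x, train_y, x0=2.1, k=1)  # follows the nearest point
smoother = knn_predict(train_x, train_y, x0=2.1, k=3)  # averages three neighbors
rigid = knn_predict(train_x, train_y, x0=2.1, k=4)     # averages all the data
```

Small k gives a flexible fit that tracks individual points (low bias, high variance), while k equal to the training-set size predicts the overall mean everywhere (high bias, low variance).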

| Title | Link | Mirror |
|---|---|---|
| 3.1 - Nonparametric Regression | | |
| 3.2 - KNN and Trees in R | | |
| 3.3 - Supervised Learning Concepts | | |

| Staff and Link | Day | Time |
|---|---|---|
| | Wednesday | 4:00 PM - 5:00 PM |
| | Wednesday | 5:00 PM - 7:00 PM |
| | Wednesday | 7:00 PM - 8:00 PM |
| | Wednesday | 8:30 PM - 10:30 PM |
| | Thursday | 4:00 PM - 5:00 PM |
| | Thursday | 7:00 PM - 8:00 PM |
| | Thursday | 8:00 PM - 10:00 PM |

Week 04 Sep 14 - Sep 18

This week we will introduce the classification task in detail.

- Keywords: Classification, Bayes Classifier, Bayes Error, Nonparametric Classification, k-Nearest Neighbors, Decision Trees, Misclassification Rate, Accuracy

| Title | Link | Mirror |
|---|---|---|
| 4.1 - Classification Introduction | | |
| 4.2 - Nonparametric Classification | | |
| 4.3 - Classification in R | | |

Week 05 Sep 21 - Sep 25

This week we will introduce logistic regression and discuss the details of evaluating models for binary classification.

- Keywords: Logistic Regression, Link Function, Decision Boundary, Binary Classification, Confusion Matrix, Sensitivity, Specificity
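The evaluation keywords here (confusion matrix, sensitivity, specificity) can be sketched directly from their definitions. This is a Python illustration rather than the R used in the course; the labels are hypothetical, with 1 as the positive class and 0 as the negative class.

```python
def confusion_counts(actual, predicted):
    """Return (tp, fp, tn, fn) for binary labels where 1 is the positive class."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == 1 and p == 1)
    fp = sum(1 for a, p in pairs if a == 0 and p == 1)
    tn = sum(1 for a, p in pairs if a == 0 and p == 0)
    fn = sum(1 for a, p in pairs if a == 1 and p == 0)
    return tp, fp, tn, fn


# Hypothetical true labels and classifier predictions.
actual = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]

tp, fp, tn, fn = confusion_counts(actual, predicted)
sensitivity = tp / (tp + fn)  # share of actual positives that were caught
specificity = tn / (tn + fp)  # share of actual negatives that were cleared
accuracy = (tp + tn) / len(actual)
```

Reporting sensitivity and specificity separately matters when the two kinds of error have different costs, since accuracy alone hides which kind the classifier is making.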

| Title | Link | Mirror |
|---|---|---|
| 5.1 - Logistic Regression | | |
| 5.2 - Binary Classification | | |
| 5.3 - Logistic Regression in R | | |
| 5.4 - Binary Classification in R | | |

Week 06 Sep 28 - Oct 02

This week we will prepare for the exam.

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 85% |
| | Friday, 11:59 PM | 100% |

| Title | Link | Mirror |
|---|---|---|
| 6.1 - Another Binary Example in R | | |

Week 07 Oct 05 - Oct 09

Exam week!!!

| Assignment | Deadline | Credit |
|---|---|---|
| | Monday, 7:00 PM | NA |
| | Friday, 11:59 PM | 85% |
| | Friday, 11:59 PM | 105% |

| Title | Link | Mirror |
|---|---|---|
| 7.1 - A Mental Model for R | | |
| 7.2 - Generative Models | | |

Week 08 Oct 12 - Oct 16

This week we will discuss simulation, bootstrap, and cross-validation.
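As one concrete piece of this week's topics, here is a minimal sketch of how k-fold cross-validation partitions the data. It is written in Python rather than the R used in the course, assigns observations to folds in an interleaved order without shuffling (a simplifying assumption), and uses a hypothetical function name.

```python
def k_fold_splits(n, k):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation.

    Observation i goes to fold i % k; each fold serves as the test set once.
    """
    folds = [list(range(i, n, k)) for i in range(k)]
    for i, test in enumerate(folds):
        train = sorted(j for f in folds[:i] + folds[i + 1:] for j in f)
        yield train, test


# Ten observations split into five folds of two.
splits = list(k_fold_splits(10, 5))
```

In practice the rows are usually shuffled before folds are assigned; a model is then fit on each `train` set and scored on the matching `test` set, and the k scores are averaged.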

| Title | Link | Mirror |
|---|---|---|
| 8.1 - Simulation | | |
| 8.2 - Bootstrap | | |
| 8.3 - Cross-Validation | | |

Week 09 Oct 19 - Oct 23

This week we will discuss regularization!

- Keywords: Penalized Regression, Shrinkage, Regularization, Variable Selection, Best Subset Selection, Ridge Regression, Lasso
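A one-predictor, no-intercept model is enough to show the difference between ridge shrinkage and lasso variable selection. The sketch below is in Python rather than the course's R and uses made-up data. It assumes the objectives ½Σ(y − xb)² + (λ/2)b² for ridge and ½Σ(y − xb)² + λ|b| for lasso; other texts scale the penalty differently. The function names are hypothetical.

```python
def ridge_coef(x, y, lam):
    """Minimizer of 0.5 * sum((y - x*b)^2) + 0.5 * lam * b^2 for a single predictor."""
    sxy = sum(xi * yi for xi, yi in zip(x, y))
    sxx = sum(xi * xi for xi in x)
    return sxy / (sxx + lam)


def lasso_coef(x, y, lam):
    """Minimizer of 0.5 * sum((y - x*b)^2) + lam * |b| for a single predictor."""
    sxy = sum(xi * yi for xi, yi in zip(x, y))
    sxx = sum(xi * xi for xi in x)
    # Soft-thresholding: shrink |sxy| by lam, and set the coefficient to 0 if it is too small.
    shrunk = max(abs(sxy) - lam, 0.0)
    return (shrunk if sxy >= 0 else -shrunk) / sxx


# Made-up data roughly following y = 2x.
x = [-2.0, -1.0, 0.0, 1.0, 2.0]
y = [-3.9, -2.1, 0.1, 1.8, 4.1]

ols = ridge_coef(x, y, 0.0)          # lam = 0 recovers least squares
ridge_10 = ridge_coef(x, y, 10.0)    # shrunk toward zero, but never exactly zero
lasso_5 = lasso_coef(x, y, 5.0)      # shrunk toward zero
lasso_25 = lasso_coef(x, y, 25.0)    # penalty large enough to remove the predictor
print(ols, ridge_10, lasso_5, lasso_25)
```

Ridge only rescales the coefficient, while a large enough lasso penalty sets it exactly to zero, which is why the lasso doubles as a variable selection method.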

| Title | Link | Mirror |
|---|---|---|
| 9.1 - Regularization | | |

| Staff and Link | Day | Time |
|---|---|---|
| | Wednesday | 4:00 PM - 5:00 PM |
| | Wednesday | 5:00 PM - 7:00 PM |
| | Wednesday | 8:00 PM - 10:00 PM |
| | Thursday | 4:00 PM - 5:00 PM |
| | Thursday | 7:00 PM - 8:00 PM |
| | Thursday | 8:00 PM - 10:00 PM |
| | Friday | 7:00 PM - 8:00 PM |

Week 10 Oct 26 - Oct 30

An introduction to ensemble methods.

- Keywords: Bagging, Random Forest, Boosting

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 100% |
| | Friday, 11:59 PM | 105% |
| | Friday, 11:59 PM | 105% |
| | December 9 | 100% |

Week 11 Nov 02 - Nov 06

This week we’ll start shifting our focus to analyses.

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 100% |
| | Friday, 11:59 PM | 100% |
| | November 11 | 100% |
| | December 9 | 100% |

Week 12 Nov 09 - Nov 13

| Assignment | Deadline | Credit |
|---|---|---|
| | Friday, 11:59 PM | 85% |
| | Friday, 11:59 PM | 85% |
| | November 11 | 100% |
| | December 9 | 100% |

Week 13 Nov 16 - Nov 20

Continue work on analyses!

| Assignment | Deadline | Credit |
|---|---|---|
| | November 20 | 100% |
| | December 9 | 100% |

Week 14 Nov 23 - Nov 27

This week is Fall Break. There are no course activities, including no office hours.

Week 15 Nov 30 - Dec 04

Continue work on analyses! Almost the end of the semester!

| Assignment | Deadline | Credit |
|---|---|---|
| | Wednesday | 100% |
| | December 9 | 100% |
| | December 9 | 100% |

Week 16 Dec 07 - Dec 11

Last week of class.

| Assignment | Deadline | Credit |
|---|---|---|
| | Wednesday | 100% |
| | Wednesday | 100% |

| Staff and Link | Day | Time |
|---|---|---|
| | Wednesday | 4:00 PM - 5:00 PM |
| | Wednesday | 5:00 PM - 7:00 PM |
| | Wednesday | 7:00 PM - 8:00 PM |
| | Wednesday | 8:00 PM - 10:00 PM |

Week 17 Dec 14 - Dec 18

You’re still here? It’s over. Go home. Go!

Trevor Hastie is a professor of statistics at Stanford University. His main research contributions have been in the field of applied nonparametric regression and classification, and he has written two books in this area: "Generalized Additive Models" (with R. Tibshirani, Chapman and Hall, 1991), and "Elements of Statistical Learning" (with R. Tibshirani and J. Friedman, Springer ...

An Introduction to Statistical Learning provides a broad and less technical treatment of key topics in statistical learning. This book is appropriate for anyone who wishes to use contemporary tools for data analysis. The first edition of this book, with applications in R (ISLR), was released in 2013. A 2nd Edition of ISLR was published in 2021.

This popular course has been taken by over 290,000 learners as of November 2023. The course for An Introduction to Statistical Learning, with Applications in Python is available here. The video lectures covering the chapter material are the same for both courses. The courses also include sessions in R/Python, which differ between the two courses.
