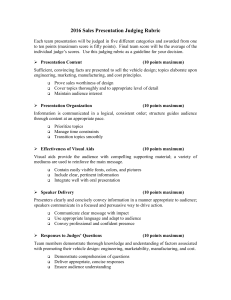

Sales Presentation Judging Rubric

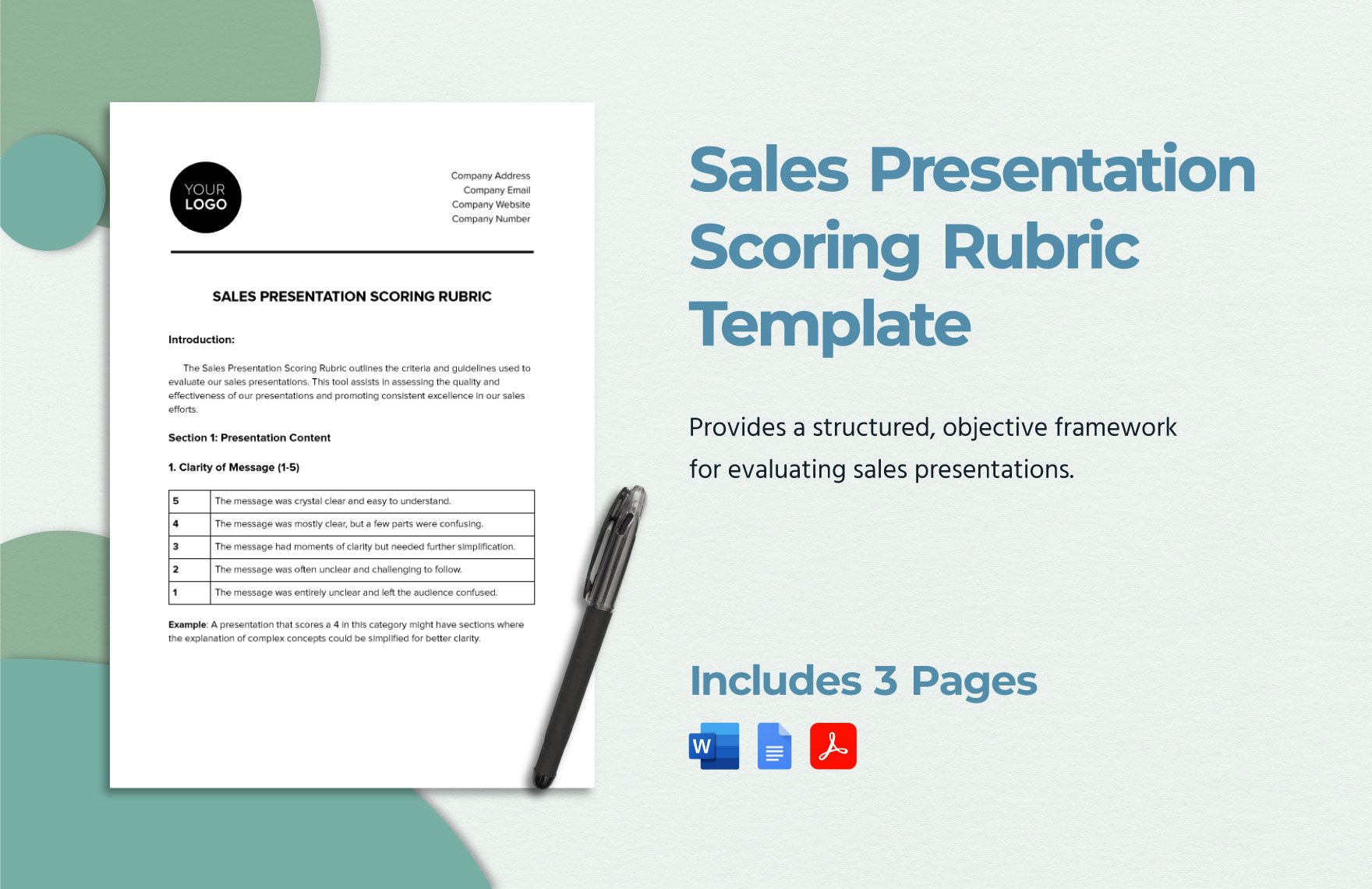

Free Sales Presentation Scoring Rubric Template

Free Download this Sales Presentation Scoring Rubric Template Design in Word, Google Docs, PDF Format. Easily Editable, Printable, Downloadable.

Elevate your pitch game with precision using this editable template. This customizable Sales Presentation Scoring Rubric Template provides a structured, objective framework for evaluating sales presentations. Seamlessly assess content, delivery, and overall impact, ensuring consistent, data-driven feedback. Revolutionize your presentations and drive conversions. So get it now at Template.net!


You may also like

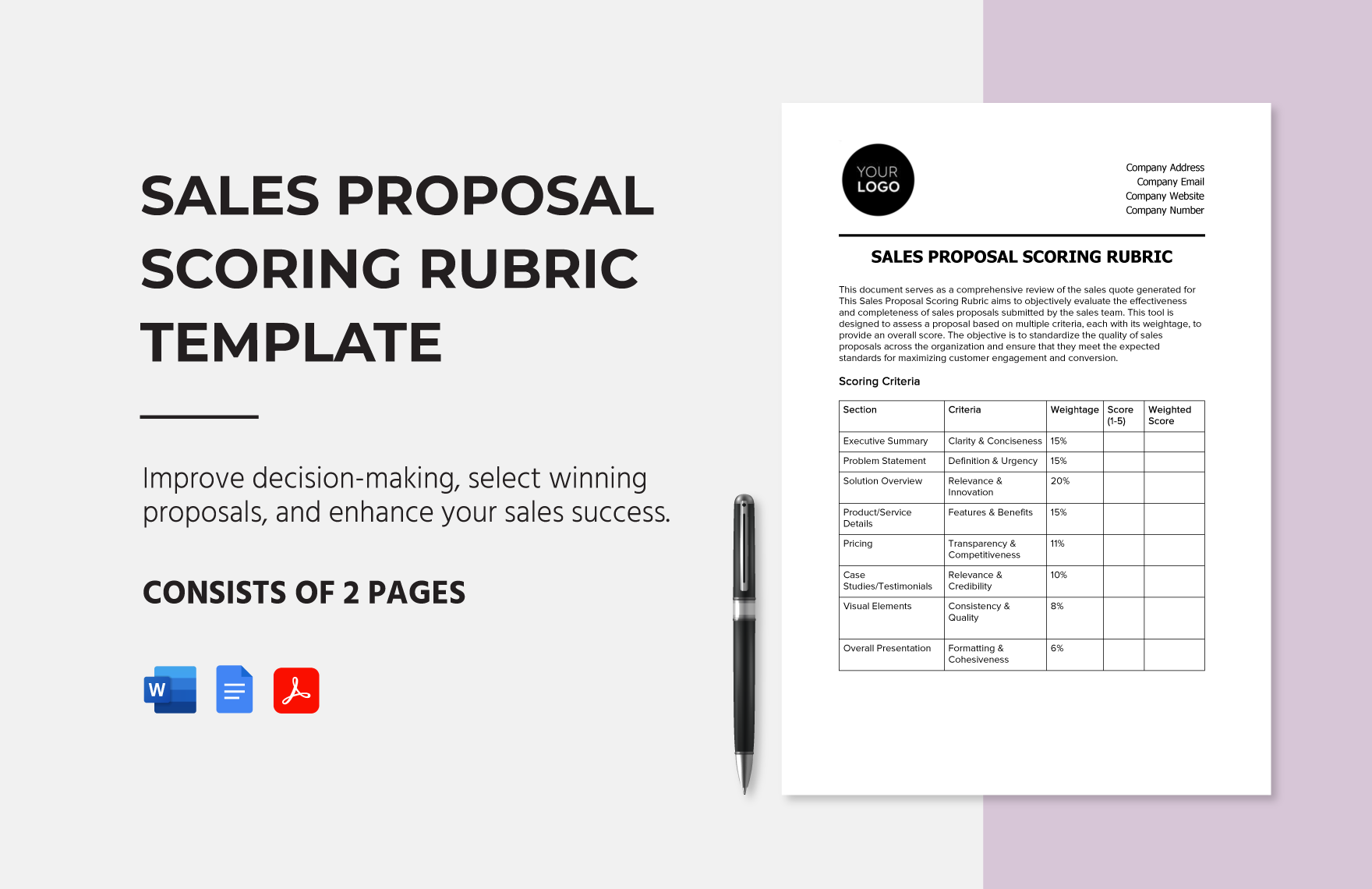

Sales Proposal Scoring Rubric Template

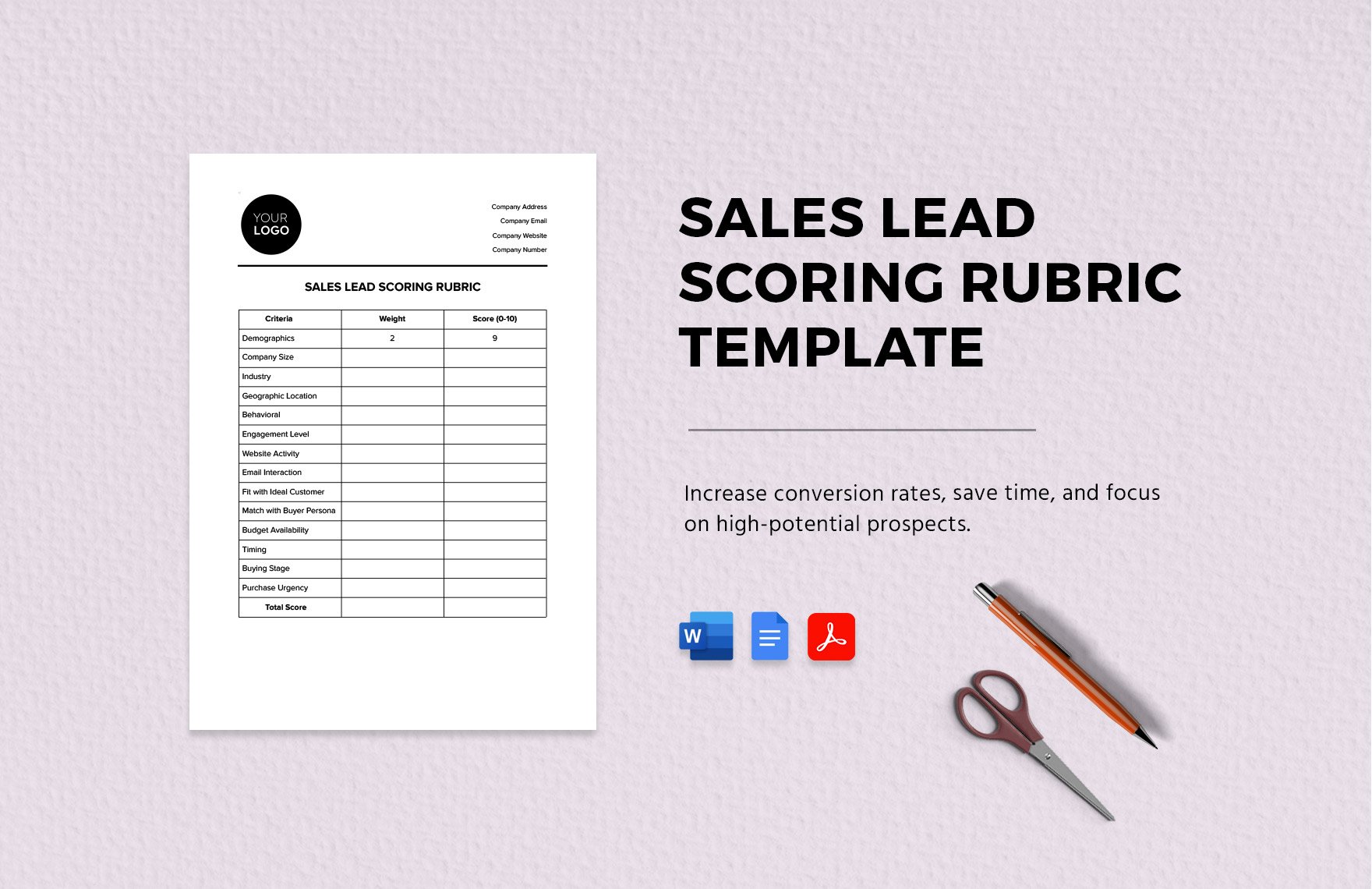

Sales Lead Scoring Rubric Template

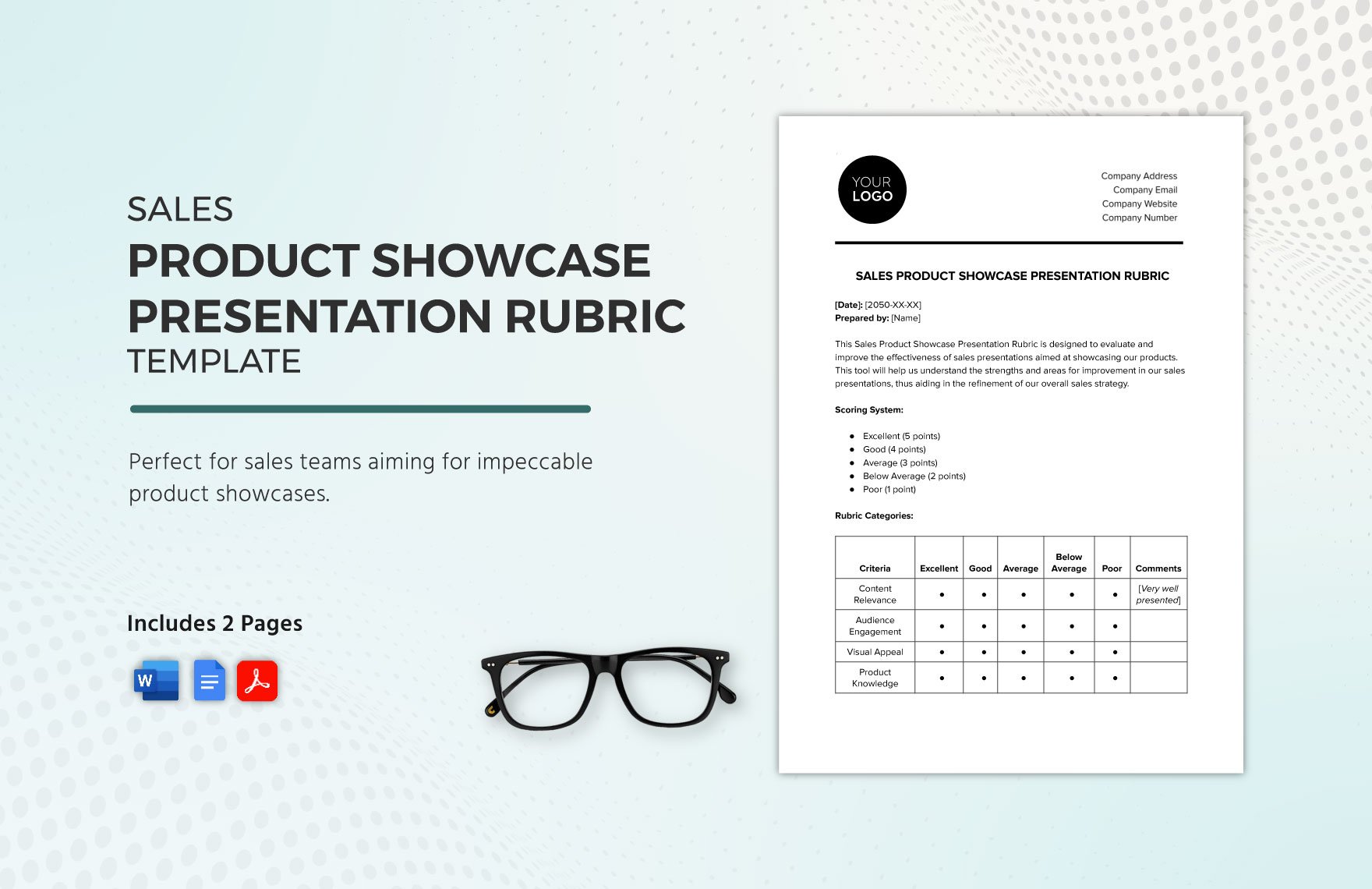

Sales Product Showcase Presentation Rubric Template

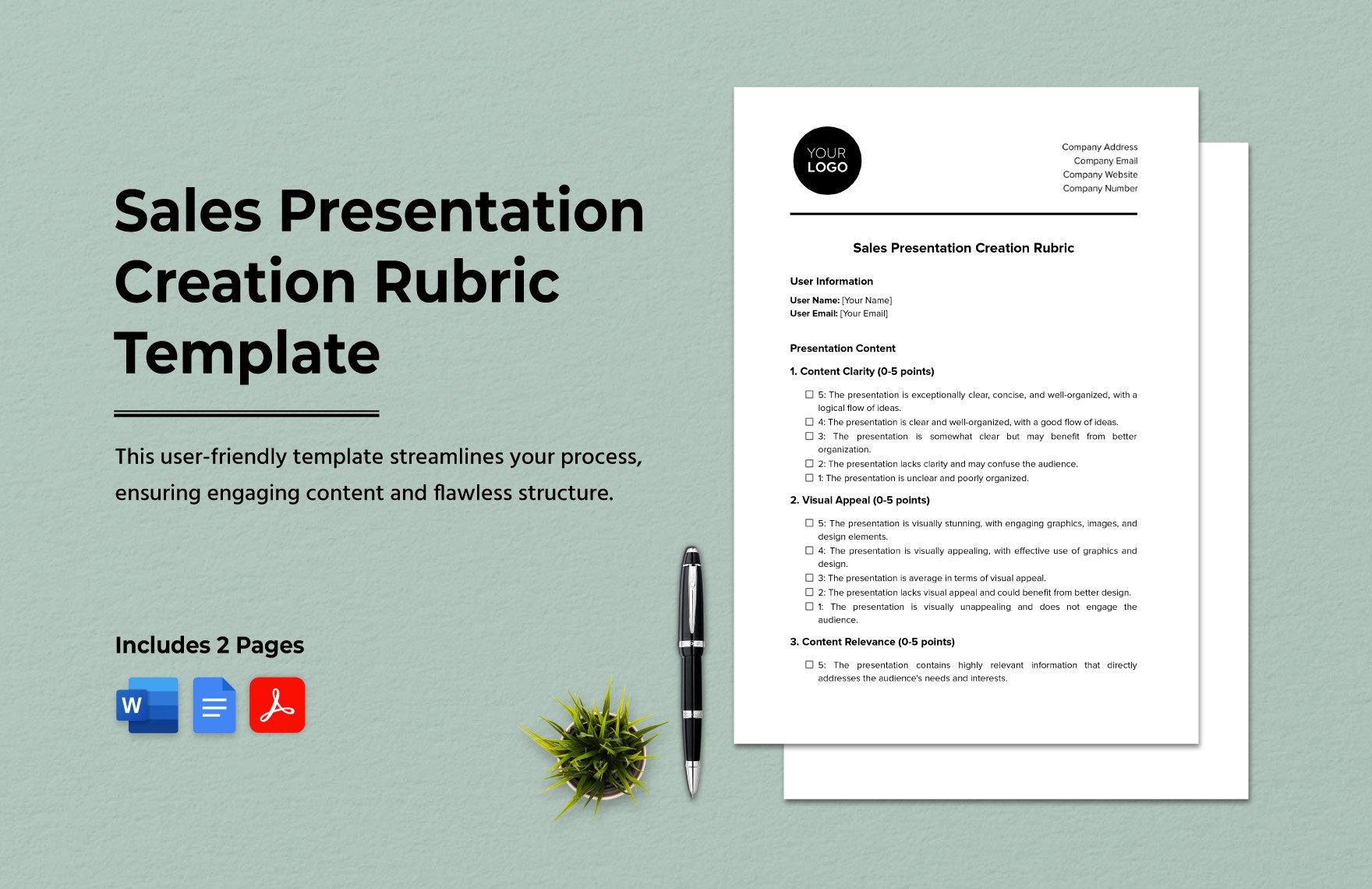

Sales Presentation Creation Rubric Template

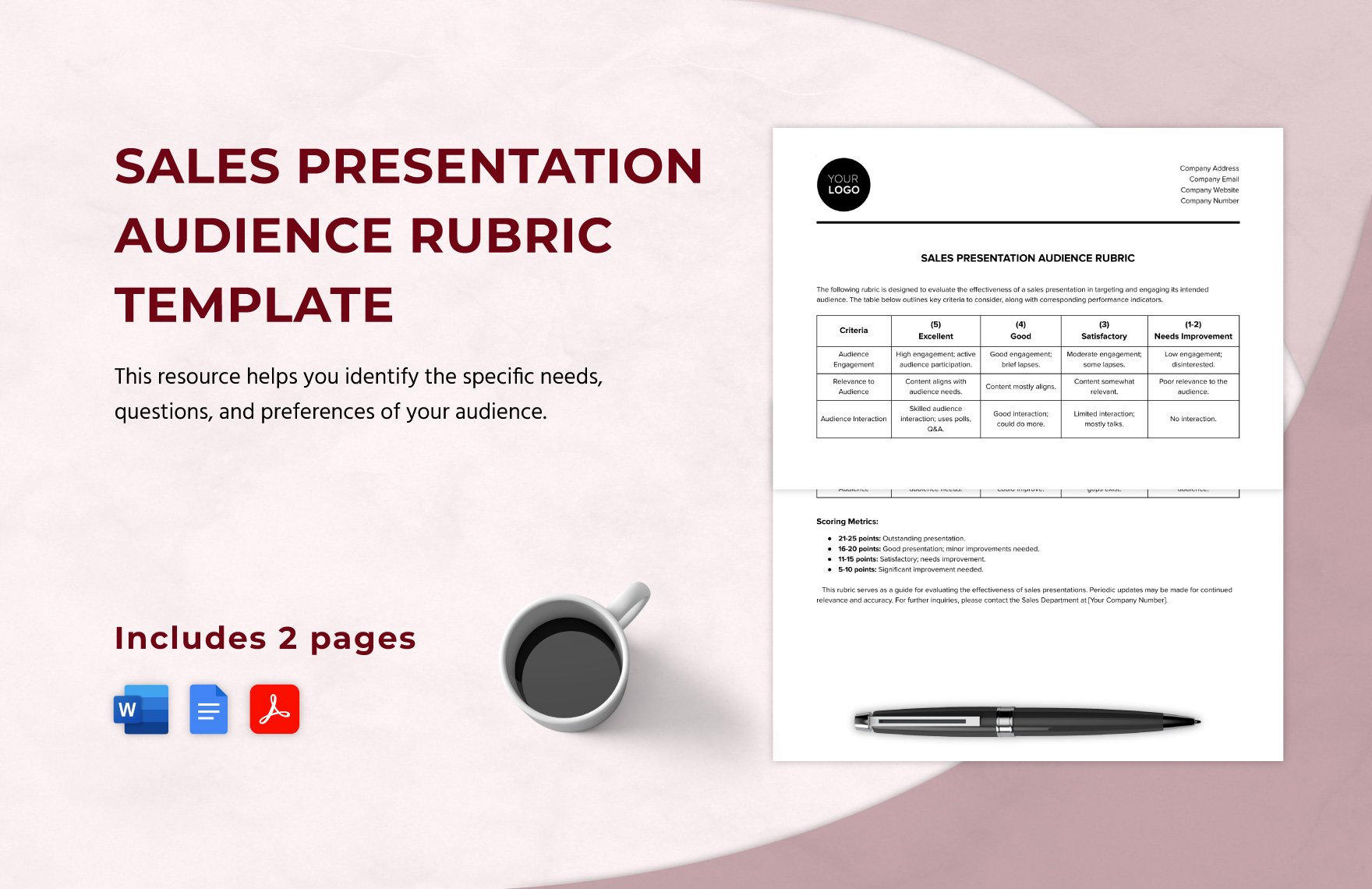

Sales Presentation Audience Rubric Template

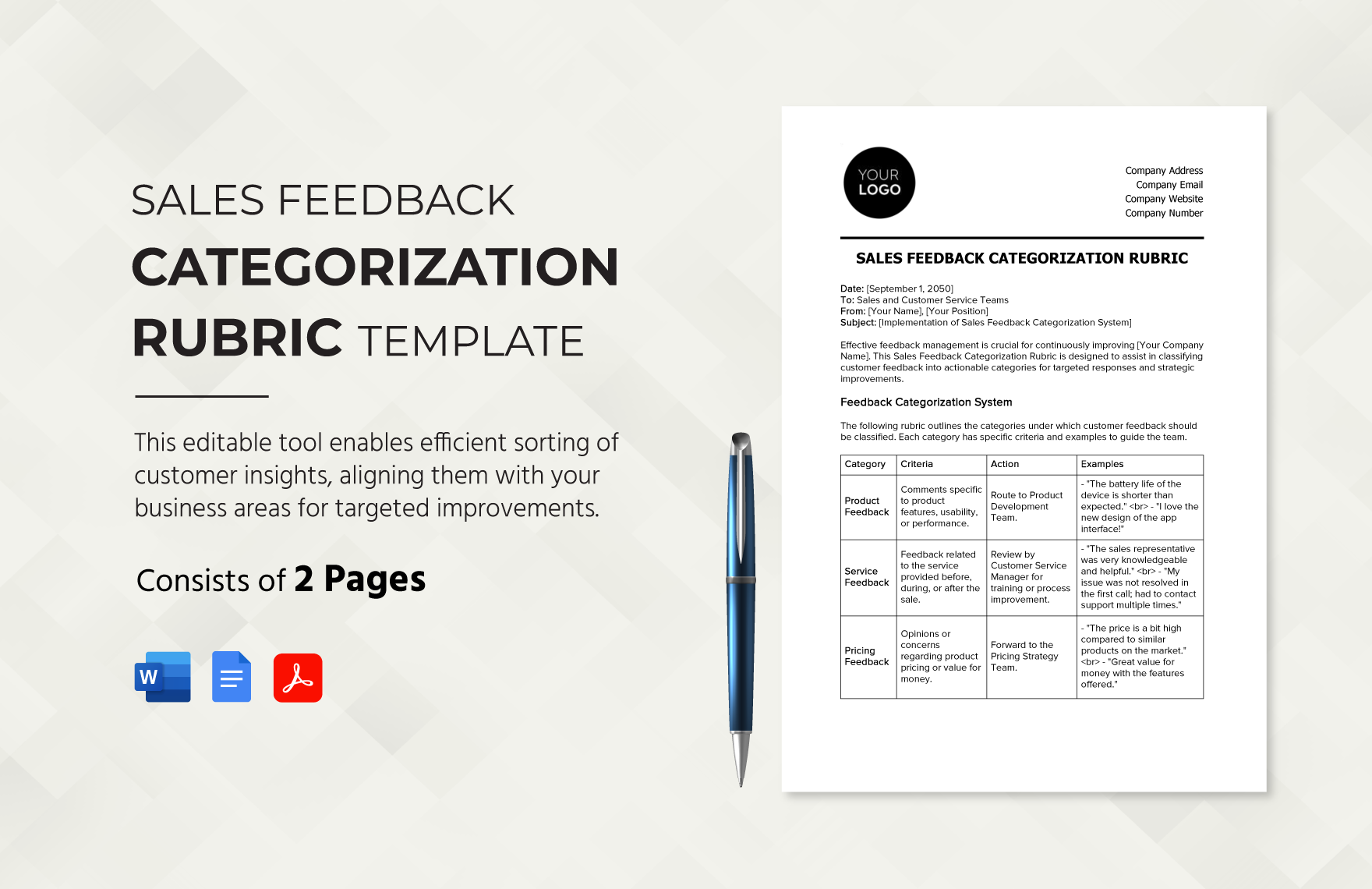

Sales Feedback Categorization Rubric Template

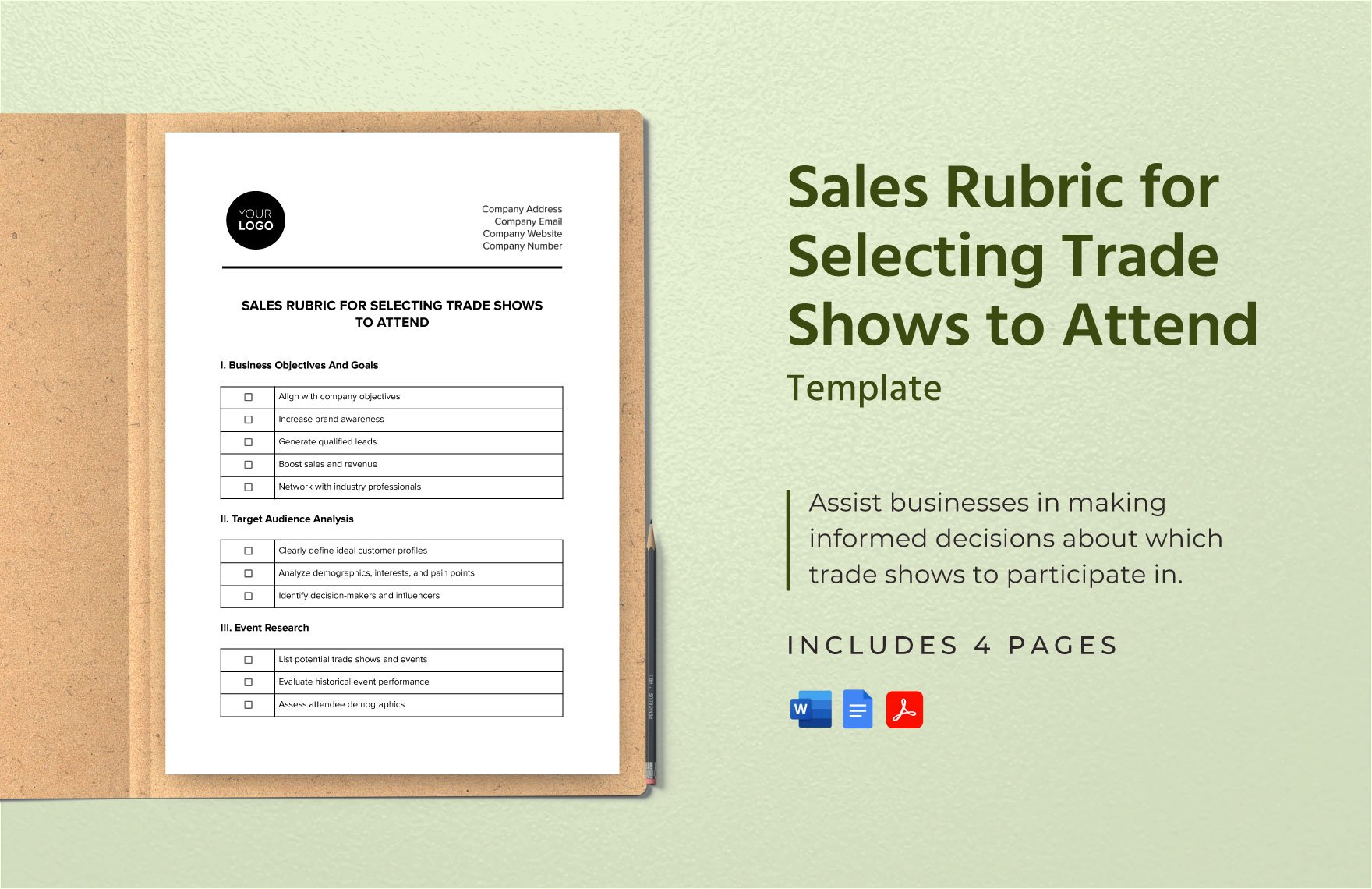

Sales Rubric for Selecting Trade Shows to Attend Template

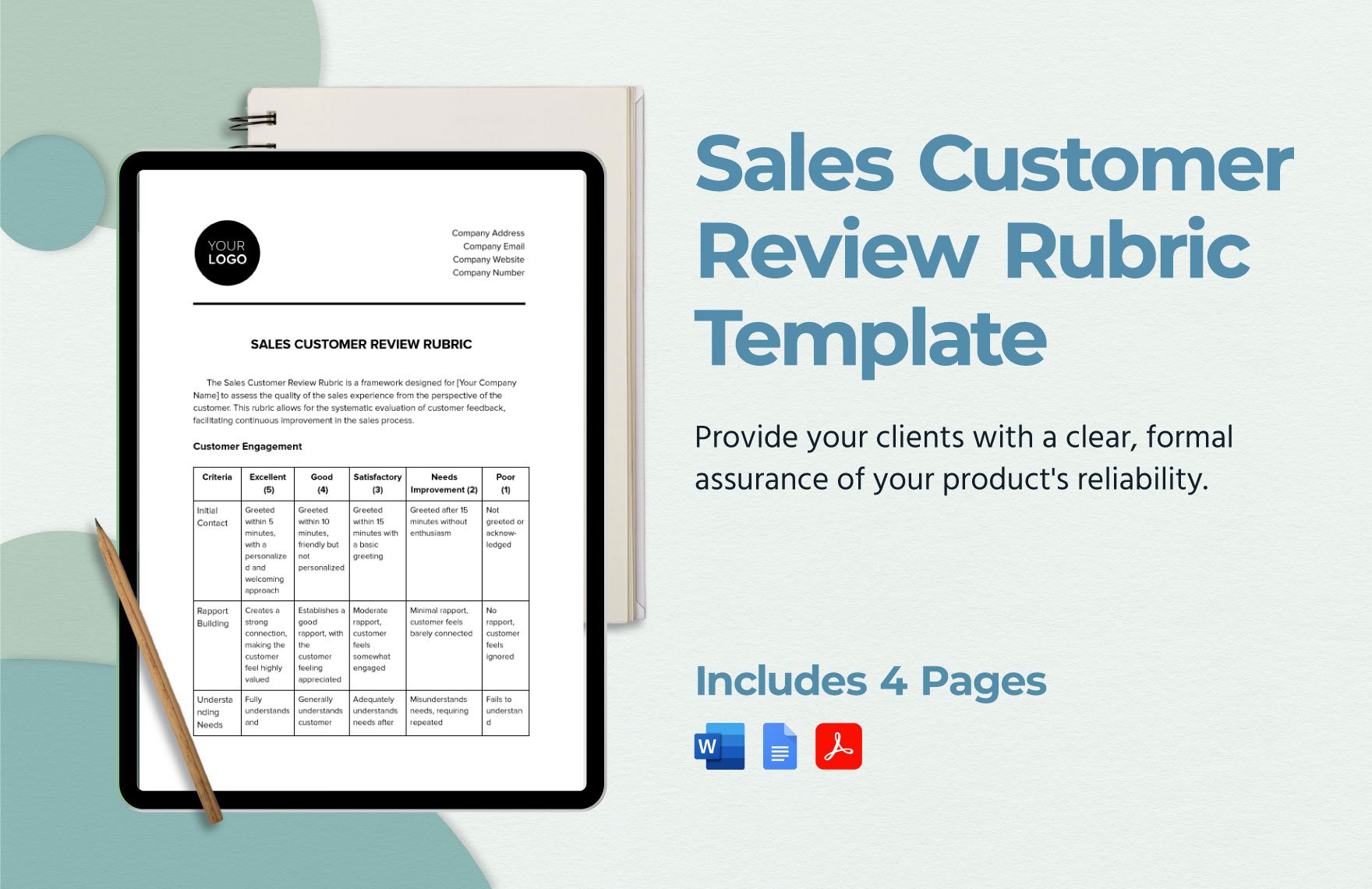

Sales Customer Review Rubric Template

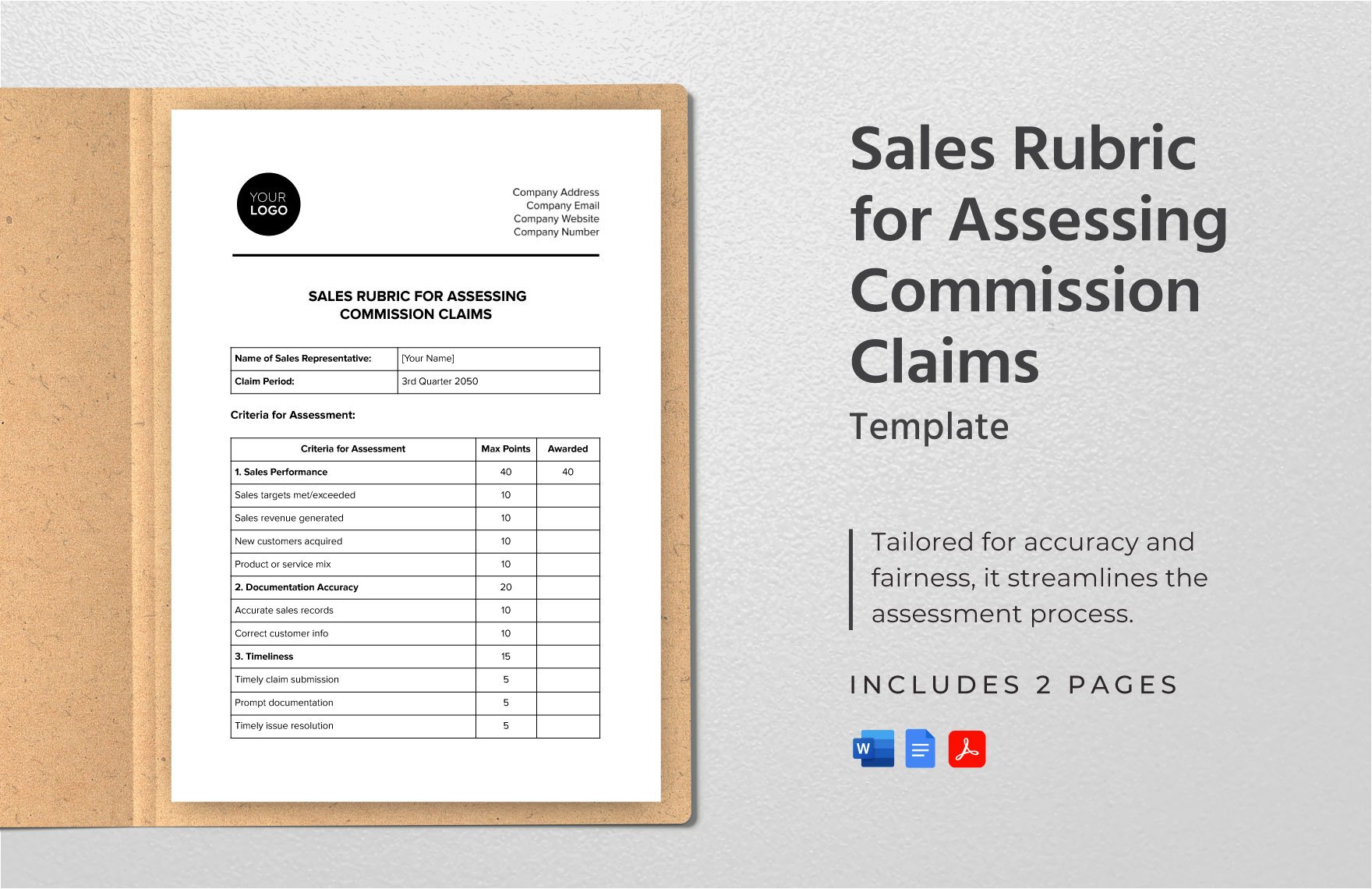

Sales Rubric for Assessing Commission Claims Template

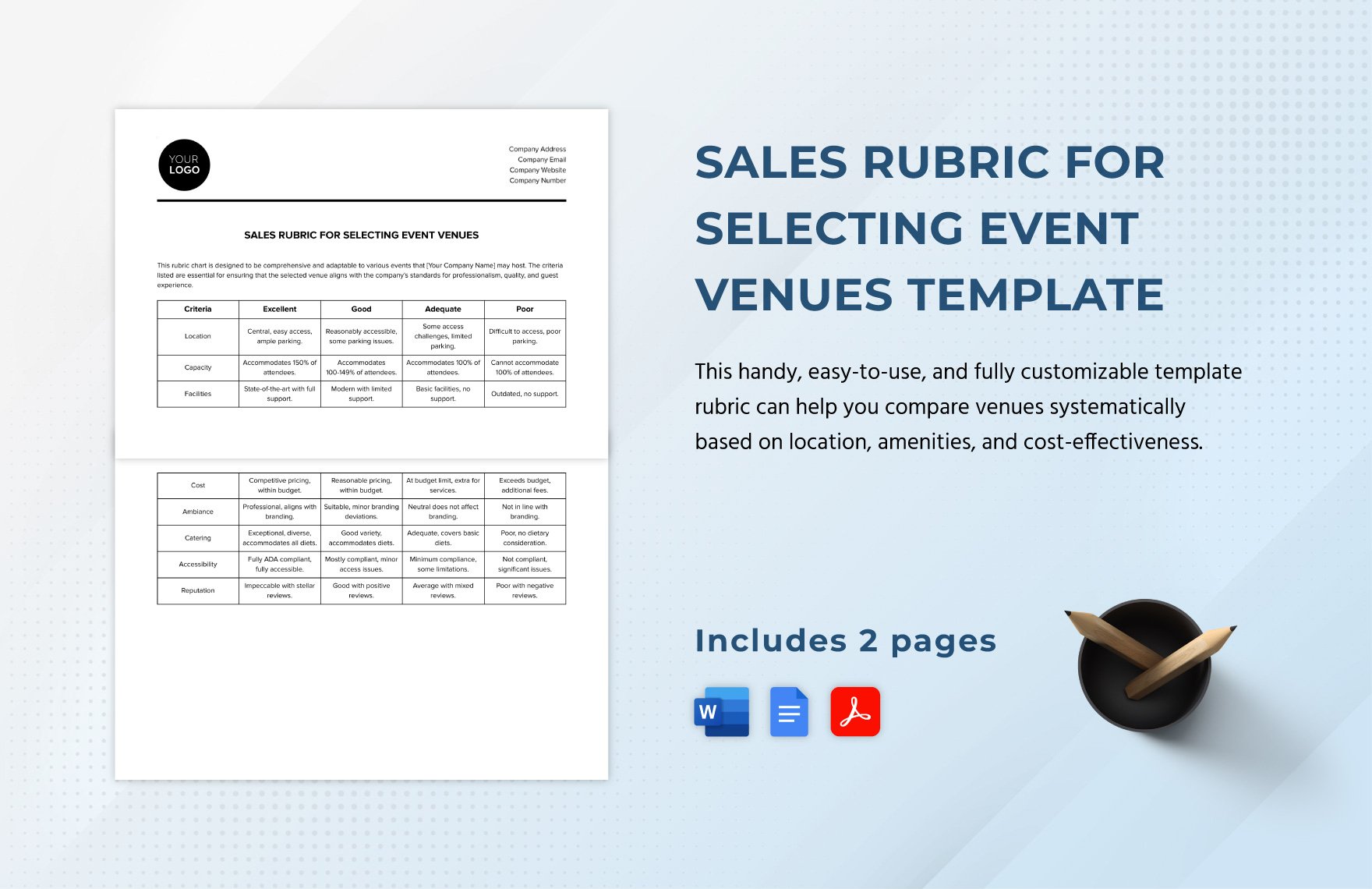

Sales Rubric for Selecting Event Venues Template

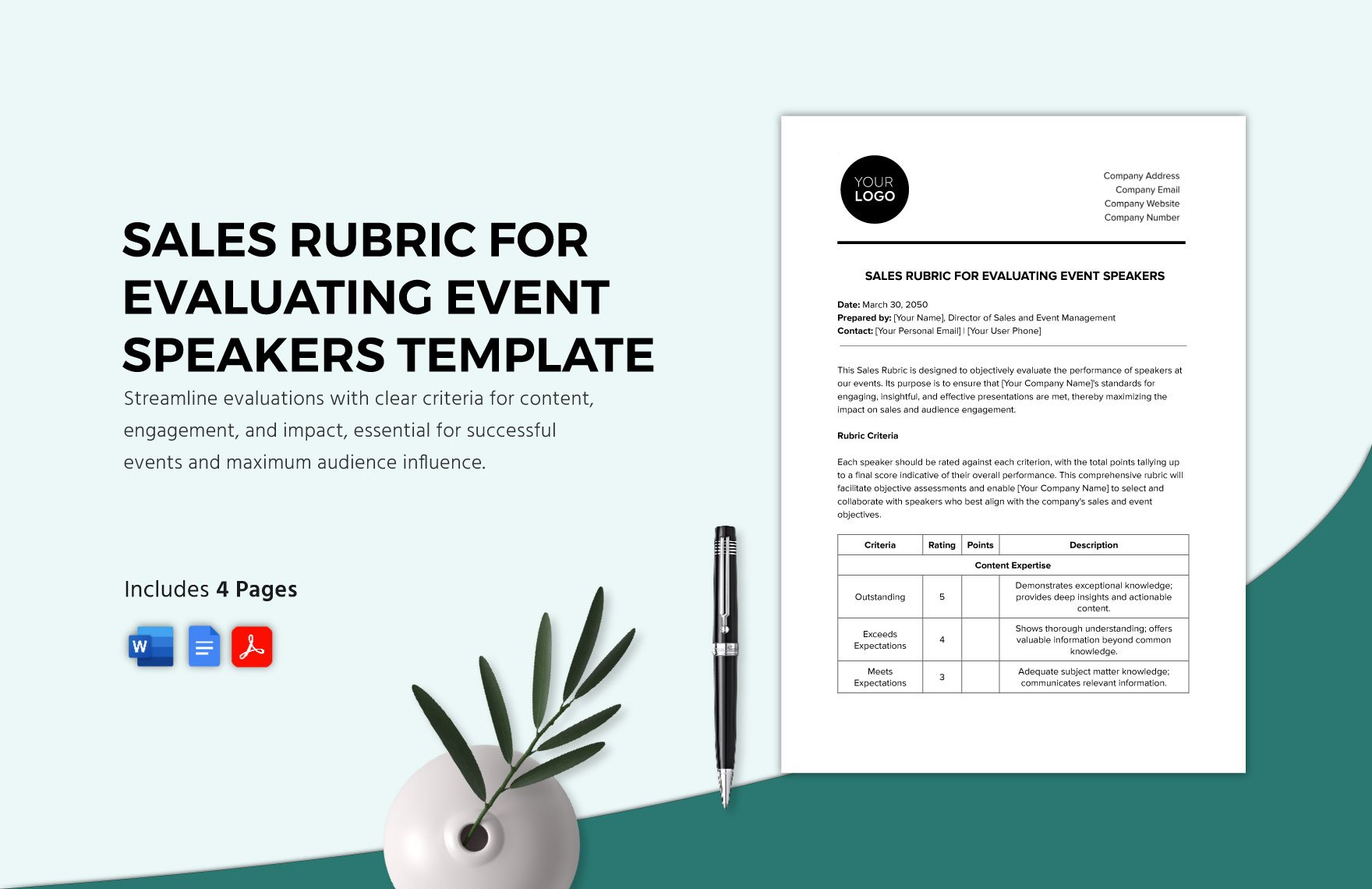

Sales Rubric for Evaluating Event Speakers Template

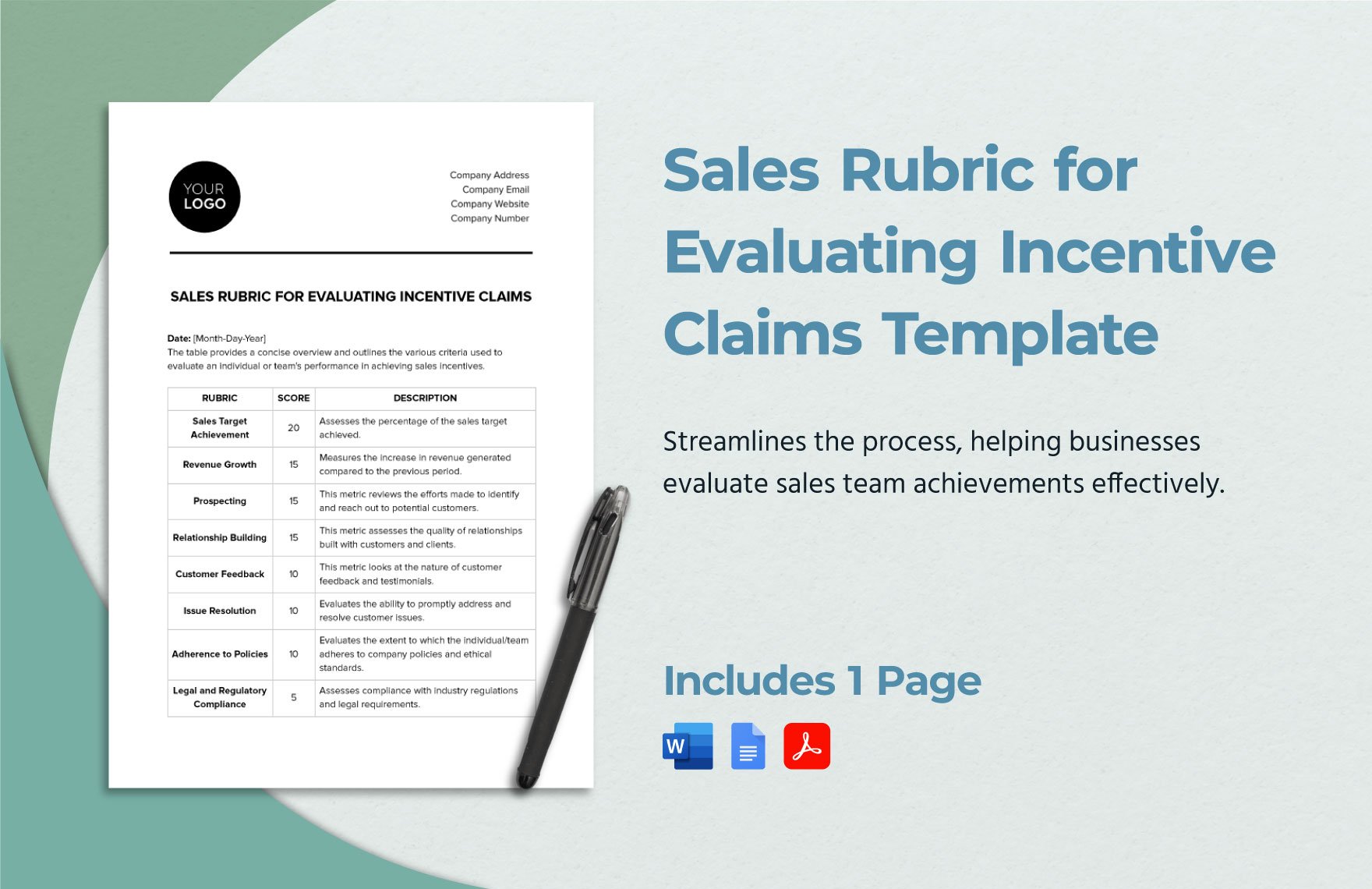

Sales Rubric for Evaluating Incentive Claims Template

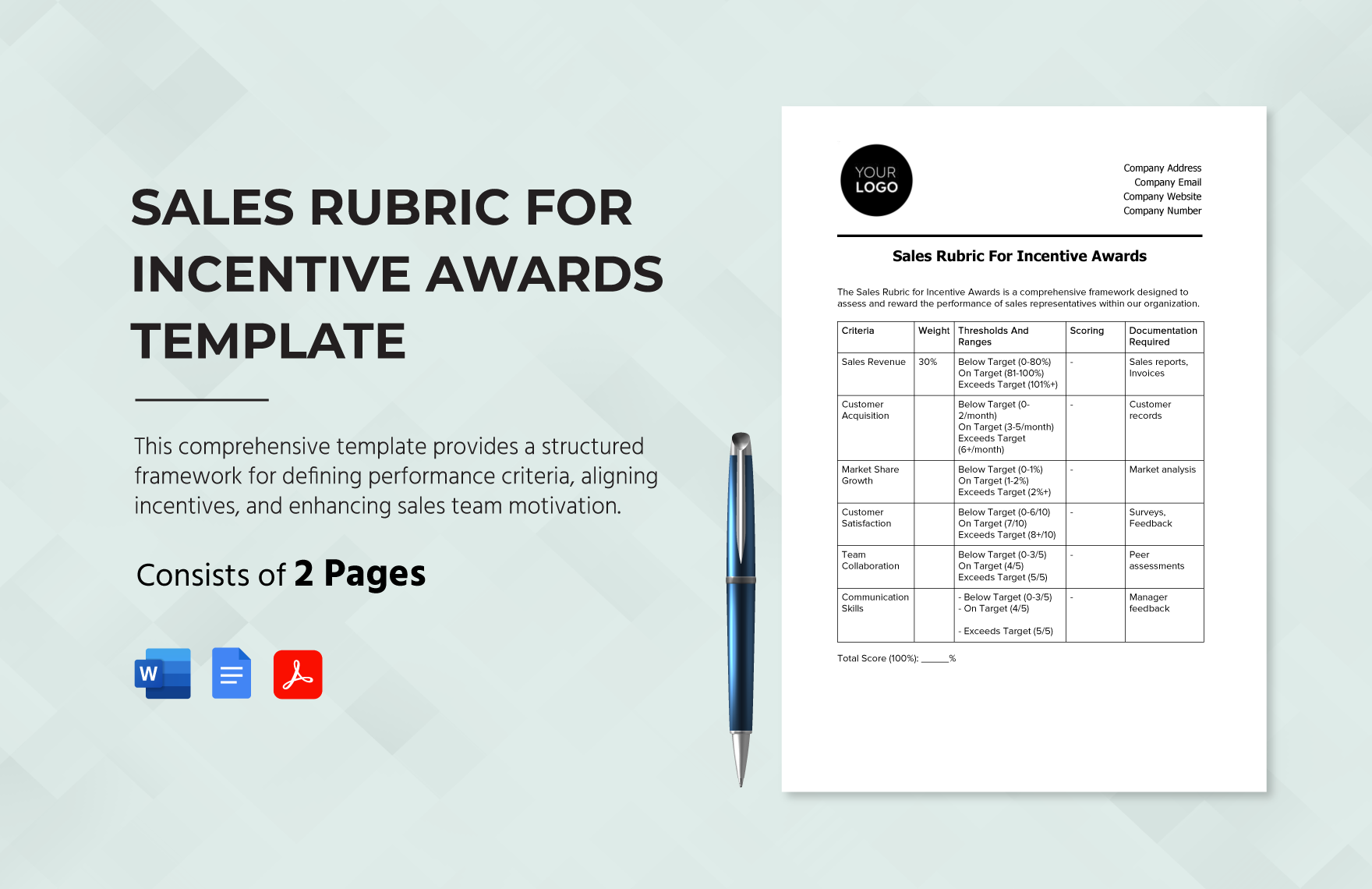

Sales Rubric for Incentive Awards Template

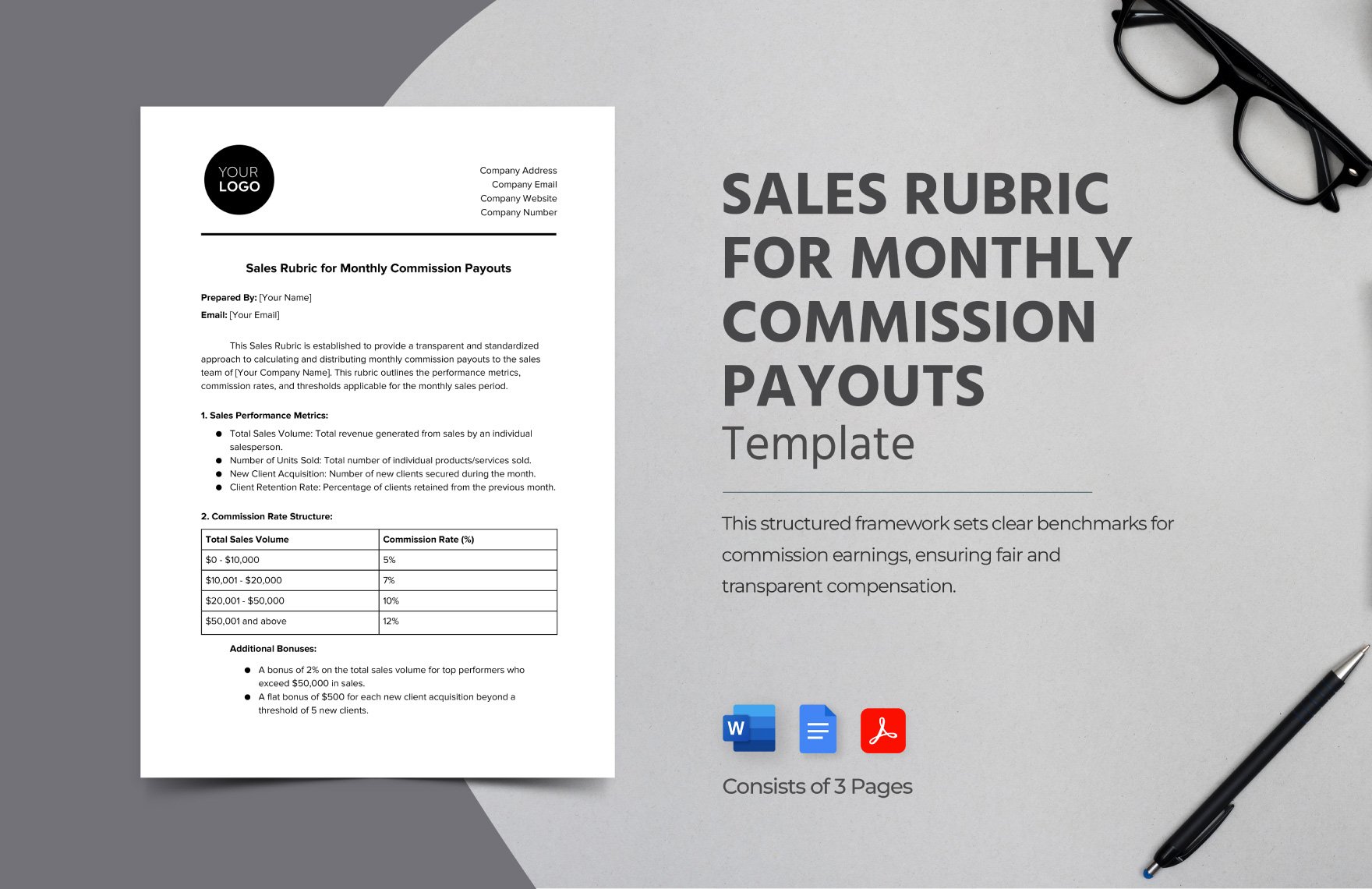

Sales Rubric for Monthly Commission Payouts Template

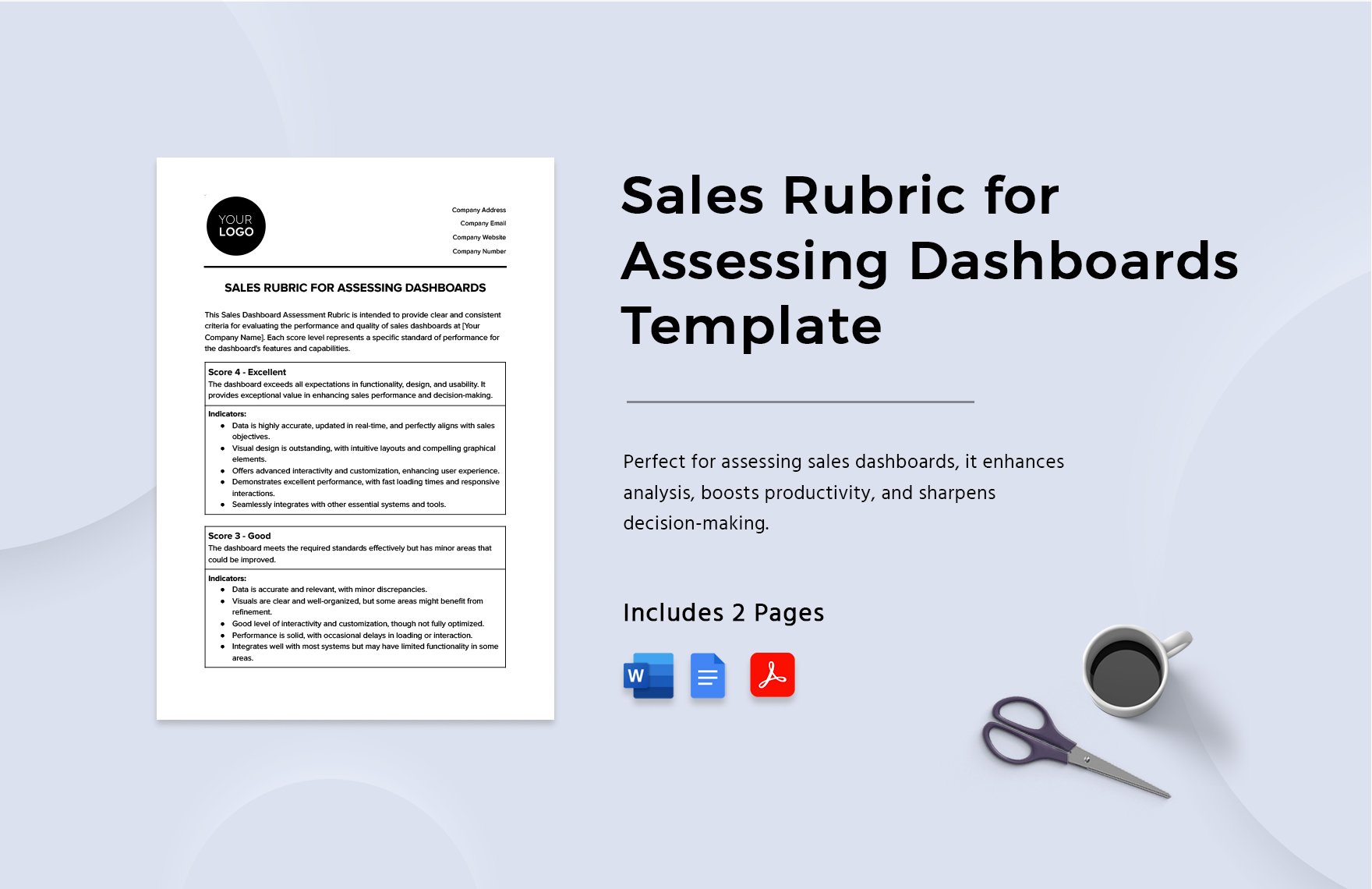

Sales Rubric for Assessing Dashboards Template

Sales Agreement Compliance Rubric Template

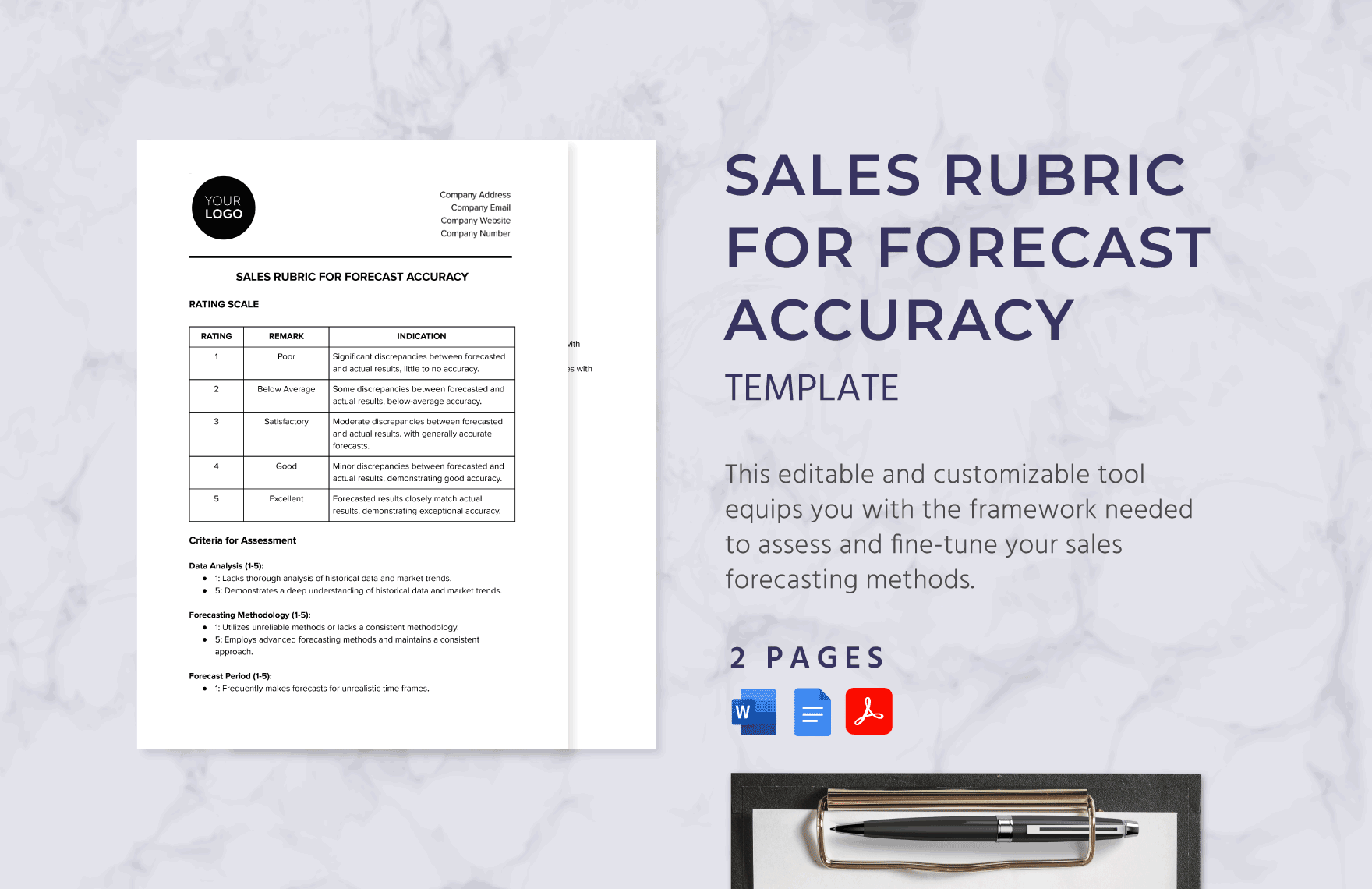

Sales Rubric for Forecast Accuracy Template

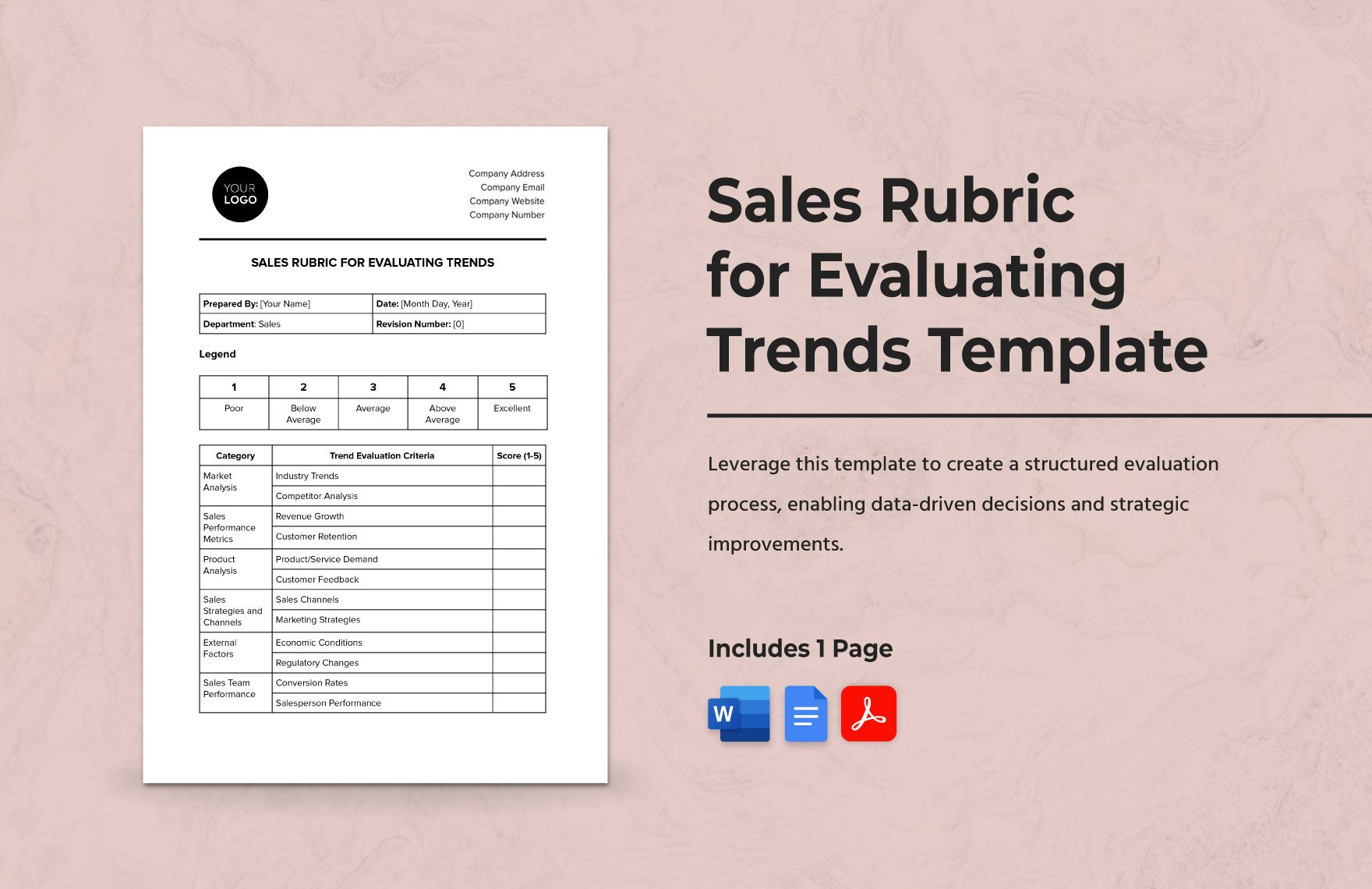

Sales Rubric for Evaluating Trends Template

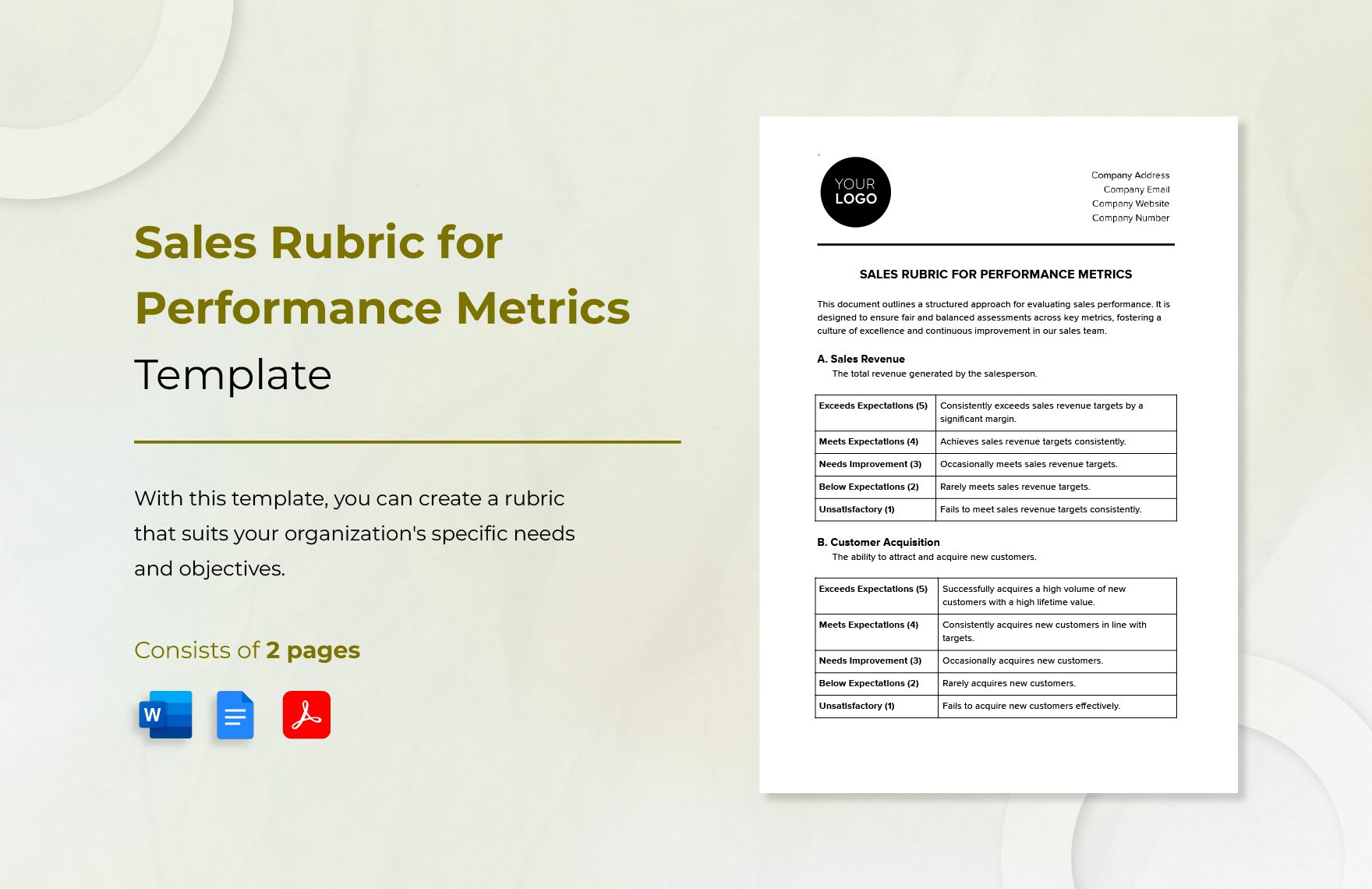

Sales Rubric for Performance Metrics Template

Sales Price Agreement Rubric Template

Sales Agreement Rubric Template

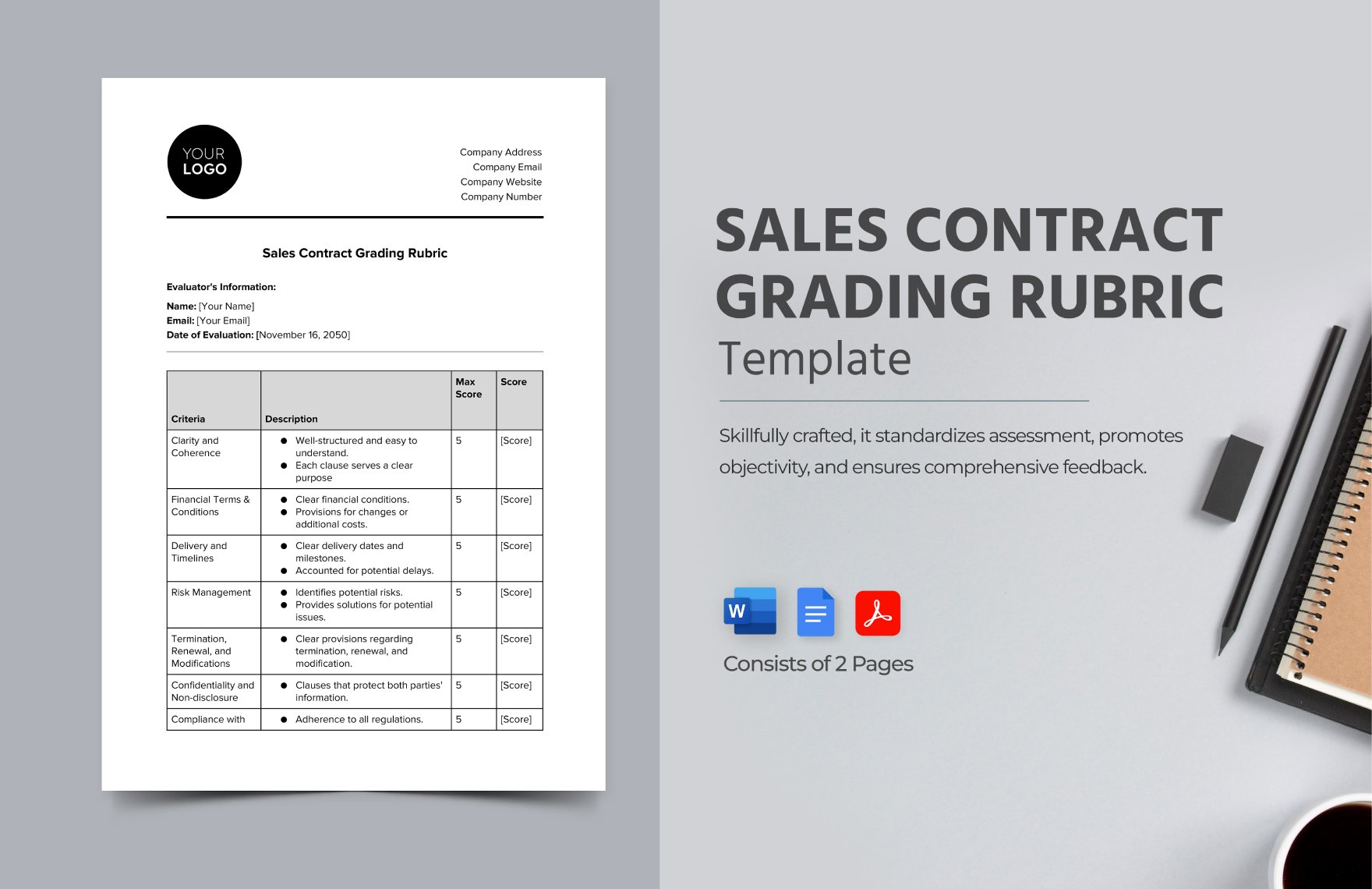

Sales Contract Grading Rubric Template

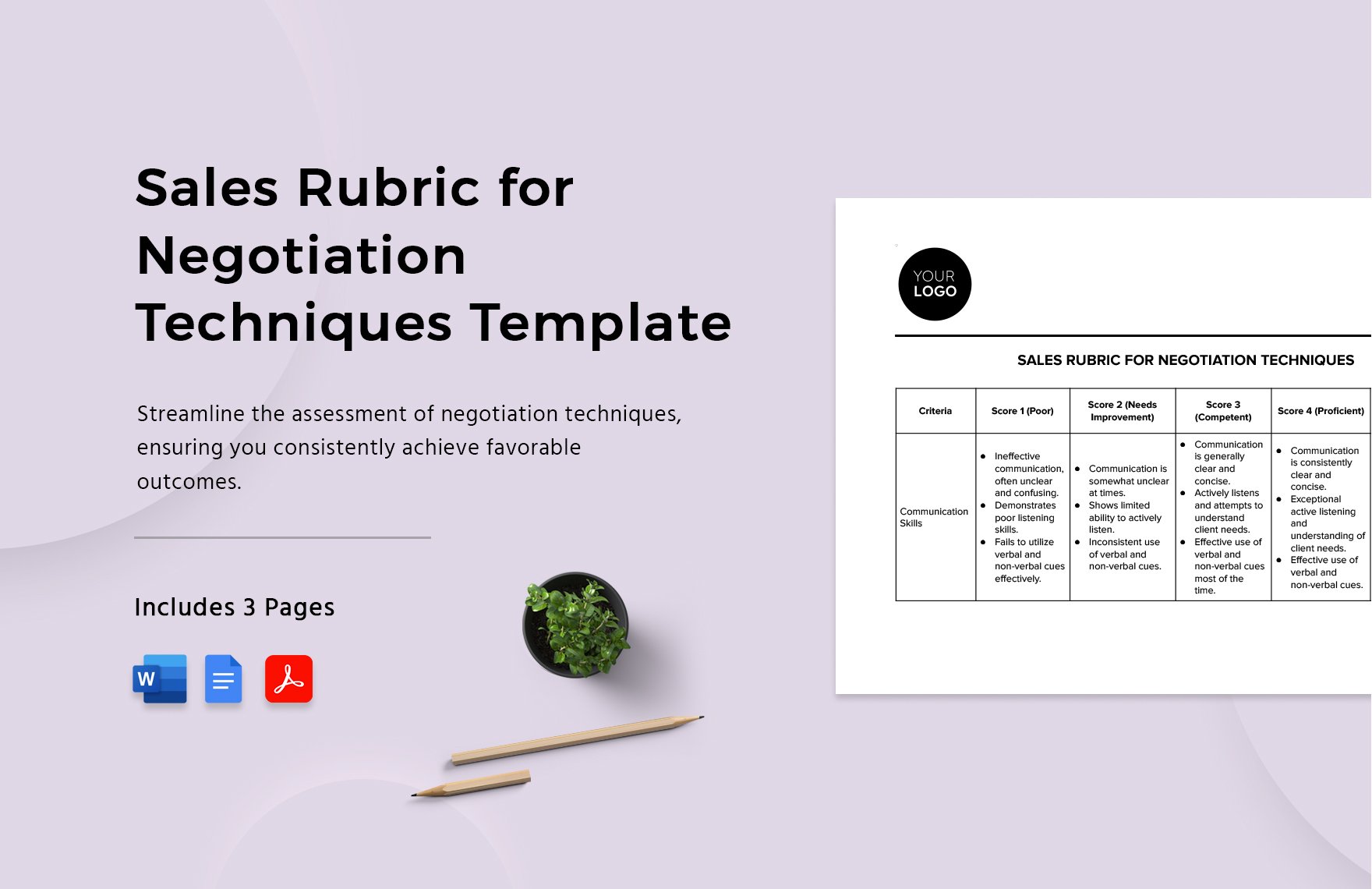

Sales Rubric for Negotiation Techniques Template

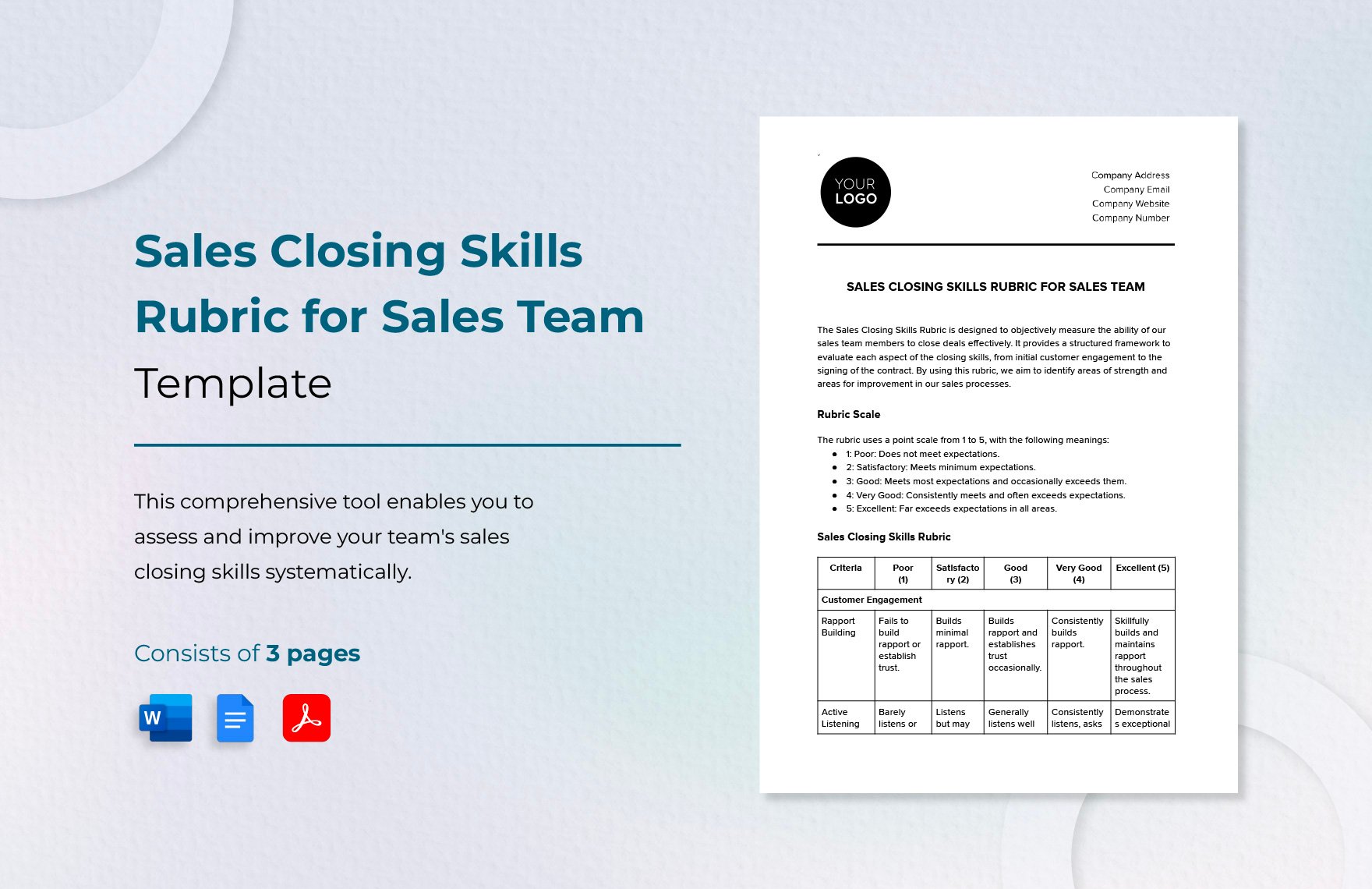

Sales Closing Skills Rubric for Sales Team Template

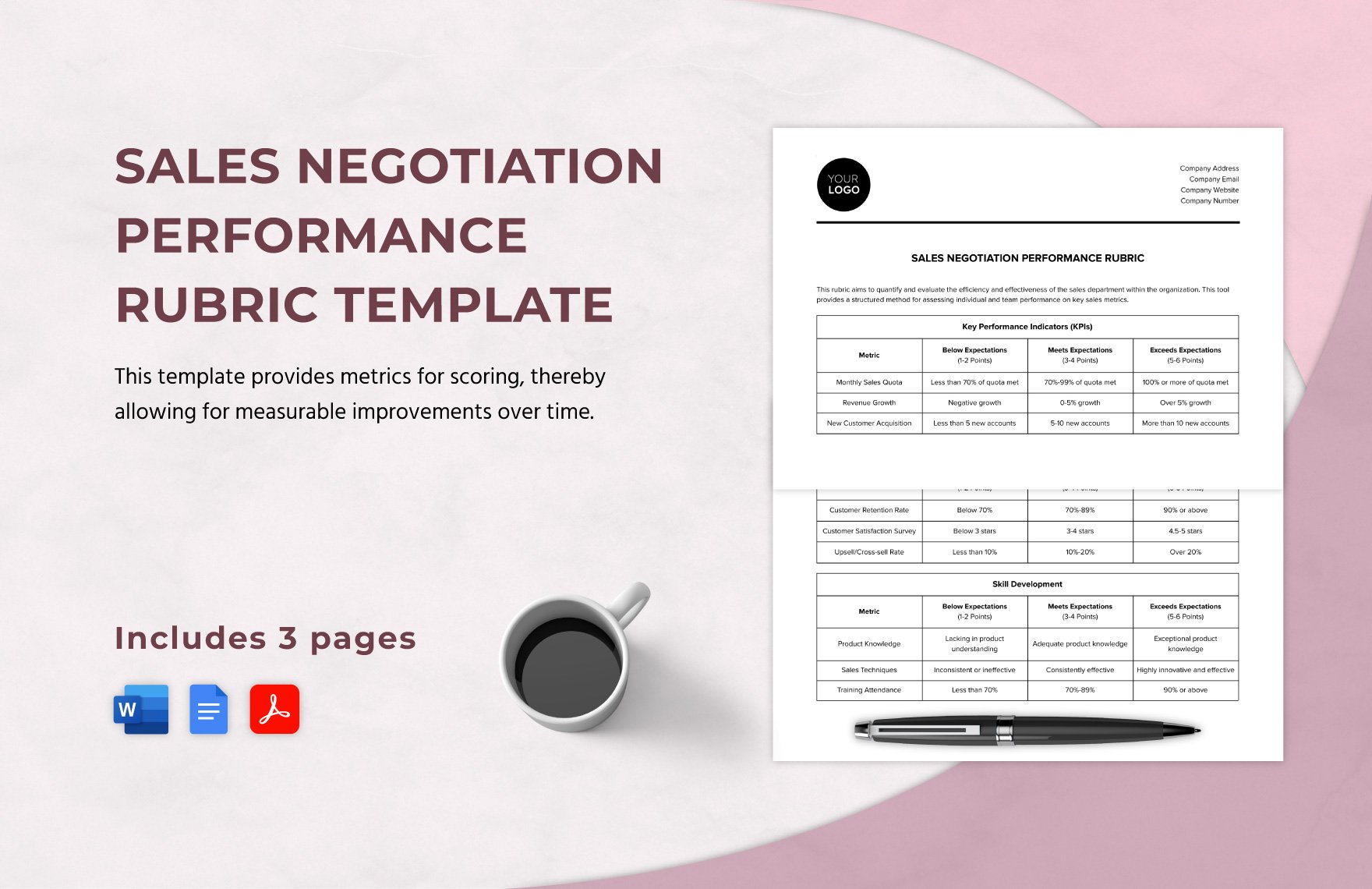

Sales Negotiation Performance Rubric Template

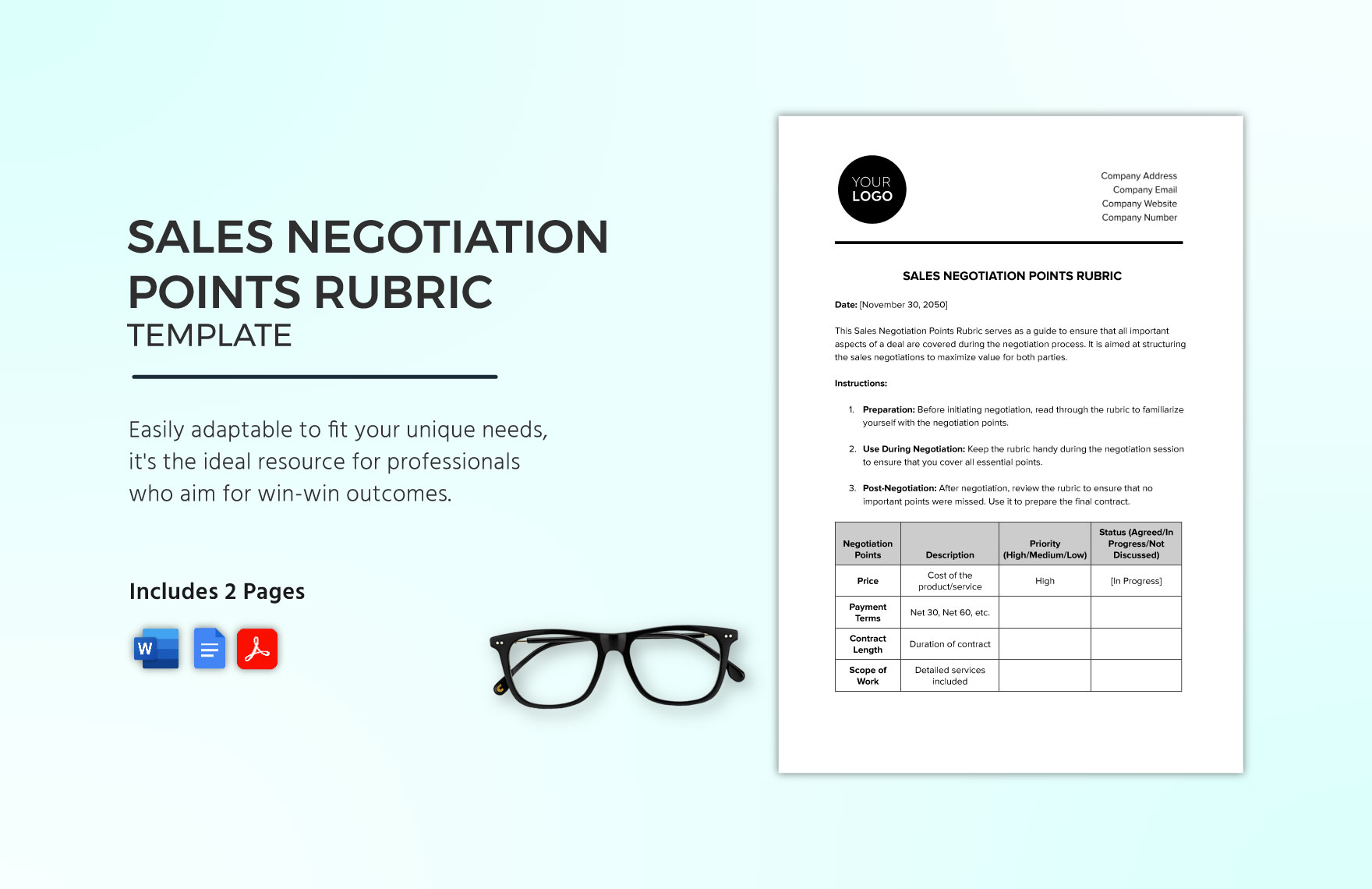

Sales Negotiation Points Rubric Template

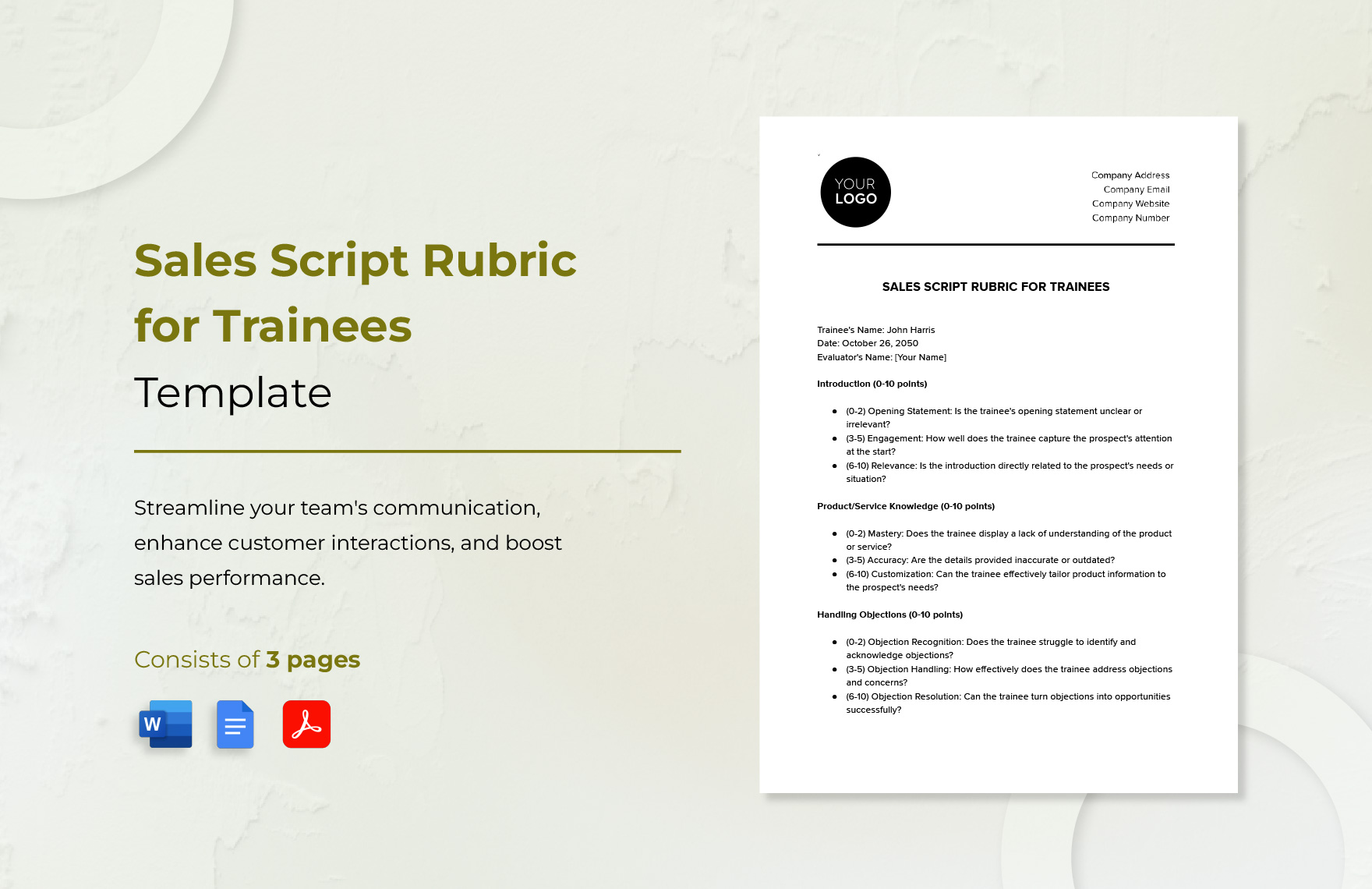

Sales Script Rubric for Trainees Template

Sales Onboarding Feedback Rubric Template

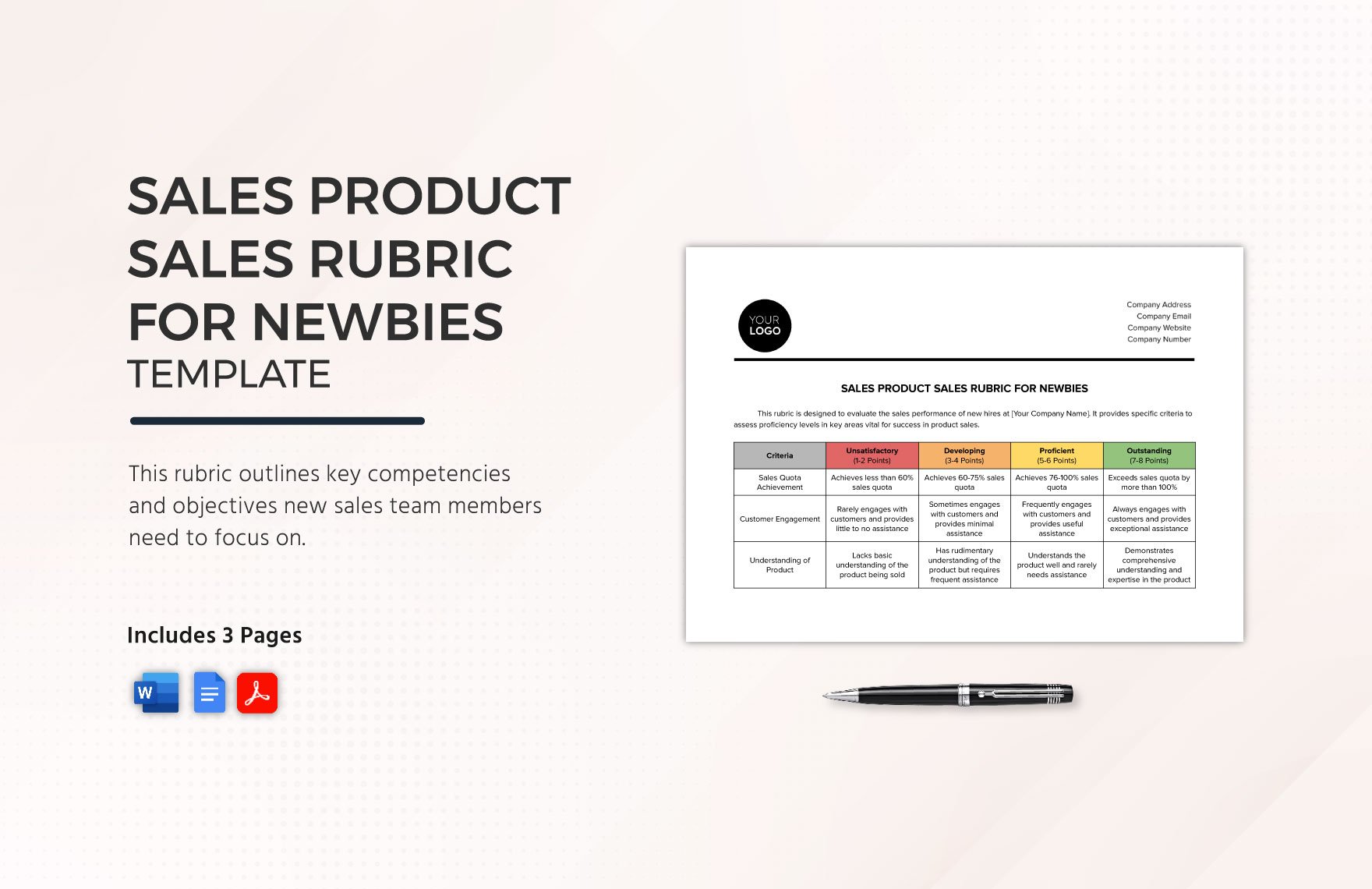

Sales Product Rubric for Newbies Template

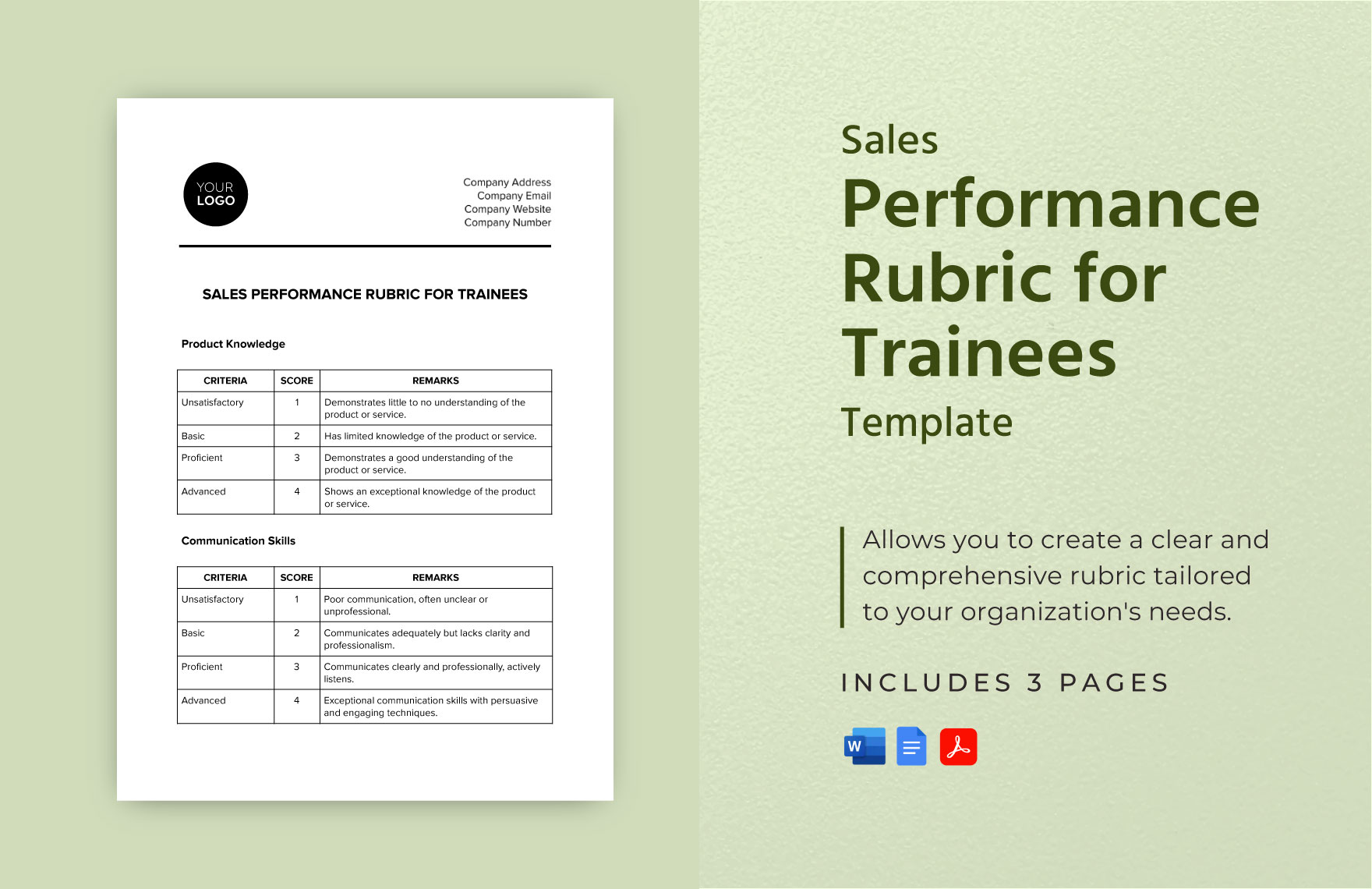

Sales Performance Rubric for Trainees Template

Sales Seasonal Proposal Rubric Template

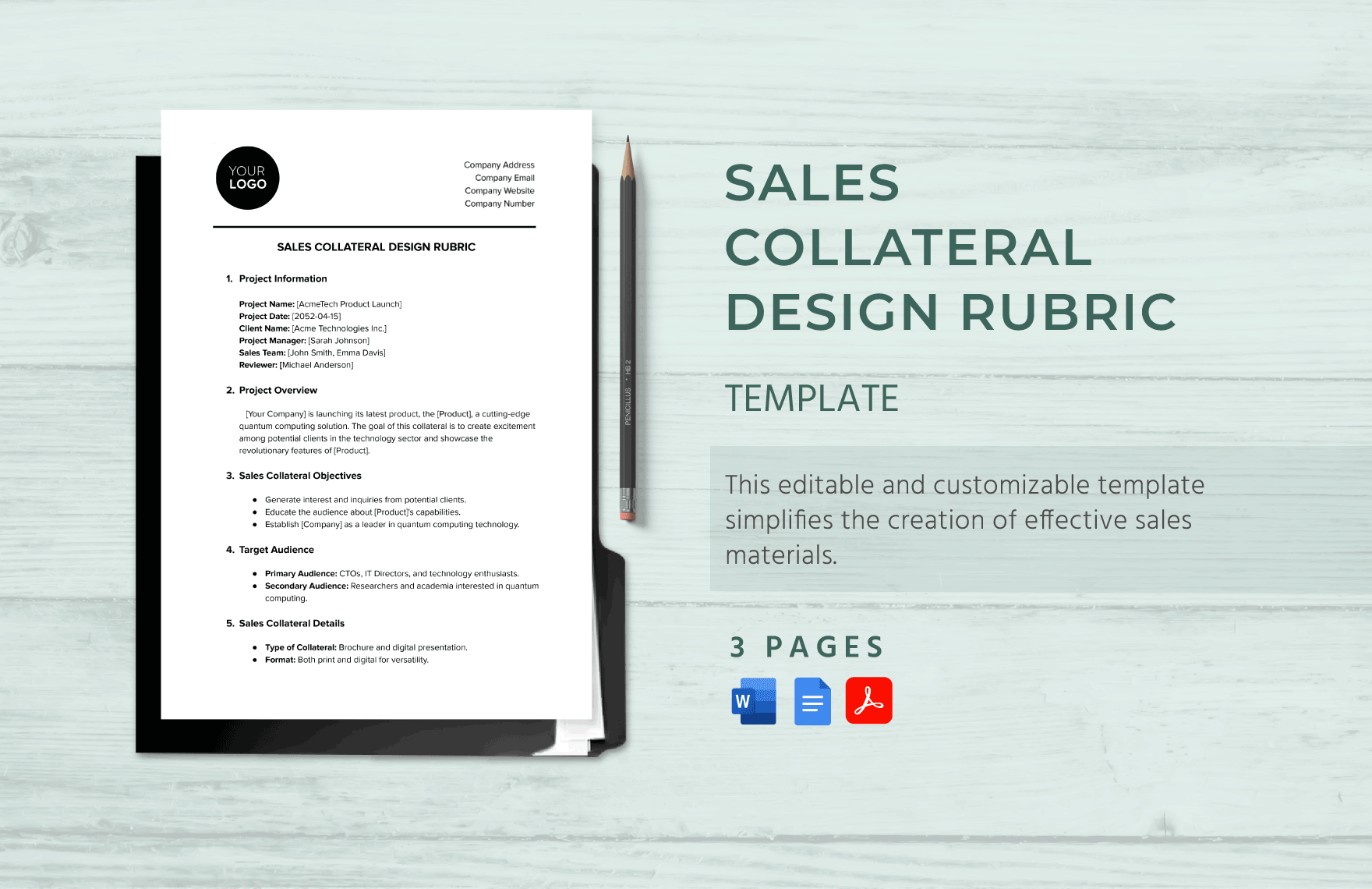

Sales Collateral Design Rubric Template

Sales Standard Quote Rubric Template

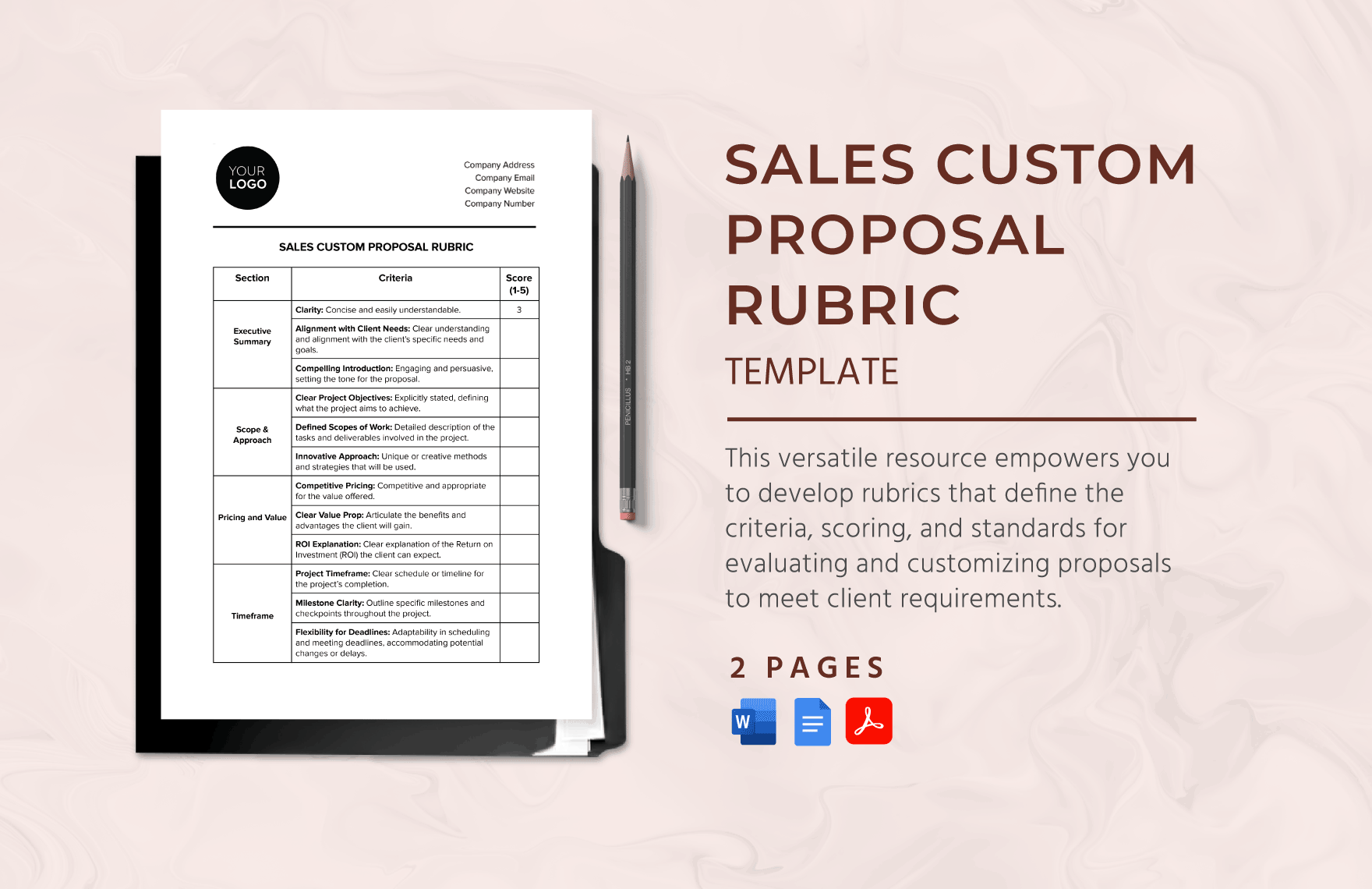

Sales Custom Proposal Rubric Template

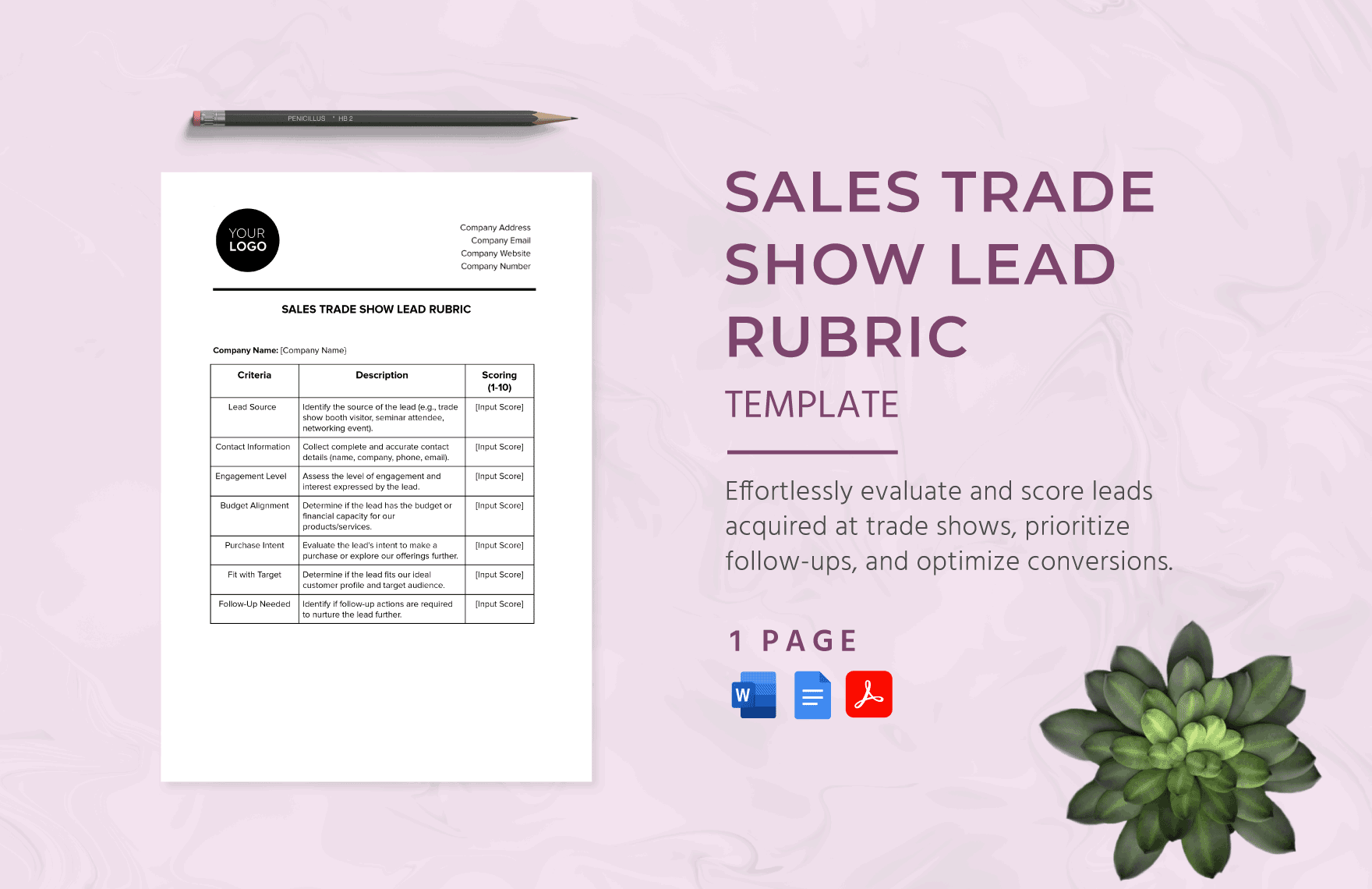

Sales Trade Show Lead Rubric Template

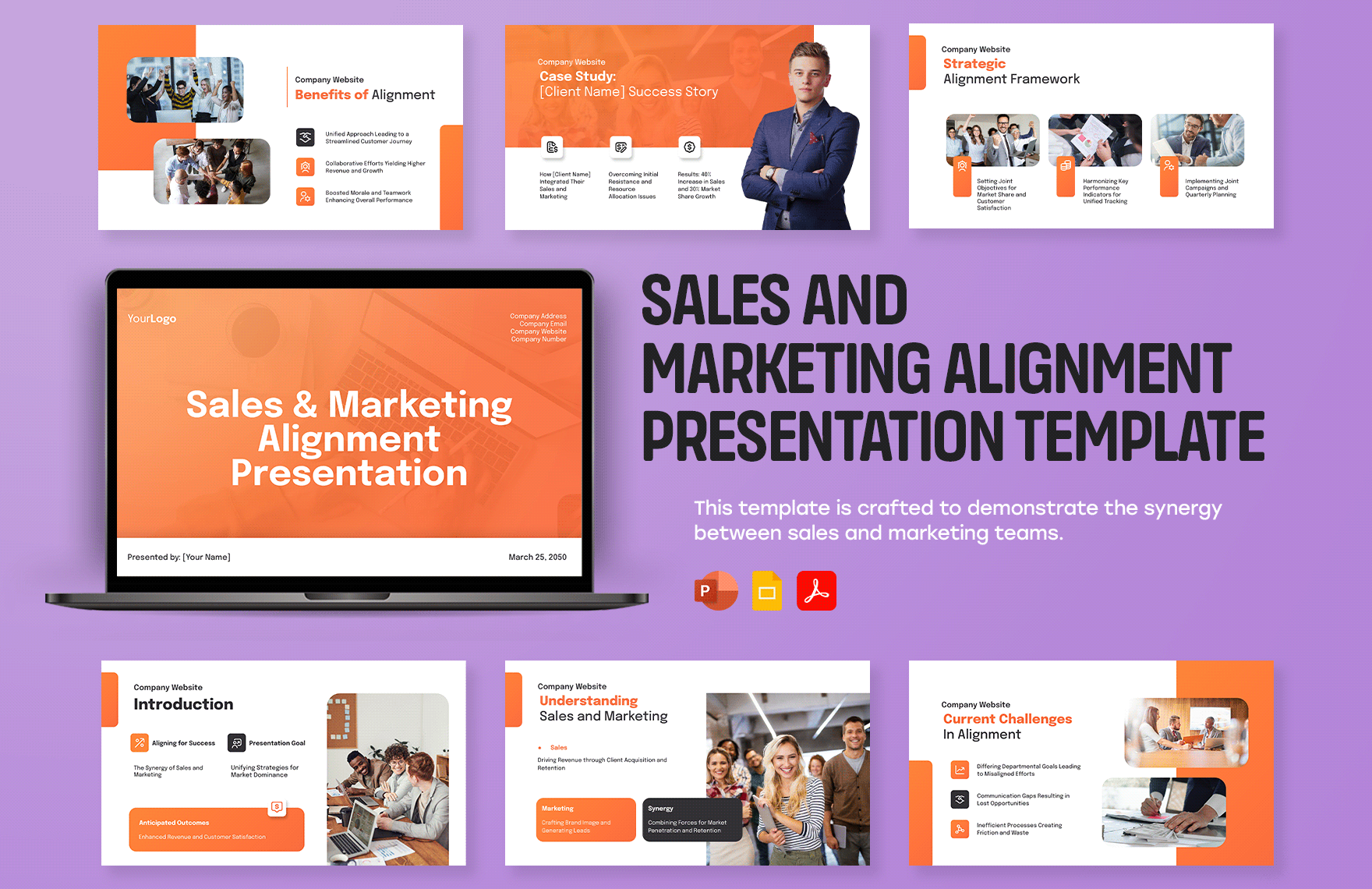

Sales and Marketing Alignment Presentation Template

Sales Customer Testimonials Presentation Template

Sales Product Demonstration Presentation Template

Sales Tools and Resources Presentation Template

Sales New Product Launch Presentation Template

Sales Strategy and Planning Presentation Template

Sales Product Overview Presentation Template

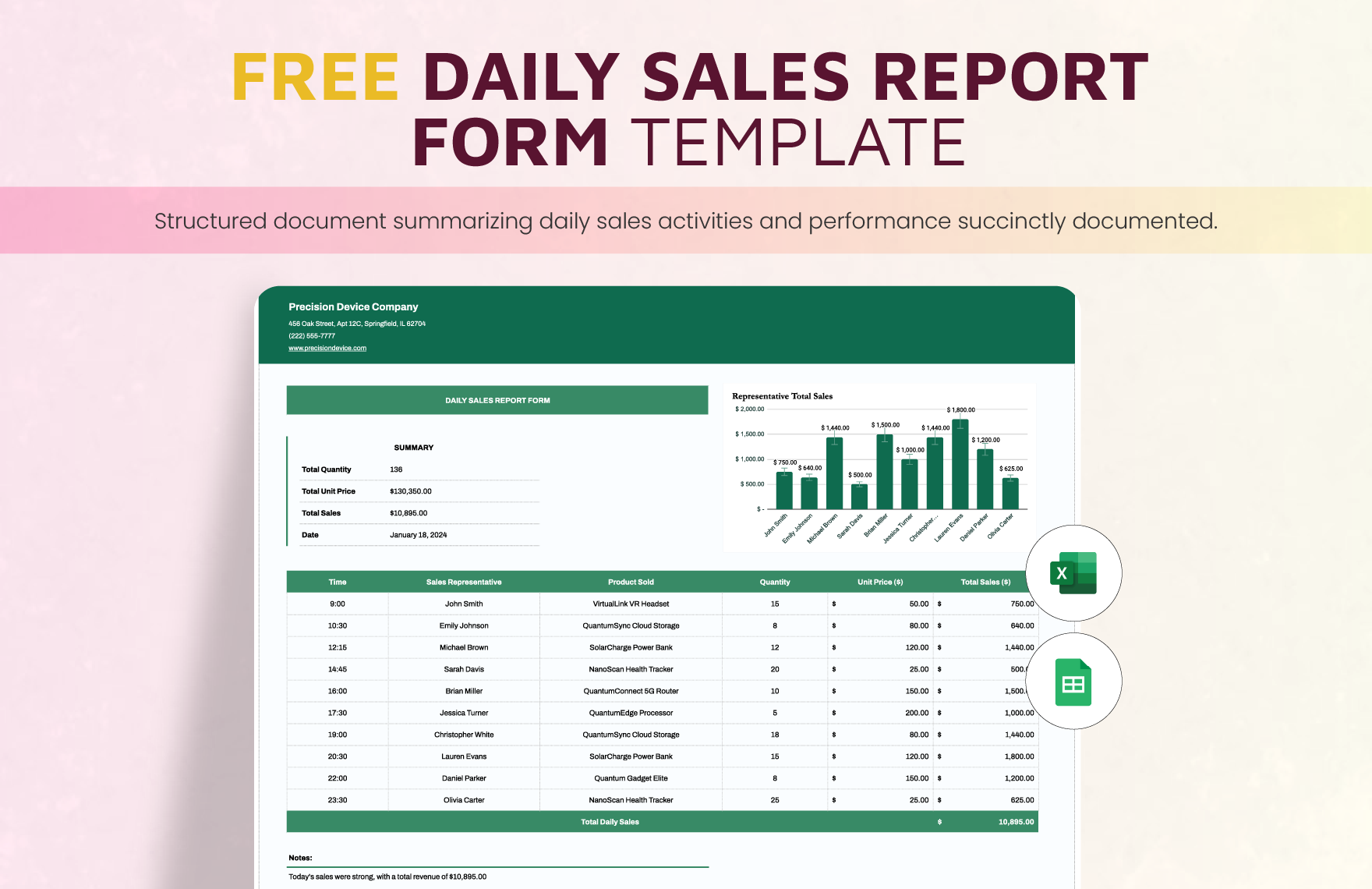

Daily Sales Report Form Template

Sales Customer Correspondence Logo Template

Sales Report on Networking Event ROI Template

Sales Training Materials Portfolio Template

Sales Chart Template

Sales Team Budget Template

Sales Pipeline Analysis Template

Annual Car Sales Dual Chart


How To Write A Perfect Sales Pitch: Best Practices, Examples, And Templates

When I hear the phrase ‘sales pitch,’ I have ambivalent feelings about it. On the one hand, it’s inevitable, something every sales rep has to deal with. On the other hand, there’s, well, a negative shade to it. Pitch? Really? I don’t like people pitching me anything.

Mulling over this confusion, I dare to infer: a good sales pitch can’t be pitchy.

Otherwise, it will leave your prospects with a bad feeling.

But what makes a sales pitch good? In this post, I’ll answer this question and share sales pitch examples and templates to make your pitch not pitchy but perfect.

- What is a sales pitch?

- Elements of a good sales pitch

- How to make a sales pitch

- Sales pitch templates

A sales pitch is a concise sales presentation in which a salesperson makes a sales offering. They explain their business and non-intrusively show the value of their product/service. Salespeople commonly make their sales pitch at least once a week, so for sales teams, this is a regular part of the sales process.

You might deal with various sales pitch types depending on which channel you use for it:

- Cold calling. ‘Call the damn leads’ – the phrase you might have heard hundreds of times, which reflects how you can reach a sales prospect with your offering – by phone.

- Email outreach. As an alternative to calling a prospect, you can use email to present your offering.

- Social selling. You can contact your prospects on various social media platforms like LinkedIn, Twitter, Facebook, Instagram, and more.

- Elevator pitch. You typically use it at business events or when meeting someone in your industry for the first time.

Interestingly, you might come across the term ‘elevator pitch’ as just a synonym for ‘sales pitch.’ It emphasizes the very short time frame within which a sales pitch should be made – within the time of a single elevator ride.

I won’t tell you that your sales pitch must have a strict structure. To be honest, I prefer to deal with creative sales reps who allow themselves some freedom, as they sound more personal and come across as more credible.

Still, creativity should follow knowledge. So, if you want to understand how a good sales pitch differs from a bad one, I would say that a good sales pitch is commonly based on 6 essentials, and I advise that you keep them in your pitch.

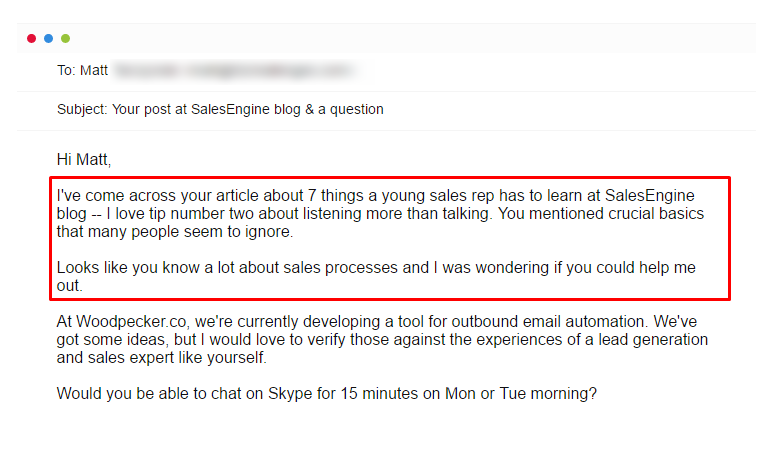

When you contact a person for the first time, you can’t expect them to embrace you with both arms wide. Just put yourself in their shoes; what would you think? I bet you’d think, ‘What do you want from me?’

There must be something that will show them you are not a stranger – a good hook. As a salesperson, you should do thorough research and find information about the prospect that will let you catch their attention from the start.

You’ve read a prospect’s post? You’ve heard their company launched a new product? Or maybe you’ve just looked through their LinkedIn bio and think you have much in common? All this information can work well.

Here are some examples of hooks you can use:

“I see you’ve been promoted to the position of ___. Congratulations!”

“I’ve read your post about ____. I find your tips really useful.”

Alternatively, start your pitch with a direct explanation of why you’re contacting a person:

“The reason I’m calling/emailing is that ____.”

Even after impressing the prospect with your hook, you’re still a stranger to them. It’s time to tell them a bit about your company. Just be careful here: you might be tempted to speak/write a lot. Resist it. Your intro must be short and straightforward, something like this:

“I am a sales manager at ____. Our company specializes in ____.”

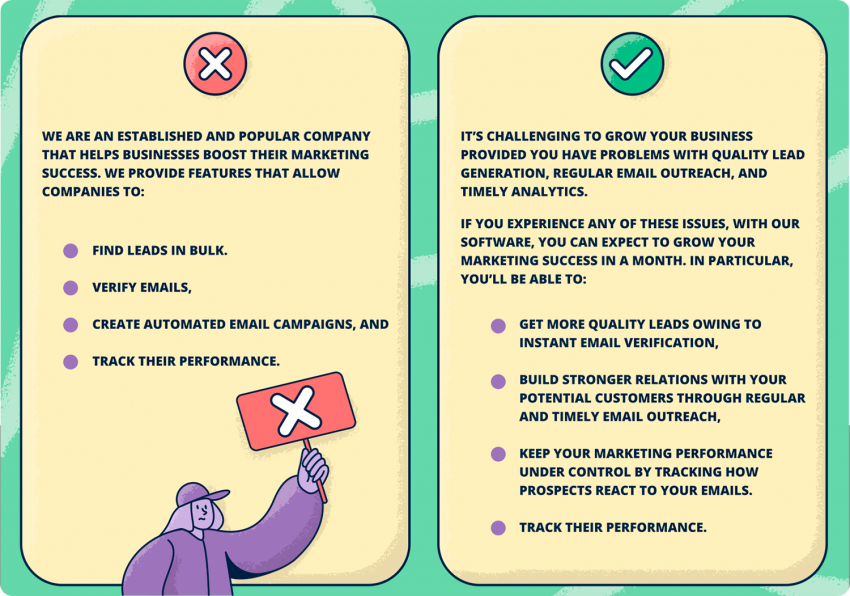

3. Pain points

You’re making a sales pitch without pitching, remember? In your sales pitch, you’re not someone who is selling; you’re someone who is helping the prospect solve their problem. Your task is to identify your prospect’s pain points and highlight how your solution can help.

For example:

“I’ve read your company is using multiple services for ____, _____, and _____. It looks like you’re spending a lot of money on monthly subscriptions while your team has no single platform for cooperation.”

4. Benefits

I would say that’s the most crucial element of your pitch, your best moment to convince the prospect to buy your product/service.

Sadly, salespeople very often confuse benefits with features. Don’t do this. In fact, your prospects don’t want to hear how excellent your solution is. They want to hear what they’ll get; they want a result.

Provide them with your value proposition.

Try to create a vision of the success your prospect will experience after trying your solution. Will they become more productive? Will they save money? Will they grow their revenue? You should know the particular benefits your prospect will get and state them clearly, ideally with facts and figures.

For instance:

“With our tool, you’ll be able to manage all your workflow on one platform. This will help you enhance your productivity, freeing up to 5 hours daily, which your team can spend on the most important tasks, and saving 30% of your budget.”

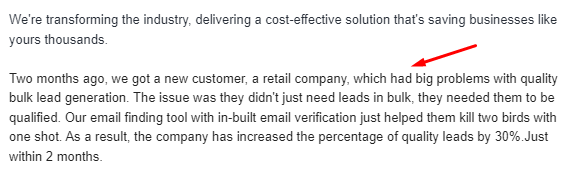

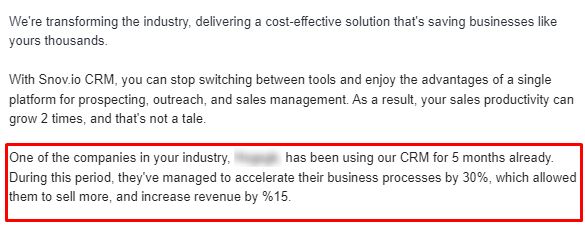

About 72% of customers say positive testimonials increase their trust in a business. That’s because people need proof, so give it to them.

A good way to do this is to reference companies that are your current customers, especially those that are your prospect’s direct competitors. And don’t forget to support it all with facts and numbers.

“We have been able to help companies like _____ grow their productivity by 30% and increase revenue by 15%.”

6. Call to action

The closing element of your sales pitch should hint at further cooperation with the prospective customer. Here I would advise you to ask your prospect an engaging question and call them to action, for instance, to get together for a sales interview. But don’t just schedule a meeting; concentrate again on the value it will bring to your potential client.

“What if we arrange a video call next week for me to show you how we have helped companies like yours specifically? Would it be worth your time to see how our solution could save effort and money?”

Now that you understand the basic elements of a sales pitch, let’s walk through some working tactics that will help you make your pitch irresistible.

How to make a sales pitch: best practices and examples

Do your research

Before making a pitch, the first thing to do is to study your prospect from different angles. You should be clear about who you’re pitching to, so don’t neglect to find the basic demographic and firmographic data, like a person’s name, position, and information about the company.

A good option is to rely on LinkedIn, from which you can collect lots of data, such as the company’s news, industry-related posts, and comments, and use it as a compelling hook for your sales presentation.

Use storytelling

Did you know that a great story can lead to the release of oxytocin, which creates a deeper connection between the storyteller and their audience? Not surprisingly, storytelling is considered one of the most powerful sales techniques.

I highly recommend that you build your pitch around a narrative. Tell your prospect how other companies started using your product/service and what improvements they got. If you feel your prospect is inclined to object to your offering, you can even tell a brief story of how you have overcome problems by adopting a new technology after several objections.

Focus on the prospect

Even if you use your own company as an example in your sales pitch, make sure you don’t get carried away and spend another long hour telling your prospect about your best functionality.

A good sales pitch is a story where the main hero is the prospect, not you. So concentrate on your prospect’s current challenges and the bright prospects they’ll have when they buy your offering.

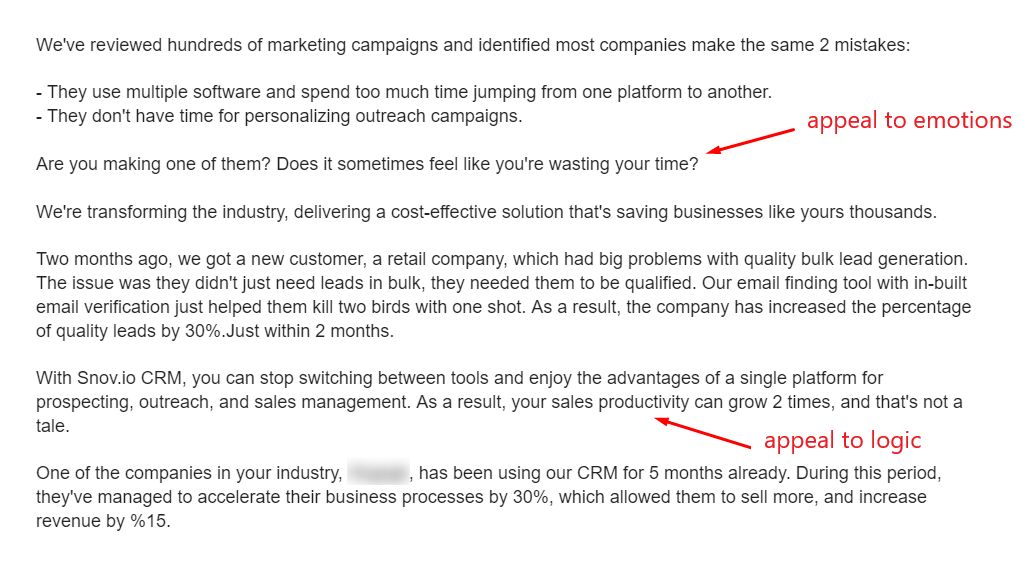

Balance between emotions and reason

In one of my previous posts about B2B sales psychology, I talked about the importance of appealing to emotions during a sales pitch. Here I would add that you should balance it with an appeal to the logical side.

You can appeal to emotions while talking about the prospect’s pain points, say, by asking them how they feel about their current problem. Or you can draw a positive picture of future improvements with your solution by asking them how they would feel if your product/service solved their problem.

Create the FOMO effect

FOMO (fear of missing out) is the feeling that others are enjoying advantages you are missing. In sales, you can use the FOMO effect as a psychological trick to stimulate your prospect’s motivation to buy.

Try telling them success stories of direct competitors who have been using your product/service for a while. I mentioned this in the previous section when talking about proof. This way, your prospects might feel anxious about missing out on something important their rivals already have in their pocket.

Personalize your sales pitch

Make sure your sales pitch is relevant to your prospect. Avoid a one-size-fits-all approach and focus on the specific needs and pain points of the company you’re going to sell to. And let me remind you again: do your research before you start your pitch and learn about your prospects, so you can address them personally, win their goodwill, and build trust.

Another way to build trust with your prospects is to position yourself as an industry expert. Why not add interesting facts to your sales pitch that your prospective customer might not know about?

For example, if your offering concerns a sales CRM, you can add some general information about the CRM market or statistics about how companies are adopting a new CRM. That will show you are well-versed in the subject and only add to the value of your offering.

Be prepared to handle sales objections

It hurts, but your sales pitch won’t always be accepted as something your prospect has been waiting for. Prospects do object, and yes, they do it quite often. Just be prepared to come up with counter-arguments to back you up.

Collecting a list of typical sales objections is important to the process of strategizing your sales pitch. When you know how to handle objections quickly, you’ll appear more credible and professional to the prospect.

It might feel strange to imagine yourself talking aloud, but you need to practice your sales pitch beforehand. Plan your presentation, including all the elements mentioned above, and rehearse what you’ll say and in what order, anticipating possible questions and prospects’ reactions to your sales pitch.

The top 5 sales pitch templates for your business

Wow, it seems you’re now ready to conquer the hearts of your prospects. Just one last bonus – I’ve prepared 5 templates to support your sales pitch email efforts.

Just remember: templates are fine, but your pitch must be highly personalized, so use them as convenient backing for your creativity.

Sales pitch email template #1 – Sales introduction


Use this template if your prospect hasn’t heard about you before. Your key goal here is to give them a reason to start communicating with you, so prepare a hook and demonstrate you’ve done your homework by researching the company you’re going to pitch to.

Sales pitch email template #2 – Prospect’s website visit


Never miss a chance to make a pitch to a prospect who has visited your website. You don’t need to look for a specific hook in this case, as you’ve got one already. This template will help show you are attentive to your website audience and ready to help immediately.

Sales pitch email template #3 – Responding to content

Most of your prospective customers publish content regularly, usually blog articles. This is a wonderful opportunity to use one of their posts as a hook to build links and make a sales pitch.

Sales pitch email template #4 – LinkedIn connection email template


LinkedIn is one of the best platforms for getting new customers, so once your prospect has accepted your connection, you can use it as a hook for making a non-intrusive sales pitch. You can do this through LinkedIn messages, InMails, or email. The latter is a better way to work around LinkedIn limits and restrictions.

Sales pitch email template #5 – Objection handling

This template will help you stay in the game even after your prospect objects. As you see, a bit of storytelling can save the situation. If you don’t have a similar story to share, you can always use one of your customers’ use cases.

Wrapping up

A sales pitch is an inevitable part of your job as a sales rep. And while there are dozens of prospects who have negative associations with it (yes, just like me), you already know that making a good sales pitch is possible without being pitchy.

I hope all the above tips, examples, and templates will help you come up with a sales pitch that will melt your prospects’ hearts the way none ever did. Meanwhile, Snov.io will take care of your sales process from start to finish.


New Business Concepts Pitch Guidelines | Social Ventures Pitch Guidelines | Ten Questions That You Should Try To Answer

New Business Concepts Pitch Guidelines

New Business Concepts will be evaluated on the following judging criteria.

- How well was the concept explained?

- How reasonable, sustainable, and scalable is the new concept?

- Is there a genuine need for the product or service?

- How well was the target market defined?

- What is the size and growth of the market?

- What is the consumers' willingness to pay for the product/service?

- Is the description clear?

- Is the product feasible?

- How easily can it be duplicated?

- Is there a presence of potential substitutes for the product?

- Have the current and potential competitors, competitive response, and analysis of strengths and weaknesses been adequately defined?

- How realistically defined is the marketing plan?

- Does the plan adequately address price, product, place, and promotion?

- Are resources sufficiently allocated for marketing?

- What is the likelihood of securing resources required for production?

- Is there an ability to operate competitively and grow?

- Does the team exhibit the experience and skills required for operation?

- What is the depth and breadth of the team's capabilities?

- Does the team demonstrate the ability to grow with the organization and attract new talent?

- How compelling is the business model?

- Have the resources required for the venture been addressed?

- Has the team clearly and adequately presented a breakeven analysis?

- How reasonable are the financial projections?

- Are there prospects for long-term profitability?

- Did the entrepreneurial team explain funding?

- Were offerings to investors and anticipated returns clearly explained?

- Did the team calculate a realistic valuation?

- How feasible is the exit strategy?

- Did the presenter(s) engage the audience and hold their attention?

- Did the presenter(s) appear to speak with confidence and authority?

- Were visual aids (e.g., PowerPoint® slides) clear and valuable?

- Was the pitch exciting and compelling?

- How efficiently did the team allot their time?
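As a rough illustration of how a judging panel might turn criteria like these into numbers, here is a minimal Python sketch. It assumes a 1 to 5 scale and equal weighting for every question; the guidelines above specify neither, and the `summarize` helper and the sample scores are hypothetical.

```python
from statistics import mean

# Assumed scoring scale; the guidelines do not define one.
SCALE = (1, 5)


def summarize(scores_by_question):
    """Return (per-question averages, overall average) for judge scores.

    scores_by_question maps a criterion question to the list of scores
    that the individual judges gave it.
    """
    low, high = SCALE
    averages = {}
    for question, scores in scores_by_question.items():
        if not scores:
            raise ValueError(f"no scores for: {question}")
        for score in scores:
            if not low <= score <= high:
                raise ValueError(f"score {score} is outside {low}-{high}")
        averages[question] = mean(scores)
    # Equal weighting across questions is an assumption, not a rule.
    overall = mean(list(averages.values()))
    return averages, overall


# Hypothetical scores from three judges on three of the criteria above.
example = {
    "How well was the concept explained?": [4, 5, 3],
    "How well was the target market defined?": [3, 3, 4],
    "Was the pitch exciting and compelling?": [5, 4, 5],
}
averages, overall = summarize(example)
```

Averaging per question first keeps a criterion scored by fewer judges from being underweighted; a real panel may prefer weighted categories instead.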

Social Venture Pitch Guidelines

Social Ventures will be evaluated on the following judging criteria.

- Does the proposed venture address a significant and critical social problem?

- Does the proposed venture adequately describe the problem it hopes to address and have defined parameters within which it plans to operate?

- Does the entrepreneurial team possess the skills and experience required to translate the plan into action?

- Can they demonstrate the passion, commitment, and perseverance required to overcome inevitable obstacles?

- Is the team comprised of individuals committed to ethical standards?

- Does the proposal approach the social problem in an innovative, exciting, and dynamic way?

- Does the initiative aspire towards clear, realistic and achievable goals, while thinking big?

- Can it be implemented effectively?

- Are there clear and coherent schedules, milestones, objectives, and financial plans?

- Has adequate attention been given to the way in which the product or service is to be produced and/or delivered?

- Do they have, or can likely secure, the resources required for production?

- Will they be able to operate competitively and grow?

- Does the proposed venture include adequate strategies for fundraising and income generation?

- Does it consider the different dimensions of financial and social sustainability in a conscientious manner?

- How will the implementation of this social venture benefit the community and the multiple stakeholders involved?

- Is there the potential for significant social impact and engagement of the broader community?

* While there is some debate regarding the precise definition of a social venture, and what exactly differentiates it from a traditional for-profit business, the Selection Committee and Judging Panel will use the following criteria:

- PRIMARY MISSION - is the organization's primary purpose to serve its owners (New Business Concept) or society (Social Venture)?

- PRIMARY MEASURE OF SUCCESS - does the organization measure its success primarily by profitability (New Business Concept) or positive social change (Social Venture)?

Ten Questions That You Should Try To Answer

Whether pitching a New Business Concept or a Social Venture, try to address the following ten big questions as completely as possible. Remember, you should not simply talk about a general idea (those are "a dime a dozen"); rather, try to present a concise concept with a clear economic model, convincing everyone that you can actually make it happen.

1. What's the PROBLEM?

2. What's your SOLUTION?

3. How large is the MARKET?

4. Who is the COMPETITION?

5. What makes you so SPECIAL?

6. What's your ECONOMIC MODEL?

7. How exactly will you achieve SALES?

8. Have you assembled a qualified TEAM?

9. How will you secure required RESOURCES?

10. What are you proposing for an INVESTMENT?

Suggested reading: The Art of the Start by Guy Kawasaki (Penguin 2004), especially Chapter 3, "The Art of Pitching"

Copyright 2016· All rights reserved


14 Winning Sales Deck Examples (& How to Make One)

Written by: Christopher Jan Benitez

If you’re serious about generating leads and closing deals, you need a sales deck presentation that wins.

A sales deck is a powerful product presentation you show to potential clients to showcase products or services. It’s basically an elevator pitch in digital form.

Here’s the good news. Creating a custom sales deck isn’t as difficult as it sounds. There are plenty of examples to take inspiration from. And when you’re ready to design a sales deck for your business, use a Visme sales deck template to create one in minutes.

Here’s a short selection of 8 easy-to-edit sales deck templates you can edit, share and download with Visme. View more templates below:

Table of Contents

- What Should a Sales Deck Include

- 14 Best B2B Sales Deck Examples

- How to Build a Winning Sales Deck in 4 Steps

- Sales Deck FAQs

- Get More Sales With an Amazing Sales Deck

- Sales decks are essential tools for sales teams to make sales and close deals regularly.

- To create a winning sales deck, follow the standard formula and add your brand’s unique visual messaging and guidelines.

- Get inspired by the best sales deck examples in the list below and learn how to apply Visme sales deck templates that achieve similar layouts and designs.

- Create your own sales deck with Visme in 4 steps.

- Supercharge your sales content by watching the replay of our webinar.

What is a Sales Deck?

A sales deck is a visual presentation used in sales pitches to guide potential customers through a company's story, product or service. It's like a roadmap to portray why a potential customer should choose your offering.

It lays out your value proposition, the problems you solve and how your solution is unique. It's a useful tool that helps you communicate effectively and persuasively, making it easier to close deals.

The best sales decks are the ones that combine the regular with the extraordinary. Follow a formula people expect and add your personal brand touch to make it special and different. Visme has everything you need to create branded sales decks that convert.

Here is a trusty outline to follow when building sales decks:

- Introduction to the product and the market.

- The problem or pain point the audience has.

- Showcase your product or service as the solution to the problem.

- Highlight the product or service features.

- Cost or investment.

- Closing and thanks.

- A dose of storytelling and emotional connection throughout the slides.

If you’re looking for how to create a pitch deck, read our guides about what a pitch deck is and the best pitch deck examples to inspire your own.

Do you want to start a presentation but don’t know where to begin? These B2B sales deck examples are a great reference point. See which of these sales pitch deck designs best resonates with you and your brand.

Did you know that Visme is a practical tool not just for product sales teams but also for marketing, content, and communication teams?

This is what Anne McCarthy, the Senior Director of Learning Experience at EmployBridge, had to say about that: “My whole team has been using Visme for several years, but now seeing the kind of work we’re producing, our marketing team wanted to start using Visme.”

150birds’ sales deck is an example of a simple sales deck. Unless there’s a need to use more, most slide pages only use two colors, making it easier on the eyes. You’ll also notice the frequent appearance of birds which not only references the company name but also ties all pages together visually.

Why does this work?

A simple design coupled with a fun and colorful visual element like a mascot attracts the right type of attention.

Is there a Visme template similar to this?

The Cosmetics Company Investor sales deck also uses two tones for the most part. It should be able to accomplish the same effect. Visme lets you add animated characters in your presentations if you want to have a figure that serves the role of a mascot.

Brandon Global IT

When exploring effective sales pitch deck examples, Brandon Global IT's presentation stands out. It communicates so much with very little. The design is flawless, as the person behind the sales deck focused more on text than other design elements. If you’re selling a product or service that requires a lot of explanation, a clean look like the one featured here is the way to go.

This sales deck design takes a minimalist approach to present products and services that could appeal to a particular clientele.


Visme has everything you need to increase the value of your presentation. This simple yet high-impact slide deck has a minimalist design. It embraces simplicity on every slide and prioritizes the essential components of your presentation.

CallTools proves that you don’t need graphic design experience to develop a sales deck. While this sales deck uses stock photos to represent the business, you can replace the images with photos of your employees and products so potential clients can connect with your business.

Aside from using stock photos, CallTools’ sales deck wins because it communicates its message to its audience in a clear and concise manner. The sales pitch deck focuses on the tool’s unique features to help set it apart from its competitors.

Visme’s Work+Biz Pitch Deck allows you to highlight your audience's problems and offer your services as solutions. It has placeholder slides where you can input your data and feature high-resolution stock photos from the platform’s extensive library to help make a stronger case for stakeholders.

High-quality visuals can significantly enhance your presentation's vividness and overall impact. Use Visme's AI image editing tools to unblur, upscale, touch up and edit images for your presentation. You can sharpen blurry images, enhance small pictures without quality loss, make minor adjustments and tailor images to fit your design.

Epic Media Group

Businesses rarely use a dark color scheme for their sales deck design, but that’s precisely what Epic Media Group did. Making black the dominant color in your design conveys seriousness, which is the tone that some businesses want to portray. Also, using red to highlight certain words and phrases helps drive the point home.

A darker color scheme is easy on the eyes, which works for some people. It also evokes a certain mood that can complement some products or services.

Patch uses green not only in its logo and design elements but also in the images across the sales pitch deck. The use of color makes sense in this context—green signifies the environment and your community, and Patch is about helping your brand reach out to more local customers.

Placing all the elements on top of a white background makes this sales deck appear clean and professional.

This sales deck is a winner because it explains why people should advertise themselves with Patch and how it does. The presentation also discusses what makes the platform different and arguably better than other similar sites.

Not only is the Interior Design presentation a beautiful template to work with, but it also uses green and other cool colors. It has a soothing effect that makes the sales deck easier to read.

Spectrum Magazine

A magazine like Spectrum knows how to use beautiful images to capture the attention of its readers. And it does a great job with its sales deck too. The sales deck’s layout feels professional right to the last page. Any potential advertiser would be happy to work with this team.

Spectrum Magazine is using one of its best assets — photos. If you have great photos to share, you can do a layout similar to what Spectrum has done.

The Lete Events Pitch Deck template has a layout style similar to the one in the example. It features a rich blend of light and dark colors that helps the audience grasp information quickly. It has stunning images, several stock photos, quality vector icons, and stylized content blocks. Users can easily customize this pitch deck by adding data visualizations and other features.

SteadyBudget

The SteadyBudget sales deck is yet another example of how simplicity can effectively deliver a message. And because this particular sales deck makes excellent use of clean lines and background, every chart and graph that was added pops out of every slide.

If you’re looking to present a lot of visual aids in your sales deck, opting for a simpler sales deck design might be the safest choice.

The clean design and the use of colors in lines make this sales deck so easy to look at. This is ideal for sales decks full of complex figures like charts and graphs.

While it’s not exactly a presentation about PPC software, the ToughSEO pitch deck shares similarities with the above. It discusses how the platform can help your business increase its online visibility via SEO. The deck also shares the company's timeline history, its current analytics and metrics, and how it plans to use the funding it receives.

Another way to make visually pleasing sales decks is to play with the typography. That is what the designer did with this Airbnb sales deck. By using a few visual elements, the focus is placed mainly on the message.

This sales deck is also easier to digest because it only contains vital information.

Using different fonts is a great way for non-graphic designers to keep their sales decks visually interesting.

The Airsns pitch deck should help you win more stakeholders if you're developing an Airbnb alternative. It details the market to help validate your product idea before moving on to its features. Then it discusses your marketing strategy and lists your competitors and what makes your app different from them.

This sales deck for Snapchat—just like the company—appeals to a younger generation. The way the background colors change as you move through the slides is excellent for keeping readers alert. It helps that Snapchat’s branding uses just the right yellow hue to bring important slides to life.

The youthful appearance of this sales deck will win over potential advertisers for the brand. And it could also work for you if you share the same target market.

The IworkUwork sales deck template comes pretty close. It uses the same yellow accent color to brighten up each slide.

The Tealet sales deck design is one of the more complicated ones on this list. It uses custom graphics to drive home all the data the business needs to present.

If you have the budget to hire a designer or have some graphic design knowledge, you should aspire to create a sales deck just like this.

This sales deck has professionalism stamped all over it. From the images used down to how the copy is presented, it’s a cut above the rest.

The agriculture startup pitch deck delivers a unique product to the market that cuts out the middlemen and allows farmers to get higher profit margins. The presentation discusses how the app works, its business model, and its benefits. It also shares financial projections to instill greater stakeholders' confidence in the product.


Lunchbox showcases its services with a visually appealing sales deck, employing minimalist design and punchy colors to capture attention. The layout allows for easy readability while engaging graphics make complex information digestible. Simple graphics, alongside impactful stats, convey Lunchbox's value proposition and competitive positioning.

Lunchbox's sales deck stands out due to its minimalist design and vibrant colors. It utilizes engaging graphics and a clear layout to present complex information in an easily digestible manner and showcases the company's unique strengths effectively.

The e-commerce pitch deck template on Visme is indeed comparable to the Lunchbox example. It's a vibrant, visually appealing template with modern layouts to showcase unique selling propositions, customer acquisitions, revenue streams, and data analysis.

The template caters mainly to online store owners, strategists and digital marketers aiming to convert eCommerce data into visually striking presentations. It's undoubtedly a good template to start crafting a sales deck like Lunchbox's.

Vue Storefront


The Vue Storefront sales deck boasts a design that's dynamic and intuitive. They use large, bold fonts for key messages and break down technical concepts with visual aids. The deck's compelling storytelling is backed by data and customer testimonials. Also, they used a green color scheme throughout the presentation to reflect their brand personality.

The deck's design establishes brand personality while simplifying complex tech concepts. Balancing engaging visuals and data-driven content offers a clear understanding of Vue's offerings and the complex problem they are solving with the front end.

The ClickChat pitch deck presentation template is quite similar to the Vue Storefront sales deck outline. This template features a striking green color scheme, attractive data visualizations, high-quality graphics and bold fonts.

It's an excellent option for creating a sales pitch deck that conveys key messages effectively, making it a great starting point for those looking to emulate the look and feel of the Vue Storefront presentation.

Do you need help in writing copy for your sales pitch deck presentation? Use Visme's AI writer tool for crafting compelling content for your presentations. It lets you generate text, create structured outlines and even edit and proofread your content.

The tool ensures your ideas are well-structured and language is error-free, making your presentation text engaging and polished.

Softr's sales deck showcases a clean, minimalist design emphasizing whitespace and a fresh aesthetic. The visually appealing presentation covers essential information using limited text, relying on iconography and subtle colors to guide the viewer effortlessly through their offering.

The minimalist approach reduces cognitive load, making it easier for viewers to focus on core ideas. The ample whitespace creates a pleasant, clutter-free experience, effectively capturing the audience's attention and making Softr's value proposition memorable.

Equipped with bright colors, catchy visuals and customizable charts and widgets, this template is an excellent match for those looking to create a sales deck similar to Softr's. The template allows you to convey complex ideas effectively while maintaining your branding.

Plum Fintech

Plum Fintech's sales deck features a striking blue and white color combination with diverse sans-serif typography usage. The layout is beautifully balanced, with well-curated graphics and a consistent design language that brings harmony and clarity to the overall presentation.

The uniform color scheme and variety in font styles enhance readability while creating an identity that's distinctive to Plum. The well-integrated visuals and typography inspire trust, facilitating a better understanding of Plum's financial solutions.

If you're looking to replicate the style of Plum Fintech's sales deck, the phonebook pitch deck presentation template may be a good fit. This Visme template offers a highly customizable base to create your own pitch deck. It helps present your business ideas in an appealing way to investors with its professional and stylish layout.

Additionally, read our article about the 13 powerful sales pitch presentation templates you can customize to create your own.

How to Create a Sales Deck in 4 Steps

As was said earlier, building a sales deck isn’t complicated at all, especially if you’re using a Visme template.

You can use Visme to create professional documents, including sales presentations.

So, where do you start with your presentation?

Here’s how you do it.

Do Your Research

A good sales deck should contain product features, statistics, pricing, and other information to help your leads figure out how your product can make a difference in their lives.

Before picking a template, you’ll want to have a rough idea of what your outline should be. From here, you can use storytelling to flesh out your outline by identifying the problem and introducing the product as a solution.

Find a Template

Visme has a rich library of customizable templates for any type of presentation. And the best part is that you can preview each one to see which of them perfectly fits your needs.

Business Templates

Ecommerce Webinar Presentation

Buyer Presentation

PixelGo Marketing Plan Presentation

Technology Presentation

Communication Skills - Keynote Presentation

Company Ethics Presentation

Work+Biz Pitch Deck - Presentation

Product Training Interactive Presentation


It’s important to note that you’re never stuck with what the template gives you. If you need to add or remove slides from the deck, you can. The same goes for adding or removing elements on each page.

You are in complete creative control over all the details that appear on your sales deck.

For example, if you don’t want to use the background image, you can delete it and replace it with something better.

This process becomes easier if you’ve used Visme before because you can use saved elements in new presentations. For example, if you already have a title slide, you can add that slide to your sales deck.

Any changes made to the original slide will automatically reflect across all presentations where the slide was used. The same principle applies to the dynamic fields feature that helps to easily update information throughout your projects and slides.

Customize Your Sales Deck

Visme is a drag-and-drop style builder. That means anyone—even those that don’t have any graphic design experience—can pull off a sales deck that looks professional from beginning to end.

You can change images, shapes, and text with ease. And there are thousands of visual elements that you can use to make your presentation shine. To find design elements quickly, use the / shortcut feature. Simply press / (forward slash) and type what you want into the pop-up search bar, or scroll to browse.

Changing numbers on charts and graphs is easy. Just select the data you want to update and select settings. From here, you’ll be able to select the value you want to update along with other cosmetic changes you’d like to make.

We’ve got other features worth mentioning. You can make short snippets of information with a call-to-action pop up when a user hovers over an element. Also, our tool lets you add links to other slides or external pages.

Another interesting feature is the live data integration which lets you connect charts on your slides to data from Google Sheets. That means if changes are made to the data from Google Sheets, the data in your sales deck is updated automatically.

Download or Share Your Sales Deck

Once you finish your sales deck, you can download your powerful business presentation and call it a day. However, you can share your sales deck with others in other ways.

You can send specific people private links to your Visme presentations. There is also an option to present your sales deck directly from Visme, which will preserve interactivity and animations if you add any.

Visme has an analytics feature that lets you measure the impact of your presentation. You can view how users interact with your presentation and determine how to further engage them.

Another option is to create your presentation with Visme's AI presentation maker. All you have to do is tell the tool what you want to create. Our smart Chatbot will ask you a few questions to tailor the presentation to your needs.

You can further edit your presentation design to include additional information, data visualization, images, etc.

Here are some of the most frequently asked questions about sales deck presentations.

What Is a Sales Deck?

A sales deck is a presentation that helps business owners, sales agents, sponsorship and partnership directors, and marketers sell more products to potential customers. It highlights specific problems a lead could have and how the product can help solve them.

What Is the Difference Between a Sales Deck and a Pitch Deck?

A sales deck is a type of presentation that is designed to convert leads into customers. This type of presentation primarily focuses on a specific product or service that a company offers.

A pitch deck is designed to sell a company to potential investors. Its content focuses more on the company’s vision, financials, and all products and services that the business offers.

What Should I Include in a Sales Deck?

A sales deck should identify the customer’s pain points, introduce the solution (your product), list product features, and get the customer to take action.

What Makes a Great Sales Deck?

First, you want a sales deck cover image that quickly grabs the reader's attention. Next, your sales deck should convey everything your leads need to know about your product, service or idea and nudge them to take action. You’ll also want to back up any claim with factual and accurate data and include a call to action.

Getting the most out of your startup requires you to secure funding from investors first. From here, you can successfully disrupt the market and create a profitable business selling a product or service.

To do that, you need a sales deck designed to close more deals and make more sales. The examples above should give you ideas on how to create your presentation.

If you don’t fancy yourself as a graphic designer, you can still create a stunning presentation with Visme. Use a Visme sales deck template to jumpstart the process.


About the Author

Christopher Jan Benitez is a freelance writer who specializes in digital marketing. His work has been published on SEO and affiliate marketing-specific niches like Monitor Backlinks, Niche Pursuits, Nichehacks, Web Hosting Secret Revealed, and others.

Nebraska FBLA

Future Business Leaders of America

Sales Presentation

Category: Prejudge Project, Presentation

Type: Individual, Team

Grade Level: 9-12

Deadline/Testing: 15-Feb

Competitor Limit: 1 Entry per chapter

Event Guidelines

Preliminary Round

- The presentation is a sales pitch and should be prepared for a product or concept with equipment used to present the sales presentation.

- Student members, not advisers, must prepare presentations.

- Visual aids and samples related to the product or concept may be used in the presentation.

- The competitor may use notes, note cards, and props.

- The competitor will provide the necessary materials and merchandise for the demonstration along with the product or concept.

- Facts and working data may be secured from any source.

- Comply with state and federal copyright laws.

- Follow the event rating sheet for items to consider in the presentation.

- Competitors will create a video of the sales presentation, which allows the judges to view the competitor as well as the presentation slides.

- Upload the video to YouTube as unlisted and disable comments.

- Save video as SalesPresentation_chaptername_year

- The preliminary round will be judged based on the presentation submitted online. The audio recording should be clear with the appropriate volume.

- Eight (8) finalists will advance to the final round.

Final Round

- The top eight (8) competitors will be notified of their eligibility and performance times prior to the conference.

- Competitors must be available to compete at the designated time in the program.

- Performance times for the presentations will be randomly selected by the state office staff.

- Five (5) minutes will be allowed to set up and remove equipment and other presentation items. Equipment may be connected or partially connected before entering the presentation room.

- Competitors must perform all aspects of the presentation (e.g., speaking, setup, operating equipment). Other representatives of the chapter or the adviser may not provide assistance.

- The competitor has seven (7) minutes to deliver the presentation.

- Visual aids and samples specifically related to the presentation may be used; however, no items may be left with the judges or audience.

- A timekeeper will stand at six (6) minutes and again at seven (7) minutes. When the presentation is finished, the timekeeper will record the time used, noting a deduction of five (5) points for any presentation over seven (7) minutes.

- Following each presentation, judges will conduct a three (3) minute question-answer period.

- The performance is open to conference attendees, except performing participants of this event. No audio or video recording will be allowed.
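The overtime rule in the final round can be written as a small piece of arithmetic. The sketch below is illustrative only: the names `final_score`, `raw_score`, and `seconds_used` are hypothetical, and it assumes that a presentation lasting exactly seven minutes is not penalized, which the guidelines do not spell out.

```python
# Values taken from the final-round guidelines.
TIME_LIMIT_SECONDS = 7 * 60   # seven (7) minutes to deliver the presentation
OVERTIME_DEDUCTION = 5        # five (5) points for any presentation over the limit


def final_score(raw_score, seconds_used):
    """Return the judges' score after applying the overtime deduction, if any.

    Assumption: only presentations strictly longer than seven minutes are penalized.
    """
    if seconds_used > TIME_LIMIT_SECONDS:
        return raw_score - OVERTIME_DEDUCTION
    return raw_score


# A presentation that ran 7 minutes 12 seconds loses five points.
print(final_score(90, 7 * 60 + 12))
```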

Eligibility:

Each local chapter may enter one team of 2-3 members or an individual in Grades 9 through 12.

Each presentation will be judged by a panel of judges. All judges’ decisions are final.

Who Goes to Nationals?

The first-, second-, and third-place winners of this event will represent Nebraska in the Sales Presentation event at the National Leadership Conference, provided they have not placed in the top 10 for this event at a previous National Leadership Conference.

fbla-rubric-salespres


iRubric: Shark Tank Sales Pitch rubric

Grade Levels: 6-8

Presentation Rubric

- Presentation

- Communication

- Engineering

Moscow Method


Product details

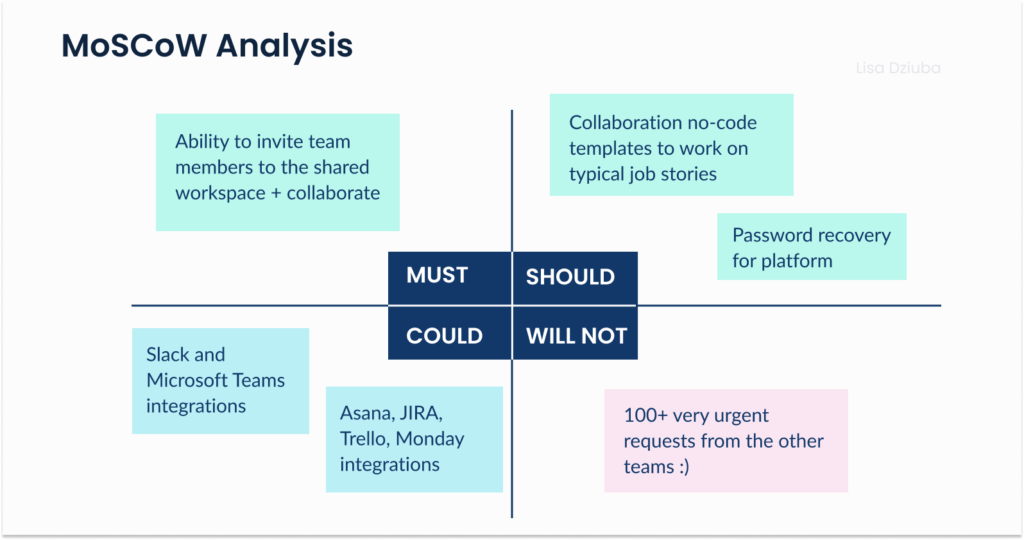

At its core, the MoSCoW method is simply a prioritization framework that can be applied to any kind of situation or project, but it works best when a large number of tasks need to be ruthlessly whittled down into a prioritized and achievable to-do list. The core aim of the process is to classify tasks into four buckets: Must, Should, Could and Won’t. As you can probably fathom, Must is the highest priority bucket, and Won’t is the lowest. You can also presumably now see where the funny capitalization in the term ‘MoSCoW’ derives from.

One of the primary benefits of a MoSCoW exercise is that it forces hard decisions to be made regarding which direction a digital product project will take. Indeed, the process is usually the first time a client has been asked to really weigh up which functions are absolutely fundamental to the product (Must), which are merely important (Should) and which are just nice-to-haves (Could). This can make the MoSCoW method challenging, but also incredibly rewarding.

It’s not uncommon for there to be hundreds of user stories at this stage of a project, as they cover every aspect of what a user or admin will want to do with the digital product. With so many stories to keep track of, it helps to group them into sets. For example, you may want to group all the stories surrounding checkout or onboarding into one group.

When we run a MoSCoW process, we use the following definitions.

- Must: These stories are vital to the function of the digital product. If any of these stories were removed or not completed, the product would not function.

- Should: These stories make the product better in important ways, but are not vital to the function of the product. We would like to add these stories to the MVP build, but we’ll only start working on them once all the Must stories are complete.

- Could: These stories would be nice to have, but do not add lots of extra value for users. These stories are often related to styling or ‘finessing’ a product.

- Won’t: These stories or functions won’t be considered at this stage as they are either out of scope or do not add value.

The first two slides of the template are similar in design and structure. These slides can be used to provide general information to the team about the client’s needs. The slides will be useful for the product owner, development team, and scrum master. The next slide groups user stories into vertical columns. You can also set a progress status for each user story. The last slide gives you the ability to specify the time spent on each user story. After summing up the time for each group, the team can understand how long it will take them to complete each group. All slides in this template are editable based on your needs. The template will be useful to everyone who uses the Agile method in their work.
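The time-summing step described for the last slide can be sketched in a few lines of Python. This is a minimal illustration, not part of the template: the `UserStory` class, the `hours_per_category` helper, and the sample stories and estimates are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Category order from highest to lowest priority, as defined in the text.
MOSCOW_ORDER = ["Must", "Should", "Could", "Won't"]


@dataclass
class UserStory:
    title: str
    category: str  # one of MOSCOW_ORDER
    hours: float   # hypothetical time estimate for the story


def hours_per_category(stories):
    """Sum the estimated hours of the stories in each MoSCoW category."""
    totals = defaultdict(float)
    for story in stories:
        if story.category not in MOSCOW_ORDER:
            raise ValueError(f"Unknown category: {story.category!r}")
        totals[story.category] += story.hours
    # Report in priority order, including categories with no stories.
    return {category: totals[category] for category in MOSCOW_ORDER}


# Made-up sample backlog for illustration.
stories = [
    UserStory("Pay for an order at checkout", "Must", 16),
    UserStory("Create an account", "Must", 8),
    UserStory("Save a card for later", "Should", 6),
    UserStory("Animated confirmation screen", "Could", 3),
    UserStory("Loyalty points", "Won't", 20),
]

for category, hours in hours_per_category(stories).items():
    print(f"{category}: {hours:g} h")
```

Summing per group this way makes it easy to see whether the Must group alone already exceeds the time available.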

Related Products

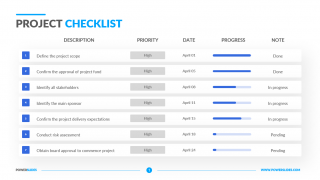

Checklist Template

Cohort Analysis

Action Item Template

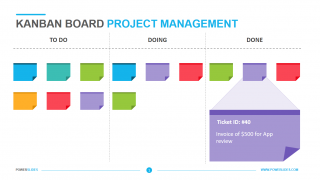

Kanban Board Project Management

Project Checklist

Customer Journey Map Template

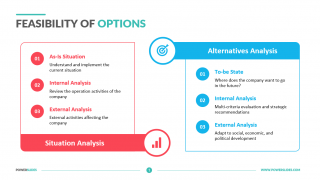

Feasibility of Options

Data Lake Architecture

Project Management Process

Action Plan Template


MoSCoW Prioritization

What Is MoSCoW Prioritization?

MoSCoW prioritization, also known as the MoSCoW method or MoSCoW analysis, is a popular prioritization technique for managing requirements.

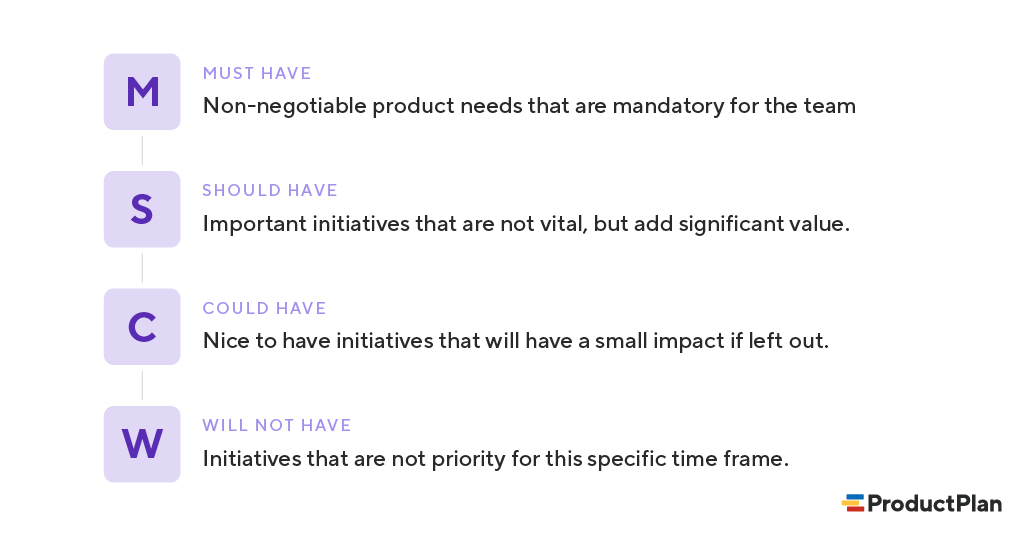

The acronym MoSCoW represents four categories of initiatives: must-have, should-have, could-have, and won’t-have, or will not have right now. Some companies also use the “W” in MoSCoW to mean “wish.”

What is the History of the MoSCoW Method?

Software development expert Dai Clegg created the MoSCoW method while working at Oracle. He designed the framework to help his team prioritize tasks during development work on product releases.

You can find a detailed account of using MoSCoW prioritization in the Dynamic Systems Development Method (DSDM) handbook. But because MoSCoW can prioritize tasks within any time-boxed project, teams have adapted the method for a broad range of uses.

How Does MoSCoW Prioritization Work?

Before running a MoSCoW analysis, a few things need to happen. First, key stakeholders and the product team need to get aligned on objectives and prioritization factors. Then, all participants must agree on which initiatives to prioritize.

At this point, your team should also discuss how they will settle any disagreements in prioritization. If you can establish how to resolve disputes before they come up, you can help prevent those disagreements from holding up progress.

Finally, you’ll also want to reach a consensus on what percentage of resources you’d like to allocate to each category.
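As a rough illustration of that last step, the sketch below turns agreed percentages into hours per category. The split of 60/20/20 and the 400-hour capacity are made-up numbers, and `allocate_capacity` is a hypothetical helper, not something the MoSCoW method prescribes.

```python
def allocate_capacity(total_hours, percent_by_category):
    """Split the available hours across categories using whole-number percentages."""
    if sum(percent_by_category.values()) != 100:
        raise ValueError("Percentages must add up to 100")
    return {
        category: total_hours * percent / 100
        for category, percent in percent_by_category.items()
    }


# Hypothetical agreement reached by the team before the analysis starts.
shares = {"must": 60, "should": 20, "could": 20}
capacity = allocate_capacity(400, shares)

for category, hours in capacity.items():
    print(f"{category}: {hours:g} h")
```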

With the groundwork complete, you may begin determining which category is most appropriate for each initiative. But, first, let’s further break down each category in the MoSCoW method.

MoSCoW Prioritization Categories

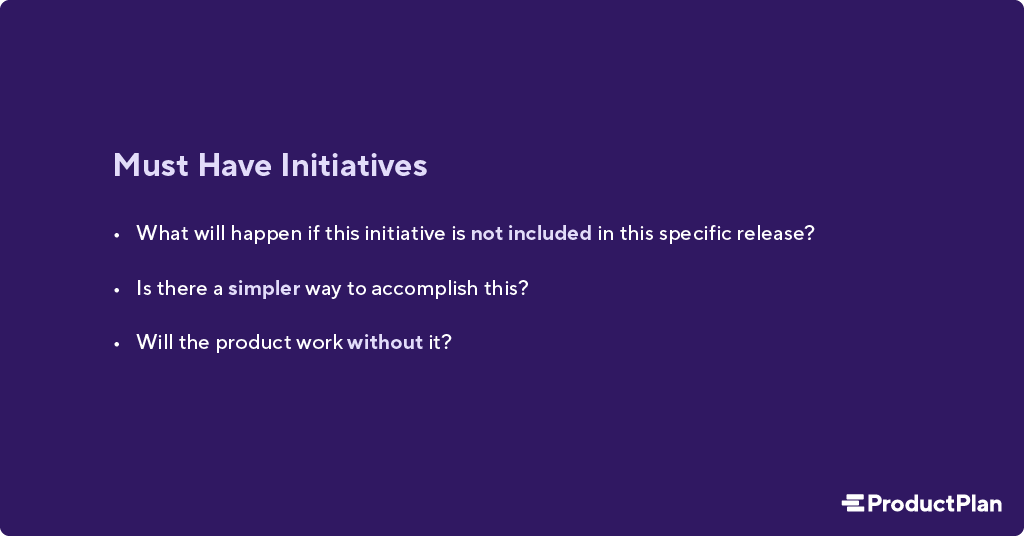

1. Must-have initiatives

As the name suggests, this category consists of initiatives that are “musts” for your team. They represent non-negotiable needs for the project, product, or release in question. For example, if you’re releasing a healthcare application, a must-have initiative may be security functionalities that help maintain compliance.

The “must-have” category requires the team to complete a mandatory task. If you’re unsure about whether something belongs in this category, ask yourself the following.

If the product won’t work without an initiative, or the release becomes useless without it, the initiative is most likely a “must-have.”

2. Should-have initiatives

Should-have initiatives are just a step below must-haves. They are important to the product, project, or release, but they are not vital. If left out, the product or project still functions. However, the initiatives may add significant value.

“Should-have” initiatives are different from “must-have” initiatives in that they can get scheduled for a future release without impacting the current one. For example, performance improvements, minor bug fixes, or new functionality may be “should-have” initiatives. Without them, the product still works.

3. Could-have initiatives

Another way of describing “could-have” initiatives is nice-to-haves. “Could-have” initiatives are not necessary to the core function of the product. However, compared with “should-have” initiatives, they have a much smaller impact on the outcome if left out.

So, initiatives placed in the “could-have” category are often the first to be deprioritized if a project in the “should-have” or “must-have” category ends up larger than expected.

4. Will not have (this time)

One benefit of the MoSCoW method is that it places several initiatives in the “will-not-have” category. The category can manage expectations about what the team will not include in a specific release (or another timeframe you’re prioritizing).

Placing initiatives in the “will-not-have” category is one way to help prevent scope creep. If initiatives are in this category, the team knows they are not a priority for this specific time frame.

Some initiatives in the “will-not-have” group will be prioritized in the future, while others are not likely to happen. Some teams decide to differentiate between those by creating a subcategory within this group.

How Can Development Teams Use MoSCoW?

Although Dai Clegg developed the approach to help prioritize tasks around his team’s limited time, the MoSCoW method also works when a development team faces limitations other than time. For example:

Prioritize based on budgetary constraints.

What if a development team’s limiting factor is not a deadline but a tight budget imposed by the company? Working with the product managers, the team can use MoSCoW first to decide on the initiatives that represent must-haves and the should-haves. Then, using the development department’s budget as the guide, the team can figure out which items they can complete.
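One way to picture the budget-driven approach is a simple pass over the must-haves and then the should-haves, funding each item while money remains. The sketch below is only an illustration under stated assumptions: the `plan_within_budget` function, the initiative names, and the costs are hypothetical, and a real team might prefer to stop entirely if any must-have cannot be funded.

```python
def plan_within_budget(initiatives, budget):
    """Fund must-haves first, then should-haves, while the budget allows.

    `initiatives` is a list of (name, category, cost) tuples.
    Returns (funded names, deferred names, remaining budget).
    """
    priority = {"must": 0, "should": 1}
    candidates = [item for item in initiatives if item[1] in priority]
    # sorted() is stable, so items keep their original order within a category.
    candidates = sorted(candidates, key=lambda item: priority[item[1]])

    funded, deferred, remaining = [], [], budget
    for name, _category, cost in candidates:
        if cost <= remaining:
            funded.append(name)
            remaining -= cost
        else:
            deferred.append(name)
    return funded, deferred, remaining


# Made-up backlog and budget for illustration.
backlog = [
    ("Audit logging", "must", 30),
    ("Faster search", "should", 25),
    ("Login", "must", 40),
    ("Dark mode", "could", 10),
    ("CSV export", "should", 20),
]

funded, deferred, remaining = plan_within_budget(backlog, budget=100)
print("Funded:", funded)
print("Deferred:", deferred)
print("Remaining budget:", remaining)
```

Could-have items are left out of the pass on purpose, since the text only mentions deciding on must-haves and should-haves before applying the budget.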

Prioritize based on the team’s skillsets.

A cross-functional product team might also find itself constrained by the experience and expertise of its developers. If the product roadmap calls for functionality the team does not have the skills to build, this limiting factor will play into scoring those items in their MoSCoW analysis.

Prioritize based on competing needs at the company.

Cross-functional teams can also find themselves constrained by other company priorities. The team wants to make progress on a new product release, but the executive staff has created tight deadlines for further releases in the same timeframe. In this case, the team can use MoSCoW to determine which aspects of their desired release represent must-haves and temporarily backlog everything else.

What Are the Drawbacks of MoSCoW Prioritization?

Although many product and development teams have adopted MoSCoW, the approach has potential pitfalls. Here are a few examples.

1. An inconsistent scoring process can lead to tasks placed in the wrong categories.

One common criticism of MoSCoW is that it does not include an objective methodology for ranking initiatives against each other. Your team will need to bring this methodology to your analysis. The MoSCoW approach works only if your team applies a consistent scoring system to all initiatives.

Pro tip: One proven method is weighted scoring, where your team measures each initiative on your backlog against a standard set of cost and benefit criteria. You can use the weighted scoring approach in ProductPlan’s roadmap app.
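A generic weighted-scoring calculation might look like the sketch below. It is not ProductPlan's implementation: the criteria, weights, ratings, and the `weighted_score` helper are all assumptions chosen for illustration, with cost criteria given negative weights so that higher effort lowers the score.

```python
def weighted_score(ratings, weights):
    """Return the sum of weight * rating over every criterion in `weights`."""
    return sum(weights[criterion] * ratings[criterion] for criterion in weights)


# Hypothetical criteria: two benefits (positive weights) and one cost (negative weight).
weights = {"customer_value": 0.5, "revenue": 0.3, "effort": -0.2}

# Hypothetical backlog, each initiative rated 1-10 on every criterion.
backlog = {
    "Single sign-on": {"customer_value": 8, "revenue": 6, "effort": 5},
    "Dark mode": {"customer_value": 4, "revenue": 2, "effort": 2},
    "Usage reports": {"customer_value": 7, "revenue": 8, "effort": 8},
}

# Rank initiatives from highest to lowest score.
ranked = sorted(
    backlog,
    key=lambda name: weighted_score(backlog[name], weights),
    reverse=True,
)

for name in ranked:
    print(f"{name}: {weighted_score(backlog[name], weights):.1f}")
```

Because every initiative is rated against the same criteria and weights, the resulting order gives the team a consistent basis for deciding which bucket each item belongs in.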

2. Not including all relevant stakeholders can lead to items placed in the wrong categories.

To know which of your team’s initiatives represent must-haves for your product and which are merely should-haves, you will need as much context as possible.

For example, you might need someone from your sales team to let you know how important (or unimportant) prospective buyers consider a proposed new feature.

One pitfall of the MoSCoW method is that you could make poor decisions about where to slot each initiative unless your team receives input from all relevant stakeholders.

3. Team bias for (or against) initiatives can undermine MoSCoW’s effectiveness.

Because MoSCoW does not include an objective scoring method, your team members can fall victim to their own opinions about certain initiatives.

One risk of using MoSCoW prioritization is that a team can mistakenly think MoSCoW itself represents an objective way of measuring the items on their list. They discuss an initiative, agree that it is a “should have,” and move on to the next.

But your team will also need an objective and consistent framework for ranking all initiatives. That is the only way to minimize your team’s biases in favor of items or against them.

When Do You Use the MoSCoW Method for Prioritization?

MoSCoW prioritization is effective for teams that want to include representatives from the whole organization in their process. You can capture a broader perspective by involving participants from various functional departments.

Another reason you may want to use MoSCoW prioritization is it allows your team to determine how much effort goes into each category. Therefore, you can ensure you’re delivering a good variety of initiatives in each release.

What Are Best Practices for Using MoSCoW Prioritization?

If you’re considering giving MoSCoW prioritization a try, here are a few steps to keep in mind. Incorporating these into your process will help your team gain more value from the MoSCoW method.

1. Choose an objective ranking or scoring system.

Remember, MoSCoW helps your team group items into the appropriate buckets—from must-have items down to your longer-term wish list. But MoSCoW itself doesn’t help you determine which item belongs in which category.

You will need a separate ranking methodology. You can choose from many, such as:

- Weighted scoring

- Value vs. complexity

- Buy-a-feature

- Opportunity scoring

For help finding the best scoring methodology for your team, check out ProductPlan’s article: 7 strategies to choose the best features for your product.

2. Seek input from all key stakeholders.

To make sure you’re placing each initiative into the right bucket—must-have, should-have, could-have, or won’t-have—your team needs context.

At the beginning of your MoSCoW process, your team should consider which stakeholders can provide valuable context and insights. Sales? Customer success? The executive staff? Product managers in another area of your business? Include them in your initiative scoring process if you think they can help you see opportunities or threats your team might miss.

3. Share your MoSCoW process across your organization.

MoSCoW gives your team a tangible way to show your organization how you are prioritizing initiatives for your products or projects.

The method can help you build company-wide consensus for your work, or at least help you show stakeholders why you made the decisions you did.

Communicating your team’s prioritization strategy also helps you set expectations across the business. When they see your methodology for choosing one initiative over another, stakeholders in other departments will understand that your team has thought through and weighed all decisions you’ve made.

If any stakeholders have an issue with one of your decisions, they will understand that they can’t simply complain—they’ll need to present you with evidence to alter your course of action.

Related Terms

2×2 prioritization matrix / Eisenhower matrix / DACI decision-making framework / ICE scoring model / RICE scoring model

The Ultimate Guide to Product Prioritization + 8 Frameworks

Lisa Dziuba

Updated: August 28, 2024 - 26 min read

One of the most challenging aspects of Product Management is prioritization. If you’ve transitioned to Product from another discipline, you might already think you know how to do it. You choose which task to work on first, which deadline needs to be met above all others, and which order to answer your emails in.

Priorities, right? Wrong!

In product management, prioritization is on a whole other level! The engineers are telling you that Feature A will be really cool and will take you to the next level. But a key stakeholder is gently suggesting that Feature B should be included in V1. Finally, your data analyst is convinced that Feature B is completely unnecessary and that users are crying out for Feature C.

Who decides how to prioritize the features? You do.

Prioritization is an essential part of the product management process and product development. It can feel daunting, but for a successful launch, it has to be done.

Luckily, a whole community of Product experts has come before you. They’ve built great things, including some excellent prioritization frameworks!

Quick summary

Here’s what we’ll cover in this article:

- The benefits and challenges of prioritization

- The best prioritization frameworks and when to use them

- How real Product Leaders implement prioritization at Microsoft, Amazon, and HSBC

- Common prioritization mistakes

- Frequently Asked Questions

Benefits and challenges of prioritization

Before we dive into the different prioritization models, let’s talk about why prioritization is so important and what holds PMs back.

Benefits of effective feature prioritization

Enhanced focus on key objectives: Prioritization allows you to concentrate on tasks that align closely with your product's core goals. For example, when Spotify prioritized personalized playlists, it significantly boosted user engagement, aligning perfectly with its goal of providing a unique user experience.

Resource optimization: You can allocate your team’s time and your company’s resources more efficiently. Focusing on fewer, more impactful projects can lead to greater innovation and success.

Improved decision-making: When you prioritize, you're essentially making strategic decisions about where to focus efforts. This clarity in decision-making can lead to more successful outcomes, avoiding the pitfalls of cognitive biases like recency bias and the sunk cost fallacy.

Strategic focus: Prioritization aligns tasks with the company's broader strategic goals, ensuring that day-to-day activities contribute to long-term objectives.

Consider the example of Apple Inc. under the leadership of Steve Jobs. One of Jobs' first actions when he returned to Apple in 1997 was to slash the number of projects and products the company was working on.

Apple refocused its efforts on just a handful of key projects. This ruthless prioritization allowed Apple to focus on quality rather than quantity, leading to the development of groundbreaking products like the iPod, iPhone, and iPad.

Stress reduction: From customer interactions to executive presentations, the responsibilities of a PM are vast and varied, and they can lead to burnout if not managed well. Clear priorities make that workload more manageable. For more on this, check out this talk by Glenn Wilson, Google Group PM, on Play the Long Game When Everything Is on Fire.

Challenges of prioritization

Managing stakeholder expectations: Different stakeholders may have varying priorities. For instance, your engineering team might prioritize feature development, while marketing may push for more customer-centric enhancements. Striking a balance can be challenging.

Adapting to changing market conditions: The market is dynamic, and priorities can shift unexpectedly. When the pandemic hit, Zoom had to quickly reprioritize to cater to a massive surge in users, emphasizing scalability and security over other planned enhancements.

Dealing with limited information: Even in the PM & PMM world, having a strong data-driven team is more often a dream than a reality. And even when there is data, you can’t know everything. Amazon’s decision to enter the cloud computing market with AWS was initially seen as a risky move, but they prioritized the gamble and it paid off spectacularly.

Limited resources: Smaller businesses and startups don’t have the luxury of calmly building lots of small features, hoping that some of them will improve the product. The less funding a company has, the fewer mistakes (iterations) it can afford to make when building an MVP or figuring out Product-Market Fit.

Bias: If you’ve read The Mom Test, you probably know that people will lie about their experience with your product to make you feel comfortable. This means that product prioritization can be influenced by biased opinions, leaving “nice-to-have” features at the top of the list.

Lack of alignment: Different teams can have varying opinions as to what is “important”. When these differences aren’t addressed, product prioritization can become a fight between what brings Product-Led Growth, more leads, higher Net Promoter Score, better User Experience, higher retention, or lower churn. And lack of alignment is far from the only issue startups face when prioritizing features.

Prioritization Frameworks

There are a lot of prioritization models for PMs to employ. While it’s great to have so many tools at your disposal, it can also be a bit overwhelming. You might even ask yourself which prioritization framework you should…prioritize.

In reality, each model is like a different tool in your toolbox. Just as a hammer is better than a wrench at driving nails, each model suits a particular type of prioritization task. The first step is to familiarize yourself with the most trusted frameworks out there. So, without further ado, let’s get started.

The MoSCoW method

Known as the MoSCoW Prioritization Technique or MoSCoW Analysis, MoSCoW is a method used to easily categorize what’s important and what’s not. The name is an acronym of four prioritization categories: Must have, Should have, Could have, and Won’t have.

It’s a particularly useful tool for communicating to stakeholders what you’re working on and why.

According to MoSCoW, all the features go into one of four categories:

Must Have: These are the features that will make or break the product. Without them, the user will not be able to get value from the product or won’t be able to use it. These are the “painkillers” that form the why behind your product, and often are closely tied to how the product will generate revenue.

Should Have: These are important features but are not needed to make the product functional. Think of them as your “second priorities”. They could be enhanced options that address typical use cases.

Could Have: These are often seen as nice-to-have items: not critical, but welcome. These are “vitamins”, not painkillers. They might be integrations and extensions that enhance users’ workflow.

Won’t Have: Similar to the “money pit” in the impact–effort matrix framework, these are features that are not worth the time or effort they would require to develop.

Pros of using this framework: MoSCoW is ideal when looking for a simplified approach that can involve the less technical members of the company and one that can easily categorize the most important features.

Cons of using this framework: It is difficult to set the right number of must-have features and, as a result, your Product Backlog may end up with too many features that tax the development team.

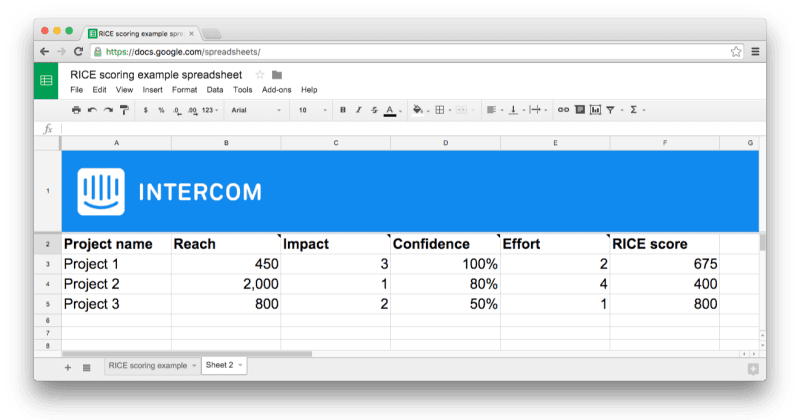

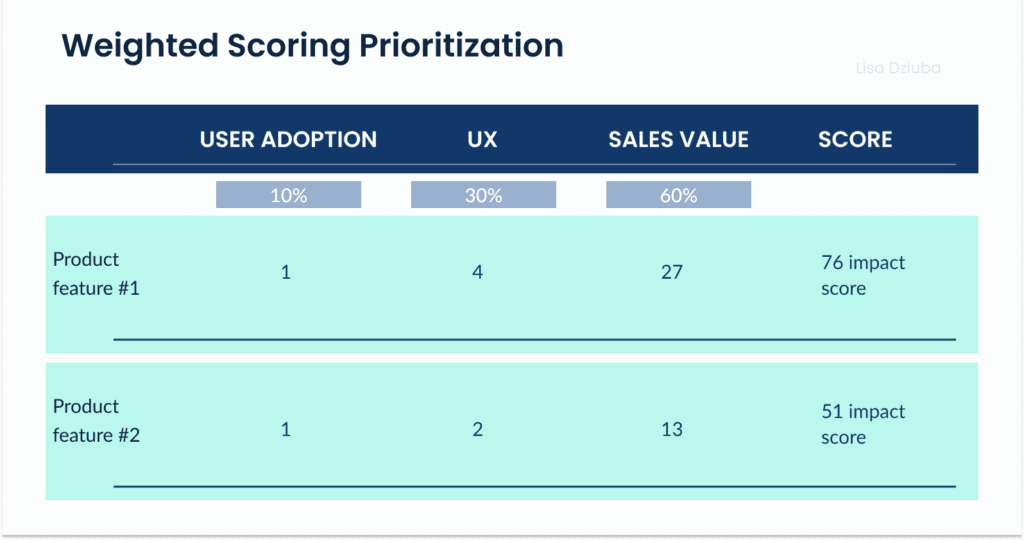

RICE scoring

Developed by the Intercom team, the RICE scoring system compares Reach, Impact, Confidence, and Effort.

Reach centers the focus on the customers by thinking about how many people will be impacted by a feature or release. You can measure this using the number of people who will benefit from a feature in a certain period of time. For example, “How many customers will use this feature per month?”

Now that you’ve thought about how many people you’ll reach, it’s time to think about impact: how much each of those people will be affected. Think about the goal you’re trying to reach. It could be to delight customers (measured in positive reviews and referrals) or reduce churn.

Intercom recommends a multiple-choice scale:

3 = massive impact

2 = high impact

1 = medium impact

0.5 = low impact

0.25 = minimal impact

A confidence percentage expresses how secure team members feel about their assessments of reach and impact. Its effect is to de-prioritize features that are too risky.

Generally, anything above 80% is considered a high confidence score, and anything below 50% is unqualified.

Considering effort helps balance cost and benefit. In an ideal world, everything would be high-impact/low-effort, although this is rarely the case. You’ll need information from everyone involved (designers, engineers, etc.) to calculate effort.

Effort is usually estimated in person-months: the amount of work one team member can do in a month, which will naturally be different across teams. Estimate how much work the project will take from each team member working on it. The more time allotted to a project, the higher the reach, impact, and confidence will need to be to make it worth the effort.

Calculating a RICE score

Now you should have four numbers, one for each of the four categories. To calculate your score, multiply Reach, Impact, and Confidence, then divide the result by Effort.
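The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not Intercom's own tooling: the `Feature` class, the sample features, and their numbers are hypothetical, and the sketch assumes confidence is entered as a fraction (0.8 for 80%) and effort in person-months.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # people affected per period, e.g. customers per month
    impact: float      # multiple-choice scale: 3, 2, 1, 0.5 or 0.25
    confidence: float  # fraction between 0 and 1 (assumption: 0.8 means 80%)
    effort: float      # person-months (assumed unit)


def rice_score(feature: Feature) -> float:
    """Reach x Impact x Confidence, divided by Effort."""
    if feature.effort <= 0:
        raise ValueError("effort must be greater than zero")
    return feature.reach * feature.impact * feature.confidence / feature.effort


# Hypothetical features with made-up estimates.
features = [
    Feature("Onboarding checklist", reach=2000, impact=1, confidence=0.8, effort=2),
    Feature("Dark mode", reach=500, impact=0.5, confidence=1.0, effort=1),
    Feature("SSO integration", reach=300, impact=3, confidence=0.5, effort=3),
]

# Highest score first.
ranked = sorted(features, key=rice_score, reverse=True)
for feature in ranked:
    print(f"{feature.name}: {rice_score(feature):.1f}")
```

With these sample numbers the scores come out at 800, 250, and 150. Note how the low confidence and high effort of the third feature pull it to the bottom despite its massive impact rating.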

Pros of using this framework:

Its spreadsheet format and database approach are awesome for data-focused teams. This method also filters out guesswork and the “loudest voice” factor because of the confidence metric. For teams that have a high volume of hypotheses to test, having a spreadsheet format is quick and scalable.

Cons of using this framework:

The RICE format might be hard to digest if your startup team consists mainly of visual thinkers. When you move fast, it’s essential to use a format that everyone will find comfortable. When there are 30+ possible features for complex products, this becomes a long spreadsheet to digest.

Impact–Effort Matrix

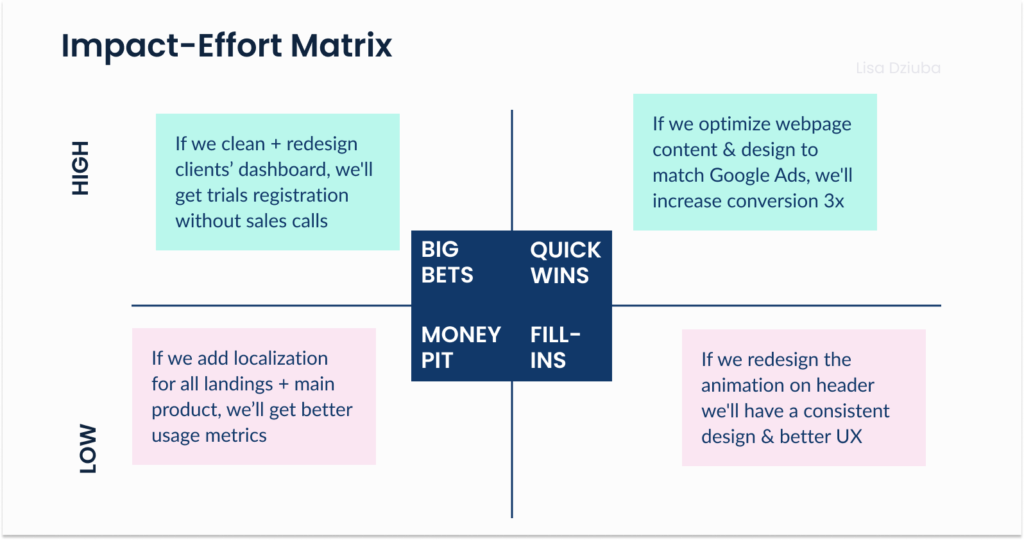

The Impact–Effort Matrix is similar to the RICE method but better suited to visual thinkers. This 2-D matrix plots the “value” (impact) of a feature for the user vs the complexity of development, otherwise known as the “effort”.

When using the impact–effort matrix, the Product Owner first adds all features or product hypotheses. Then the team that executes on these product hypotheses votes on where to place the features on the impact and effort dimensions. Each feature ends up in one of 4 quadrants:

Quick wins: Low-effort, high-impact features or ideas that will bring growth.

Big bets: High effort but high impact. These have the potential to make a big difference but must be well-planned. If your hypothesis fails here, you waste a lot of development time.

Fill-ins: Low value but also low effort. Fill-ins don’t take much time, but they should still only be worked on if other, more important tasks are complete. These are good tasks to focus on while waiting for blockers on higher-priority features to be worked out.

Money pit: Low-value, high-effort features are detrimental to morale and the bottom line. They should be avoided at all costs.
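The four quadrants above can be sketched as a small classification function. This is only an illustration under stated assumptions: the 1 to 10 voting scale, the 5.5 midpoint threshold, averaging the team's votes, and the sample features are all hypothetical choices, since the framework itself does not fix a scale or a voting rule.

```python
from statistics import mean


def quadrant(impact: float, effort: float, threshold: float = 5.5) -> str:
    """Place a feature in one of the four impact-effort quadrants.

    Assumes both scores are on a 1-10 scale; `threshold` splits low from high.
    """
    high_impact = impact >= threshold
    high_effort = effort >= threshold
    if high_impact and not high_effort:
        return "Quick win"
    if high_impact and high_effort:
        return "Big bet"
    if not high_impact and not high_effort:
        return "Fill-in"
    return "Money pit"


# Hypothetical team votes per feature (one number per team member).
votes = {
    "Bulk export": {"impact": [8, 9, 7], "effort": [3, 2, 4]},
    "Offline mode": {"impact": [9, 8, 9], "effort": [8, 9, 9]},
    "Tooltip copy": {"impact": [2, 3, 3], "effort": [1, 2, 2]},
    "Custom themes engine": {"impact": [3, 2, 4], "effort": [8, 7, 9]},
}

# Average the votes on each dimension, then look up the quadrant.
placements = {
    name: quadrant(mean(scores["impact"]), mean(scores["effort"]))
    for name, scores in votes.items()
}
for name, label in placements.items():
    print(f"{name}: {label}")
```

Averaging is just one way to combine votes; a team could equally use the median or discuss outliers before placing a feature.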

Pros of using this framework: It allows quick prioritization and works well when the number of features is small. It can be shared across the whole startup team, as it’s easy to understand at first glance.